Artificial Intelligence is changing the way people work, learn, and build online tools. From chatbots and AI writers to smart search engines and automation software, AI is now everywhere! But behind all these powerful systems, there is one important technology that makes them work — the Large Language Model, also called LLM.

Today, many businesses, developers, and content creators use LLMs to generate text, answer questions, write code, summarize content, and even automate daily tasks. However, many beginners still ask an important question: What is LLM in AI and why is everyone talking about it?

In simple words, an LLM is a type of AI model trained on huge amounts of text data so it can understand and generate human-like language. These models can predict words, understand context, and create responses that sound natural and intelligent. Popular AI tools like OpenAI, Google Gemini, and Anthropic Claude all use advanced LLM technology.

LLMs are now becoming the backbone of modern AI applications, SaaS platforms, AI agents, customer support bots, automation systems, and productivity tools. They help businesses save time, improve user experience, and automate complex tasks with ease.

In this guide, you will learn what an LLM is, how it works, why it matters in AI, and how beginners can understand this technology without any technical confusion. By the end of this article, even a 10th class student will clearly understand the basics of Large Language Models in AI!

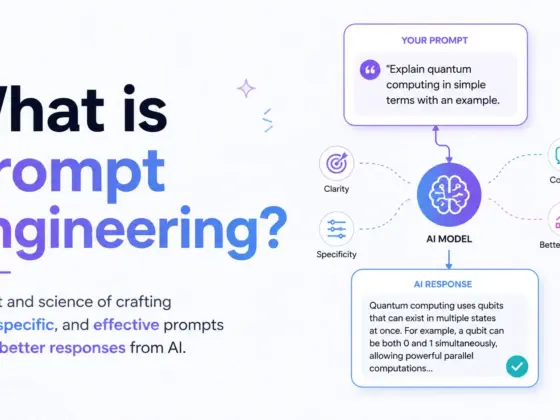

Understanding Structured Prompting

Structured output prompting is a technique used to make AI models generate responses in a fixed and organized format like JSON, XML, or YAML instead of normal human conversation. This method is very important in modern AI applications because apps, APIs, and automation systems need clean and machine-readable responses to work properly.

Normally, Large Language Models (LLMs) generate text in a natural conversational style. That works well for chatting with humans, but it becomes a big problem when developers want AI to interact with software systems. For example, if an AI chatbot returns extra text, missing fields, or broken formatting, the entire application workflow can fail!

This is where structured output prompting becomes useful. Developers give the AI a predefined structure or schema and instruct it to respond only in that format. The result becomes easier to parse, validate, and automate.

The most common structured formats are:

- JSON

- XML

- YAML

- CSV

- Markdown tables

Among these, JSON is the most popular because it is lightweight, easy to read, and supported by almost every programming language and API.

LLMs generate responses by predicting tokens probabilistically. That means the AI chooses the next word based on probability, not certainty. Because of this, outputs can vary every time unless the prompt strongly guides the structure and formatting.

Developers use structured AI responses to build:

- AI agents

- Automation workflows

- SaaS applications

- Chatbots

- API integrations

- Data extraction tools

- AI-powered dashboards

Without proper structure, AI outputs become unreliable for production-level systems.

1. Why Traditional Prompting Fails

Traditional prompting often creates unpredictable outputs because the AI tries to sound natural instead of following strict formatting rules. This causes many real-world problems in applications and automations.

Some common issues include:

- Hallucinated keys in JSON objects

- Inconsistent formatting between responses

- Extra explanations outside the expected format

- Broken syntax that crashes parsers

- Missing required fields

- Random text before or after JSON

For example, an app may expect this:

{

"name": "John",

"age": 25

}But the AI might respond like this instead:

Sure! Here is the data:

{

"name": "John",

"age": 25

}Even a small extra sentence can break automation pipelines or API integrations. This is why developers cannot rely on basic prompting alone for production systems.

2. What “Deterministic Output” Really Means

Deterministic output means the AI generates responses in a predictable and consistent structure every time. The focus is not only on correct information but also on formatting reliability.

A deterministic AI response should be:

- Predictable

- Parser-safe

- Repeatable

- Automation-ready

- Schema-compliant

This allows developers to directly connect AI with software systems without manually fixing outputs.

For example, a structured AI response can safely move through:

- APIs

- Databases

- Frontend applications

- Validation systems

- Workflow automations

Modern AI development is shifting toward schema-based prompting because businesses need reliable AI outputs that machines can understand easily. This is one of the biggest reasons why structured prompting has become a core part of advanced AI engineering.

Real-World Use Cases for Structured AI Outputs

Structured AI outputs are now used in almost every modern AI application. Businesses no longer use AI only for chatting or content writing. Today, AI is deeply connected with APIs, databases, automation workflows, and software systems. Because of this, properly formatted JSON responses have become extremely important.

Perfect JSON allows applications to process AI responses automatically without human correction. It improves reliability, reduces system errors, and makes AI integration much faster.

1. AI Agents and Automation

AI agents heavily depend on structured outputs to perform tasks correctly. These agents often communicate with multiple tools, APIs, and services in a single workflow.

For example, an autonomous AI agent may:

- Read user input

- Extract task details

- Call external APIs

- Store data in a database

- Trigger another automation

All these steps require clean and machine-readable responses.

Structured JSON helps in:

- Workflow orchestration

- Tool calling

- Autonomous task execution

- Multi-step automation

- API chaining

If the JSON structure breaks, the entire workflow can fail instantly. This is why structured prompting is critical for reliable AI agents.

Many modern automation platforms now integrate LLMs directly into workflows for:

- Email automation

- CRM updates

- AI customer support

- Task management

- Data extraction

- AI scheduling systems

Without deterministic outputs, these automations become unstable and unpredictable.

2. SaaS and Web Applications

SaaS platforms and web applications use structured AI responses to display data properly on the frontend and process backend operations safely.

For example, an AI-powered dashboard may require responses like:

{

"title": "Sales Report",

"revenue": 25000,

"growth": "18%"

}This structured data can directly render inside web applications without extra cleanup.

Structured outputs are commonly used for:

- Frontend rendering

- Dynamic UI generation

- Database insertion

- Validation pipelines

- Analytics dashboards

- Form processing

If AI returns inconsistent formatting, the application may crash or show incorrect information to users.

This is why developers prefer schema-based prompting techniques for production-grade SaaS products.

3. AI APIs and Function Calling

Modern AI APIs now provide dedicated features for structured responses because developers demand reliable outputs.

Popular AI providers like OpenAI API Platform support:

- Function calling

- JSON mode

- Structured outputs

- Schema validation

These features force the model to generate parser-friendly responses that applications can trust.

For example, function calling allows AI models to return structured arguments instead of plain text. This makes AI integrations more accurate and automation-ready.

Structured outputs are especially useful for:

- AI integrations

- Backend services

- API response handling

- Enterprise software

- AI workflow engines

As AI systems continue to grow, structured automation is becoming one of the most important parts of modern AI engineering. Businesses now prefer machine-readable AI outputs because they are safer, faster, and much easier to scale.

Why LLMs Still Break JSON

Large Language Models (LLMs) are powerful, but they are not naturally designed to generate perfect JSON every time. Their main goal is to predict the next token based on probability, not to follow strict programming rules. This is why AI often creates malformed JSON, inconsistent structures, or responses that break applications.

Even advanced AI models sometimes fail when developers ask for machine-readable outputs. A single missing comma or extra sentence can completely crash an automation workflow, API pipeline, or frontend application.

This problem becomes even more serious in:

- AI agents

- SaaS platforms

- Workflow automation

- API integrations

- Function calling systems

- Database pipelines

Understanding these common JSON issues helps developers create better prompts and more reliable AI systems.

1. Extra Text Outside JSON

One of the most common LLM JSON errors is adding unnecessary text before or after the JSON response.

For example, developers may ask:

“Return valid JSON only.”

But the AI still responds like this:

Sure! Here’s your JSON:

{

"name": "Alice",

"age": 24

}This looks harmless to humans, but parsers fail because the extra sentence is not valid JSON.

Other common problems include:

- Markdown wrappers

- Code fences

- Explanations

- Notes

- Comments outside objects

Bad example:

```json

{

"status": "success"

}

Many parsers cannot process markdown formatting directly. Developers then need additional cleanup logic, which increases complexity and bugs.

This is why deterministic prompting always requires:

- No explanations

- No markdown

- No extra text

- Pure parser-safe JSON only

### 2. Missing Quotes or Commas

Another major issue is syntax corruption. AI models sometimes generate invalid JSON syntax that machines cannot parse.

Malformed JSON examples:

```json id="6rtnw4"

{

name: "John",

age: 30

}Problem:

- Missing quotes around keys

Another example:

{

"name": "John"

"age": 30

}Problem:

- Missing comma between fields

Even small formatting mistakes create parser errors like:

- Unexpected token

- Invalid character

- JSON parse failed

- Unterminated object

- Missing delimiter

These issues become dangerous in production systems because one broken response may stop an entire workflow or automation chain.

LLMs generate text token-by-token, so long nested structures increase the chance of syntax mistakes.

3. Inconsistent Field Names

LLMs also struggle with consistent naming conventions. Sometimes the model changes field names even when instructions are clearly provided.

Example:

Expected:

{

"customer_name": "Rahul"

}AI output:

{

"name": "Rahul"

}This creates schema mismatch problems because applications expect exact field names.

Common issues include:

- Renamed keys

- Missing required properties

- Unexpected fields

- Different capitalization

- Random structure changes

For example:

{

"CustomerName": "Rahul"

}instead of:

{

"customer_name": "Rahul"

}Even tiny differences can break APIs, database inserts, and frontend rendering systems.

4. Nested Structure Errors

Nested JSON structures are even harder for LLMs to generate correctly. As complexity increases, AI models often create array and object mismatches.

Bad example:

{

"users": {

"name": "Aman",

"age": 25

}

}Problem:

- Expected an array but received an object

Correct version:

{

"users": [

{

"name": "Aman",

"age": 25

}

]

}Other common nested structure problems:

- Missing brackets

- Unclosed objects

- Array nesting mistakes

- Incorrect parent-child hierarchy

- Mixed data types

These issues become common when prompts are vague or schemas are not clearly defined.

Complex AI systems that use nested workflows require strict JSON validation to prevent automation failures.

Rules for Getting Reliable Structured Outputs

Getting deterministic AI outputs is not just about asking politely for JSON. Developers must use carefully designed prompting strategies that reduce ambiguity and guide the model toward stable, parser-safe responses.

Modern AI systems use schema enforcement techniques, validation pipelines, and structured prompting rules to improve reliability.

Here are the most important principles for reliable JSON generation.

1. Be Explicit About Output Format

The first rule of deterministic prompting is clarity. Never assume the AI understands your formatting expectations automatically.

Always give direct instructions like:

- Output ONLY valid JSON

- Do not include explanations

- Do not use markdown

- No code fences

- Return parser-safe JSON only

Weak prompt:

“Give me user data in JSON.”

Strong prompt:

“Output ONLY valid JSON. No markdown. No explanations. Follow the exact schema below.”

Clear instructions reduce hallucinations and formatting drift.

The more precise the instruction, the more stable the output becomes.

2. Provide Exact JSON Schema

A schema acts like a blueprint for the AI model. Without a schema, the model guesses the structure probabilistically.

A proper schema should define:

- Field names

- Required properties

- Data types

- Enum values

- Nested objects

- Arrays

- Validation rules

Example schema:

{

"name": "string",

"age": "number",

"status": ["active", "inactive"]

}This tells the model exactly what is expected.

Schemas improve:

- Consistency

- Predictability

- Validation accuracy

- Automation reliability

For complex systems, developers often use formal JSON Schema definitions to enforce strict output generation.

3. Use Few-Shot Examples

LLMs learn patterns very effectively through examples. This is called few-shot prompting.

Instead of only describing the format, show the model good and bad examples.

Good example:

{

"product": "Laptop",

"price": 50000

}Bad example:

Here is your product data:

{

"product": "Laptop"

}When AI sees multiple correct examples, it starts imitating the structure more accurately.

This works because LLMs are highly pattern-based systems.

Few-shot prompting is especially useful for:

- Nested JSON

- Complex schemas

- API responses

- Tool calling

- Structured automation

4. Limit Creativity Settings

Creativity settings directly affect output stability.

Higher creativity increases randomness, which also increases JSON errors.

Important settings include:

- Temperature

- top_p

- Frequency penalty

For deterministic generation:

- Use low temperature values like

0or0.1 - Keep top_p low when possible

Lower creativity makes the model more predictable and reduces hallucinations.

High temperature settings are useful for creative writing, but dangerous for structured generation.

Reliable automation systems usually prioritize consistency over creativity.

5. Force Strict Validation

Even with perfect prompting, AI can still make mistakes occasionally. This is why production systems always use validation layers.

Common validation strategies include:

- JSON parsers

- Schema validation

- Retry loops

- Automatic output repair

- Error correction pipelines

Typical workflow:

- Generate AI response

- Validate JSON

- Check schema compliance

- Retry if validation fails

- Repair malformed output if needed

This layered approach greatly improves reliability in enterprise AI systems.

Many modern AI frameworks now combine:

- Deterministic prompting

- Schema enforcement

- Function calling

- Validation pipelines

to create highly reliable structured AI responses.

As AI applications continue growing, structured generation and schema-based prompting are becoming essential skills for developers, AI engineers, and automation experts.

JSON Mode Explained

Modern Large Language Models (LLMs) now provide special features that help developers generate reliable and machine-readable JSON responses. These features are commonly called JSON Mode, structured outputs, or schema-guided generation.

The goal of JSON mode is simple: make AI responses predictable, parser-safe, and automation-friendly.

Without JSON mode, AI models often generate:

- Extra explanations

- Invalid syntax

- Broken formatting

- Missing fields

- Hallucinated keys

To solve these problems, major AI providers now offer built-in structured output systems.

These systems are heavily used in:

- AI agents

- SaaS applications

- Workflow automations

- Tool calling systems

- API integrations

- Enterprise AI platforms

1. OpenAI JSON Mode

OpenAI Platform introduced structured output features to improve reliability in AI applications.

One important feature is response_format.

Developers can force models to return valid JSON instead of natural language responses.

Example idea:

{

"response_format": {

"type": "json_object"

}

}This significantly reduces malformed JSON problems.

Benefits of OpenAI JSON mode:

- Strict JSON generation

- Cleaner API responses

- Better parser compatibility

- Reliable automation workflows

- Easier frontend integration

OpenAI also supports:

- Function calling

- Tool calling

- Structured outputs

- Schema validation

However, JSON mode still has some limitations:

- Very large nested structures may fail

- Complex schemas can still break occasionally

- Some models may add unexpected fields

- Validation is still recommended

Because of this, developers usually combine JSON mode with schema validators and retry systems.

2. Anthropic Structured Outputs

Anthropic Claude uses a different approach for structured AI responses.

Anthropic models are known for strong instruction-following behavior and controlled outputs.

Key structured features include:

- Tool use

- XML-based formatting

- Constrained outputs

- Structured response guidance

Many developers use XML structures with Claude because XML is easier to constrain for some workflows.

Example:

<response>

<name>Rahul</name>

<age>25</age>

</response>Anthropic’s tool use system allows AI to generate structured arguments for external functions and workflows.

This is extremely useful for:

- AI agents

- Workflow automation

- Enterprise systems

- Multi-step reasoning pipelines

Claude models are often preferred when developers need:

- Safer outputs

- Better instruction accuracy

- Reduced hallucinations

- Controlled formatting behavior

3. Gemini Structured Generation

Google Gemini API also supports structured generation features for developers building AI applications.

Gemini focuses heavily on schema-guided generation and response validation.

Key capabilities include:

- JSON schema guidance

- Structured response generation

- Validation-friendly outputs

- Tool integration support

Gemini models can generate structured responses based on predefined schemas, which improves consistency and reduces formatting errors.

This helps developers create:

- AI-powered apps

- Smart assistants

- Enterprise AI systems

- Data extraction pipelines

Google’s structured generation features are especially useful when applications require:

- Reliable JSON formatting

- Validation-ready outputs

- Multi-modal AI systems

- Production-grade integrations

4. Local Models and Open-Source LLMs

Open-source LLMs are also becoming powerful for structured output generation.

Popular local AI tools include:

These tools allow developers to run AI models locally on their own systems.

Many open-source frameworks now support:

- Constrained decoding

- Grammar enforcement

- JSON schema generation

- Structured prompting

Constrained decoding is a powerful technique where the model is mathematically restricted to generate only valid outputs.

This greatly reduces:

- Syntax corruption

- Invalid tokens

- Schema mismatch errors

Developers often combine local models with:

- Guardrails

- Validation pipelines

- Output repair systems

- Structured parsers

This approach is becoming popular for privacy-focused AI systems and enterprise deployments where cloud APIs are not preferred.

As structured AI workflows continue growing, JSON mode and schema-guided generation are becoming standard features across modern LLM ecosystems.

Using Pydantic for Schema Enforcement

Structured prompting becomes much more powerful when combined with validation systems. One of the best tools for this is Pydantic.

Pydantic helps developers validate AI outputs automatically and enforce strict schemas. It is now widely used in AI engineering, automation workflows, and production-grade LLM systems.

Many advanced AI applications use Pydantic to ensure that AI responses always follow the expected structure.

1. What Is Pydantic?

Pydantic Official Website is a Python validation library used for data parsing and schema enforcement.

It allows developers to define typed models using Python classes.

Pydantic automatically validates:

- Data types

- Required fields

- Nested objects

- Arrays

- Enums

- Constraints

Example:

from pydantic import BaseModel

class User(BaseModel):

name: str

age: intThis creates a strict schema for user data.

If invalid data is provided, Pydantic raises validation errors automatically.

This makes it extremely useful for structured AI outputs.

2. How Pydantic Improves AI Reliability

LLMs sometimes generate inconsistent or malformed responses. Pydantic helps solve this problem through automatic validation.

Benefits include:

- Strict field enforcement

- Automatic error detection

- Schema validation

- Retry handling

- Safer automation pipelines

For example, if AI returns:

{

"name": "Aman",

"age": "twenty"

}Pydantic immediately detects that "age" should be an integer, not a string.

This improves reliability in:

- APIs

- AI agents

- SaaS applications

- Backend systems

- Database workflows

Instead of blindly trusting AI outputs, developers can validate everything before processing.

3. Example Pydantic Model

Here is a practical validation example:

from pydantic import BaseModel

class Product(BaseModel):

name: str

price: float

in_stock: boolExpected AI response:

{

"name": "Laptop",

"price": 49999.99,

"in_stock": true

}Validation workflow:

- AI generates JSON

- Pydantic parses the response

- Invalid fields trigger errors

- System retries or repairs output

- Clean data moves into application

This workflow greatly improves production reliability.

4. Combining Pydantic with LLM APIs

Modern AI frameworks now combine Pydantic directly with LLM APIs.

One popular library is Instructor Library GitHub.

Instructor helps developers:

- Parse structured AI outputs

- Automatically validate responses

- Retry invalid generations

- Enforce schemas using Pydantic

This creates highly reliable AI pipelines with minimal manual parsing.

Typical workflow:

- Send prompt to LLM

- Define Pydantic schema

- Parse AI response automatically

- Retry if validation fails

- Return clean structured data

This method is becoming one of the most advanced approaches for schema enforcement AI systems.

Pydantic prompting is especially powerful for:

- AI agents

- Structured automation

- Enterprise APIs

- Data extraction tools

- Production AI systems

As AI applications become more complex, schema validation and typed structured outputs are becoming essential for safe and scalable AI development.

Copy-Paste Prompt Frameworks

Prompt templates make structured output prompting much easier and more reliable. Instead of writing prompts from scratch every time, developers use reusable frameworks that consistently generate parser-safe JSON responses.

These templates help reduce:

- Broken JSON

- Hallucinated keys

- Syntax errors

- Formatting inconsistency

- Automation failures

Below are some of the most effective JSON prompting templates used in real-world AI applications.

1. Basic JSON Prompt Template

This is the simplest structure for clean JSON generation.

Template:

You are a JSON generator.

Output ONLY valid JSON.

Do not include explanations.

Do not use markdown formatting.

Schema:

{

"name": "string",

"age": "number"

}Expected output:

{

"name": "Rahul",

"age": 24

}This template works well for:

- Simple APIs

- Forms

- User profiles

- Basic automation

2. Strict Schema Prompt Template

For production systems, developers use stricter instructions.

Template:

Return ONLY valid minified JSON.

Rules:

- No markdown

- No explanations

- No additional keys

- Follow schema exactly

- All fields are required

Schema:

{

"status": "success | failed",

"message": "string",

"code": "number"

}This reduces schema mismatch issues significantly.

Before:

Sure! Here is your response:

{

"status": "success"

}After:

{"status":"success","message":"Completed","code":200}This format is ideal for:

- APIs

- AI agents

- SaaS products

- Backend systems

3. Nested Object Prompt Template

Nested JSON structures require stronger guidance.

Template:

Output ONLY valid JSON.

Schema:

{

"customer": {

"name": "string",

"email": "string"

},

"orders": [

{

"id": "number",

"product": "string"

}

]

}Expected output:

{

"customer": {

"name": "Amit",

"email": "amit@example.com"

},

"orders": [

{

"id": 101,

"product": "Keyboard"

}

]

}This template is useful for:

- E-commerce systems

- CRM platforms

- Workflow orchestration

- Database insertion

4. Array Extraction Template

This template works well for extracting structured lists from text.

Template:

Extract all products from the text.

Return ONLY valid JSON array.

Format:

[

{

"product_name": "string",

"price": "number"

}

]Expected output:

[

{

"product_name": "Laptop",

"price": 55000

},

{

"product_name": "Mouse",

"price": 1200

}

]This is commonly used for:

- OCR systems

- Data extraction

- AI scraping

- Invoice processing

- Resume parsing

5. AI Agent Tool Response Template

AI agents require extremely strict formatting for tool execution.

Template:

You are an AI tool router.

Return ONLY valid JSON.

Schema:

{

"tool": "search | email | calendar",

"arguments": {

"query": "string"

}

}Expected output:

{

"tool": "search",

"arguments": {

"query": "Best AI tools"

}

}This helps AI agents safely interact with:

- APIs

- External tools

- Automation systems

- Multi-agent workflows

Developers often combine these templates with:

- Schema validators

- Pydantic

- Retry loops

- Output repair systems

to achieve near-perfect structured generation reliability.

Pro-Level Structured Prompting Strategies

Basic prompting is often not enough for enterprise-grade AI systems. Large production applications require advanced techniques to improve reliability, consistency, and parser safety.

Modern AI engineering now combines multiple strategies together to achieve near-perfect structured outputs.

1. Chain-of-Thought Hidden from Output

Chain-of-thought prompting helps models reason better internally before generating responses.

However, developers usually hide this reasoning from final outputs to maintain clean JSON formatting.

Typical approach:

- Internal reasoning allowed

- Final response restricted to JSON only

This improves:

- Accuracy

- Logical consistency

- Structured generation quality

without exposing extra text to parsers.

2. Constrained Decoding

Constrained decoding is one of the most advanced reliability techniques.

Instead of letting the AI generate any token freely, the decoding system mathematically limits allowed outputs.

Benefits:

- Prevents invalid syntax

- Blocks malformed JSON

- Reduces hallucinations

- Enforces grammar rules

This technique is widely used in:

- Enterprise AI systems

- Open-source LLM frameworks

- Production automation pipelines

3. Grammar-Based Generation

Grammar prompting forces the model to follow predefined syntax rules.

Developers define allowed structures using:

- JSON grammar

- XML grammar

- Context-free grammars

- Structured schemas

This greatly improves parser-safe generation.

Grammar-based systems are especially useful for:

- Tool calling

- AI agents

- Code generation

- Database workflows

4. Output Repair Pipelines

Even advanced models can occasionally fail. Output repair pipelines automatically fix malformed responses before applications process them.

Common repair strategies include:

- Missing comma correction

- Bracket balancing

- Quote insertion

- Schema cleanup

- Key normalization

This creates an additional safety layer for automation systems.

5. Multi-Step Validation Systems

Enterprise AI systems rarely trust a single AI response directly.

Instead, they use layered validation workflows.

Typical production workflow:

- Generate structured output

- Validate syntax

- Check schema compliance

- Repair errors if needed

- Retry generation if validation fails

- Approve final response

These guardrail systems dramatically improve reliability in large-scale AI deployments.

Modern production-grade AI applications combine:

- Deterministic prompting

- Constrained generation

- Grammar enforcement

- Validation pipelines

- Schema-based repair systems

to create highly reliable structured AI workflows that businesses can safely use at scale.

Tools and Libraries Developers Use

As structured AI outputs become more important, developers are now using specialized libraries and frameworks to improve reliability, schema validation, and deterministic generation. These tools help AI systems generate cleaner JSON, validate outputs automatically, and reduce automation failures.

Below are some of the most popular structured output libraries used in modern AI development.

1. Popular Structured AI Tools

Pydantic

Pydantic is one of the most widely used Python validation libraries for AI schema enforcement.

Main features:

- Data validation

- Typed schemas

- Automatic parsing

- Strict field enforcement

- Nested object support

It is excellent for validating AI-generated JSON responses before using them in applications.

Instructor

Instructor is a powerful library that combines LLM APIs with Pydantic models.

Benefits:

- Automatic retries

- Structured parsing

- Schema enforcement

- Cleaner AI integrations

It is very popular among developers building AI agents and automation systems.

Guardrails AI

Guardrails AI helps developers add safety and validation layers around LLM outputs.

Key capabilities:

- Output validation

- Guardrails enforcement

- Structured generation

- Error correction

It is commonly used in enterprise AI workflows.

LangChain

LangChain is a popular framework for building AI agents and multi-step workflows.

It supports:

- Structured output parsing

- Tool calling

- Agent orchestration

- Validation chains

Many SaaS AI applications use LangChain for production workflows.

Outlines

Outlines focuses on constrained generation and grammar-based structured outputs.

Main advantages:

- Grammar enforcement

- Reliable JSON generation

- Constrained decoding

- Deterministic responses

It is highly useful for advanced AI systems.

Marvin

Marvin simplifies AI engineering using Python-based structured interactions.

It helps developers create:

- Typed AI workflows

- Structured AI tasks

- Reliable parsing systems

DSPy

DSPy is an advanced framework for optimizing prompts and AI pipelines automatically.

It supports:

- Declarative prompting

- Structured optimization

- Modular AI workflows

- Reliable output systems

2. Which Tool Is Best for Beginners?

For beginners, Pydantic and Instructor are usually the best starting choices.

Why?

- Easy to learn

- Strong documentation

- Simple schema validation

- Works well with OpenAI APIs

- Minimal setup required

A beginner-friendly stack often looks like this:

- OpenAI API

- Pydantic

- Instructor

This combination is simple yet powerful for structured AI development.

3. Which Tool Is Best for Production Apps?

Production-grade AI applications usually require multiple layers of reliability.

A professional AI developer stack may include:

- LangChain

- Guardrails AI

- Pydantic

- Outlines

- Retry systems

- Validation pipelines

For enterprise-level reliability, constrained decoding and schema enforcement tools become extremely important.

Large AI systems often combine several frameworks together to create stable, scalable, and automation-ready workflows.