Artificial Intelligence is entering a new phase. For years, most AI systems were reactive — they waited for instructions, generated outputs, and stopped there. But in 2026, the industry is shifting toward something far more powerful: agentic AI.

Instead of simply answering prompts, modern autonomous AI agents can reason through problems, make decisions, use tools, access memory, and execute multi-step tasks with minimal human supervision. This evolution is transforming AI from a passive assistant into an active digital collaborator.

The rise of these intelligent AI systems is being driven by advances in large language models, long-context memory, multimodal capabilities, and AI orchestration frameworks. Businesses are no longer experimenting with isolated chatbots. They are building AI agents that can automate workflows, conduct research, write code, analyze data, manage operations, and even coordinate with other agents.

Table of Contents

- What Is Agentic AI?

- What Are Autonomous AI Agents?

- How AI Agents Work Step-by-Step

- Types of AI Agents

- What Is the ReAct Framework?

- How the ReAct Loop Works

- ReAct vs Chain-of-Thought Prompting

- What Are Agentic Workflows?

- Real-World Agentic Workflow Examples

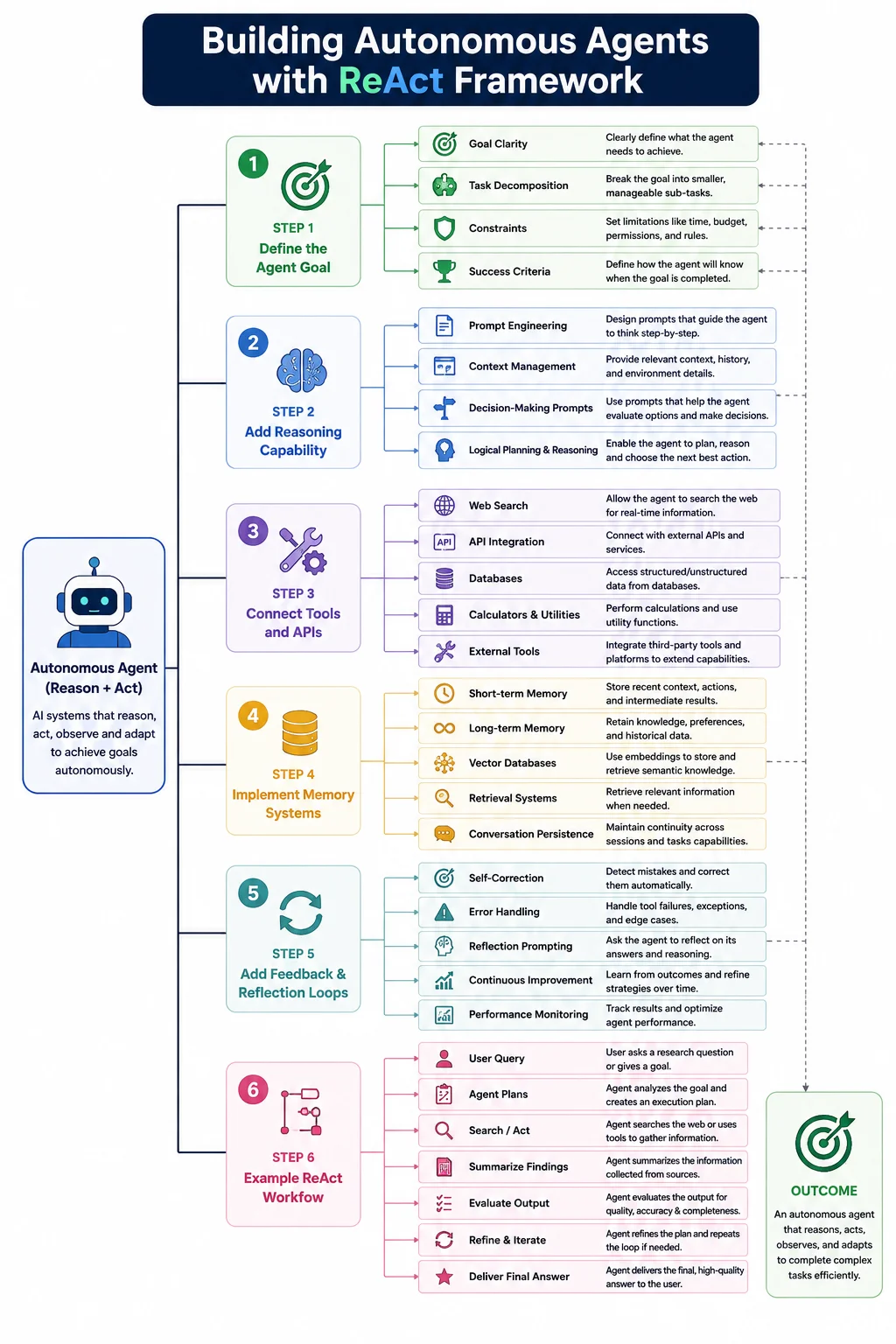

- Building Autonomous Agents with ReAct Framework

- Popular Frameworks for Agentic AI

- Challenges and Risks of Agentic AI

- Future of Agentic AI in 2026 and Beyond

- Best Practices for Building Reliable AI Agents

- Conclusion

That shift is exactly why 2026 is becoming a defining year for agentic workflows. Companies across industries are investing heavily in AI-driven automation because traditional automation tools follow rigid rules, while AI agents can adapt dynamically to changing goals and environments. The difference is significant: rule-based automation executes instructions, but agentic systems can think through what to do next.

What Is Agentic AI?

Agentic AI refers to AI systems designed to operate with a higher level of autonomy, reasoning, and decision-making capability than traditional AI models. Instead of simply responding to a single prompt or command, these systems pursue goals, make plans, take actions, evaluate outcomes, and adapt their behavior dynamically.

In simple terms, traditional AI reacts. Agentic AI acts.

Most conventional AI tools work in a request-response format. You ask a chatbot a question, it generates an answer, and the interaction ends there. Even highly advanced language models often remain passive unless continuously guided by a user. They are powerful generators of content and predictions, but they typically do not independently decide what to do next.

Agentic AI changes that model completely.

An autonomous AI agent is built to achieve objectives rather than merely generate outputs. Instead of waiting for constant human direction, the system can break down a complex goal into smaller tasks, determine the best sequence of actions, use external tools, gather information, analyze feedback, and continue refining its approach until the objective is completed.

For example, a traditional AI assistant might help draft an email when instructed. A goal-driven AI agent, however, could manage the entire workflow — analyze incoming messages, prioritize responses, schedule meetings, retrieve documents, send follow-ups, and notify team members automatically. The difference lies in autonomy and continuous reasoning.

Agentic systems work differently.

An intelligent agent can receive a high-level goal like:

“Research competitors, summarize market trends, create a strategy report, and send it to the marketing team.”

Instead of requiring step-by-step commands, the AI breaks the task into smaller objectives, decides what tools to use, gathers information, evaluates results, and continues iterating until the task is complete.

This is what makes agentic AI fundamentally goal-oriented. These systems are built around goal-driven AI behavior rather than simple input-output interactions.

At the core of agentic AI is autonomous decision-making. The AI does not merely generate text — it plans workflows, reasons through uncertainty, chooses actions, monitors outcomes, and adjusts its strategy dynamically. In many cases, these autonomous systems can interact with APIs, databases, browsers, enterprise software, and other AI agents to complete real-world tasks.

Another major distinction is persistence. Traditional AI systems are session-based and reactive. Agentic AI systems maintain memory, context, objectives, and long-term workflows across multiple steps and environments.

This evolution is pushing AI beyond conversation into operational execution. Modern agentic systems can function as researchers, coding assistants, workflow managers, data analysts, cybersecurity monitors, customer support coordinators, and digital employees capable of handling increasingly complex tasks.

As large language models become more capable, agentic AI is emerging as the bridge between human intent and autonomous execution.

Core Components of Agentic AI

Diagram: the Agentic AI System (Autonomous Goal-Driven AI) is built from five modules:

- Module 1, Perception: sensor input (text, image, audio); input parsing and embedding

- Module 2, Reasoning & Planning: LLM / reasoning engine; chain-of-thought (CoT)

- Module 3, Action & Execution: API calls; tool use and control (code, robot)

- Module 4, Memory & State: short-term memory (STM); long-term memory (vector DB)

- Module 5, Learning & Adaptation: feedback loop and error correction; reinforcement learning (RL). This module feeds back into Memory & State.

Agentic AI systems rely on several foundational capabilities working together in a continuous cycle. These components allow AI agents to behave more like adaptive problem-solvers than static software tools.

1. Memory Systems

One of the most important elements is memory. Advanced AI memory systems allow agents to retain context, remember previous interactions, store task progress, and reference historical information when making decisions.

Without memory, an AI agent behaves like a short-term conversational model. With memory, it can manage long-running workflows and improve consistency over time.
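To make the short-term versus long-term distinction concrete, here is a minimal sketch. It assumes a toy design: the `AgentMemory` class, its method names, and the word-overlap retrieval are all illustrative stand-ins, and a production agent would more likely use embeddings and a vector database for recall.

```python
from collections import deque


class AgentMemory:
    """Toy memory: a bounded short-term buffer plus a searchable long-term store."""

    def __init__(self, short_term_size=3):
        # Short-term memory keeps only the most recent entries.
        self.short_term = deque(maxlen=short_term_size)
        # Long-term memory keeps everything (stand-in for a vector database).
        self.long_term = []

    def remember(self, text):
        self.short_term.append(text)
        self.long_term.append(text)

    def recall(self, query, k=2):
        """Return up to k long-term entries sharing the most words with the query."""
        query_words = set(query.lower().split())
        scored = []
        for entry in self.long_term:
            overlap = len(query_words & set(entry.lower().split()))
            if overlap > 0:
                scored.append((overlap, entry))
        # Highest overlap first; list.sort is stable, so ties keep insertion order.
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [entry for _, entry in scored[:k]]


memory = AgentMemory(short_term_size=2)
memory.remember("User prefers weekly summary reports")
memory.remember("Competitor pricing changed in March")
memory.remember("Report draft sent to marketing team")

print(list(memory.short_term))
print(memory.recall("competitor pricing"))
```

The short-term buffer silently drops the oldest entry once it is full, while the long-term store can still surface it when a later query is relevant.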

2. Planning Mechanisms

Planning enables AI agents to break large objectives into manageable steps. Modern planning agents can prioritize tasks, evaluate dependencies, estimate outcomes, and reorganize strategies dynamically when conditions change.

This gives agentic systems the ability to handle complex workflows instead of isolated instructions.
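One simple way to picture dependency-aware planning is a topological sort over subtasks. The sketch below uses Python's standard `graphlib` module; the subtask names and the `plan` helper are hypothetical, and a real planner would generate the dependency map dynamically rather than hard-code it.

```python
from graphlib import TopologicalSorter

# Hypothetical subtasks, each mapped to the subtasks it depends on.
dependencies = {
    "collect_data": set(),
    "analyze_competitors": {"collect_data"},
    "write_report": {"analyze_competitors"},
    "send_report": {"write_report"},
}


def plan(task_dependencies):
    """Return an execution order in which every task follows its prerequisites."""
    return list(TopologicalSorter(task_dependencies).static_order())


execution_order = plan(dependencies)
print(execution_order)
```

If conditions change, the agent can edit the dependency map and call `plan` again to get a fresh ordering.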

3. Reasoning Capabilities

Reasoning allows AI systems to analyze information logically before taking action. Through structured reasoning loops, agents can evaluate multiple options, identify risks, and decide which actions best support the original objective.

This reasoning layer is a critical part of autonomous intelligence because it reduces random or inefficient behavior.

4. Tool Usage

Modern AI agents are no longer limited to text generation. Advanced systems can use external tools such as search engines, APIs, databases, calculators, browsers, CRMs, and coding environments.

This capability — often described as AI tool use — dramatically expands what AI systems can accomplish in real-world business environments.
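At its simplest, tool use can be sketched as a registry that maps tool names to callables. Everything here is an assumption for illustration: the tool names, the `call_tool` dispatcher, and the fabricated price table. Real agent frameworks add schemas, argument validation, and permissions on top of this idea.

```python
def word_count(text):
    """Count whitespace-separated words."""
    return len(text.split())


def lookup_price(product):
    """Look up a price in a fabricated demo table; returns None if unknown."""
    prices = {"basic": 10, "pro": 25}
    return prices.get(product)


# Hypothetical tool registry: tool name -> plain Python callable.
TOOLS = {"word_count": word_count, "lookup_price": lookup_price}


def call_tool(name, argument):
    """Dispatch to a registered tool, returning an error string for unknown tools."""
    tool = TOOLS.get(name)
    if tool is None:
        return f"error: unknown tool '{name}'"
    return tool(argument)


print(call_tool("word_count", "agents use tools"))
print(call_tool("lookup_price", "pro"))
print(call_tool("browser", "example.com"))
```

Returning an error string instead of raising lets the agent treat a bad tool choice as just another observation to reason about.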

5. Action Execution

Once decisions are made, the AI must execute tasks. This may involve generating reports, sending emails, updating databases, deploying code, or interacting with enterprise software platforms automatically.

Execution transforms AI from an assistant into an operational system.

6. Feedback Loops

Agentic AI systems continuously evaluate outcomes through reasoning loops and feedback mechanisms. The AI observes results, measures progress toward goals, and refines future actions accordingly.

This iterative learning process is what makes agentic systems adaptive rather than static.

Why Agentic AI Matters in 2026

The importance of agentic AI in 2026 comes from a major industry transition: businesses are moving beyond chatbots toward fully autonomous systems.

Over the past few years, AI copilots became popular because they helped users write, summarize, and automate small tasks. But copilots still rely heavily on human direction. The next evolution is AI agents that can independently manage workflows from start to finish.

This shift is reshaping enterprise AI strategies across industries.

Companies are now deploying agentic systems for customer service automation, software development, cybersecurity operations, research analysis, sales outreach, workflow orchestration, and internal business operations. Instead of using AI as a productivity add-on, organizations are beginning to integrate AI into core operational infrastructure.

The potential productivity gains are substantial. Agentic systems can operate continuously, reduce repetitive work, accelerate decision-making, and coordinate complex digital tasks faster than manual processes.

Another reason 2026 is pivotal is the growing maturity of the ecosystem around patterns like the ReAct framework. These architectures combine reasoning, memory, and action execution in ways that make AI significantly more reliable and useful for enterprise-scale deployment.

At the same time, improvements in multimodal AI, long-context reasoning, vector memory databases, and tool orchestration platforms are enabling more capable and context-aware agents.

As a result, AI copilots are rapidly evolving into autonomous operational agents.

This transition represents more than another AI trend. It marks the beginning of a broader AI transformation where software no longer just assists humans — it actively collaborates, plans, and executes alongside them.

For businesses, developers, and technology leaders, agentic AI is becoming one of the most important foundations of next-generation AI systems.

What Are Autonomous AI Agents?

Autonomous AI agents are intelligent software systems designed to perform tasks independently by combining reasoning, planning, memory, and action execution. Unlike traditional AI applications that require constant human input, these agents can operate with a higher level of autonomy and adapt dynamically as situations change.

At a basic level, an AI assistant responds to instructions. An autonomous AI agent goes several steps further — it understands objectives, creates strategies, interacts with tools, evaluates results, and continuously works toward completing a goal.

For example, a standard chatbot may answer:

“Here’s how to create a marketing report.”

An autonomous agent could actually:

- Collect market data

- Analyze competitors

- Generate charts

- Write the report

- Email stakeholders

- Schedule follow-up actions

All with minimal human involvement.

This shift from passive response systems to active execution systems is one of the biggest transformations happening in artificial intelligence today.

A defining characteristic of autonomous AI agents is their ability to interact with environments. These environments may include:

- Web browsers

- APIs

- Enterprise software

- Databases

- Cloud platforms

- Messaging systems

- External applications

The agent observes information from these environments, makes decisions, and performs actions based on changing conditions.

Another important capability is dynamic task completion. Real-world tasks are rarely linear. Unexpected errors, missing information, or changing priorities often require adaptation. Autonomous agents can revise plans in real time instead of following rigid rule-based workflows.

This makes them especially valuable for modern business operations where flexibility matters.

Examples of autonomous AI agents, agent frameworks, and autonomous systems include GitHub Copilot Agent, Google Gemini 3 with Browser Use, Google DeepMind’s SIMA 2, EY’s Agentic Platform, Cato Networks Ticket Classification Agent, UiPath Autopilot, Microsoft Copilot, Salesforce Einstein GPT, AutoGPT, BabyAGI, SuperAGI, MetaGPT, Devin (Cognition), SWE-agent, OpenDevin, GPTeam, Camel AI, ChatDev, Voyager, MineDojo, MineAgent, VoxPoser, SayCan, PaLM-E, RT-2, Octopus, AgentGPT, JARVIS (HuggingGPT), TaskWeaver, CrewAI, LangGraph, AutoGen, RPA bots (UiPath, Automation Anywhere, Blue Prism), Amazon Warehouse Robots, Tesla Optimus, Figure 01, 1X Technologies’ NEO, Boston Dynamics’ Spot with AI, Waymo Driver, Cruise AV, Zoox, Tesla FSD, Aurora Driver, Nuro, Oxa, and Einride.

Many organizations are now building AI agents for:

- Customer support automation

- AI-powered research

- Workflow orchestration

- Sales automation

- Cybersecurity monitoring

- Software development assistance

- Data analysis

- Project management

As large language models improve, AI agents are becoming more capable of understanding context, handling ambiguity, and coordinating complex digital workflows.

In many ways, autonomous agents represent the next evolution of AI assistants — systems that no longer just support work, but actively participate in completing it.

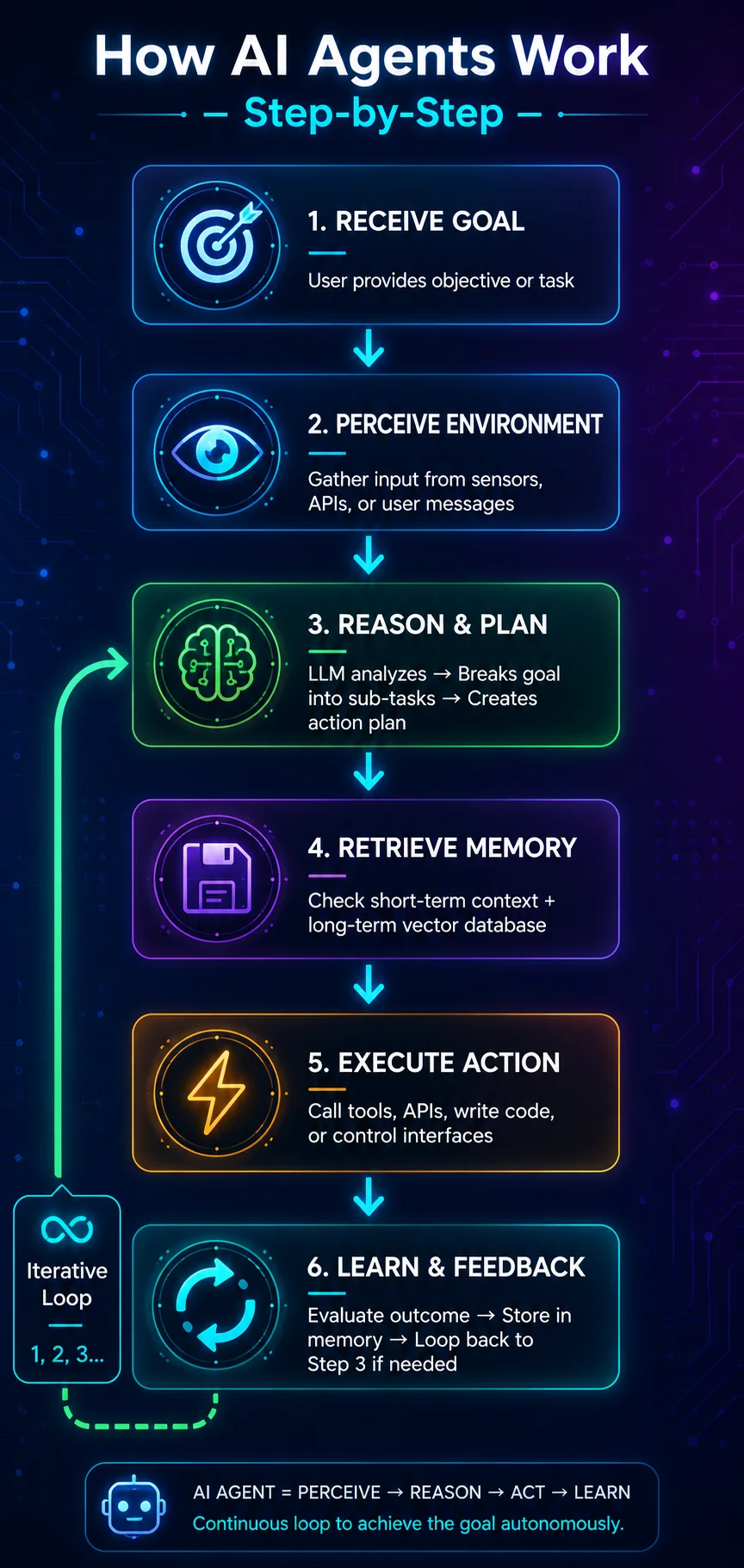

How AI Agents Work Step-by-Step

Behind every autonomous AI system is a continuous execution cycle that combines reasoning and action. This process is often called the AI execution loop.

Although implementations vary, most AI agents follow a similar workflow structure.

1. Receive a Goal

Everything begins with an objective.

The user, system, or organization provides a high-level goal such as:

- “Find qualified leads for our SaaS product.”

- “Monitor cybersecurity threats.”

- “Create a weekly financial summary.”

- “Book travel based on budget preferences.”

Instead of needing detailed instructions, the agent interprets the broader intent behind the request.

2. Analyze the Task

Next, the AI breaks the objective into smaller components.

This stage involves:

- Understanding constraints

- Identifying required information

- Determining dependencies

- Recognizing missing context

- Evaluating possible approaches

This reasoning phase is critical because it transforms vague human goals into structured workflows.

3. Plan Actions

After analysis, the AI creates an execution strategy.

In an AI planning workflow, the system may:

- Prioritize subtasks

- Choose tools

- Sequence actions logically

- Allocate resources

- Estimate outcomes

Advanced agents can even create backup plans if certain actions fail.

This planning capability separates modern agentic systems from simple automation tools.

4. Use Tools and APIs

Once the plan is ready, the AI begins interacting with external systems.

Modern AI agents can use:

- Search engines

- APIs

- CRMs

- Browsers

- Databases

- Spreadsheet tools

- Email systems

- Coding environments

This ability to access external resources dramatically expands agent functionality.

For instance, a research agent may search the web, collect data from APIs, summarize findings, and store results automatically.

5. Evaluate Outputs

After taking action, the AI evaluates results.

Did the search produce useful information?

Did the API request succeed?

Did the generated code work correctly?

This evaluation phase creates a feedback mechanism that improves decision quality.

6. Repeat the Loop

The process does not end after one action.

Through continuous reasoning and acting, the AI loops back:

- Reassess the situation

- Refine the plan

- Execute new actions

- Monitor outcomes

This iterative cycle continues until the goal is completed or the system determines additional human input is needed.

The result is a highly adaptive workflow engine capable of solving complex tasks in dynamic environments.
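The six steps above can be sketched as a small loop. This is a simplified illustration under stated assumptions: `run_agent`, the step names, and the convention that a tool returns `None` on failure are all hypothetical, and the plan is supplied up front rather than generated by a model.

```python
def run_agent(goal, plan_steps, tools, max_iterations=10):
    """Execute planned steps in order; retry a failed step once, then escalate."""
    results = {}
    pending = list(plan_steps)
    retried = set()  # steps that have already used their single retry
    iterations = 0
    while pending and iterations < max_iterations:
        iterations += 1
        step = pending[0]
        outcome = tools[step](results)  # act: run the tool for this step
        if outcome is not None:  # evaluate: None signals a failed action
            results[step] = outcome
            pending.pop(0)
        elif step in retried:
            break  # second failure: stop and hand off to a human
        else:
            retried.add(step)  # repeat the loop and try this step again
    status = "done" if not pending else "needs_human"
    return {"goal": goal, "status": status, "results": results}


attempts = {"fetch": 0}


def fetch(results):
    attempts["fetch"] += 1
    # Fail on the first attempt to exercise the retry path (fabricated data).
    return None if attempts["fetch"] == 1 else [120, 80, 100]


def summarize(results):
    numbers = results["fetch"]
    return sum(numbers) / len(numbers)


report = run_agent(
    "Create a weekly financial summary",
    ["fetch", "summarize"],
    {"fetch": fetch, "summarize": summarize},
)
print(report)
```

The `max_iterations` cap and the `needs_human` status reflect the point made above: the loop ends either when the goal is met or when the system decides it needs human input.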

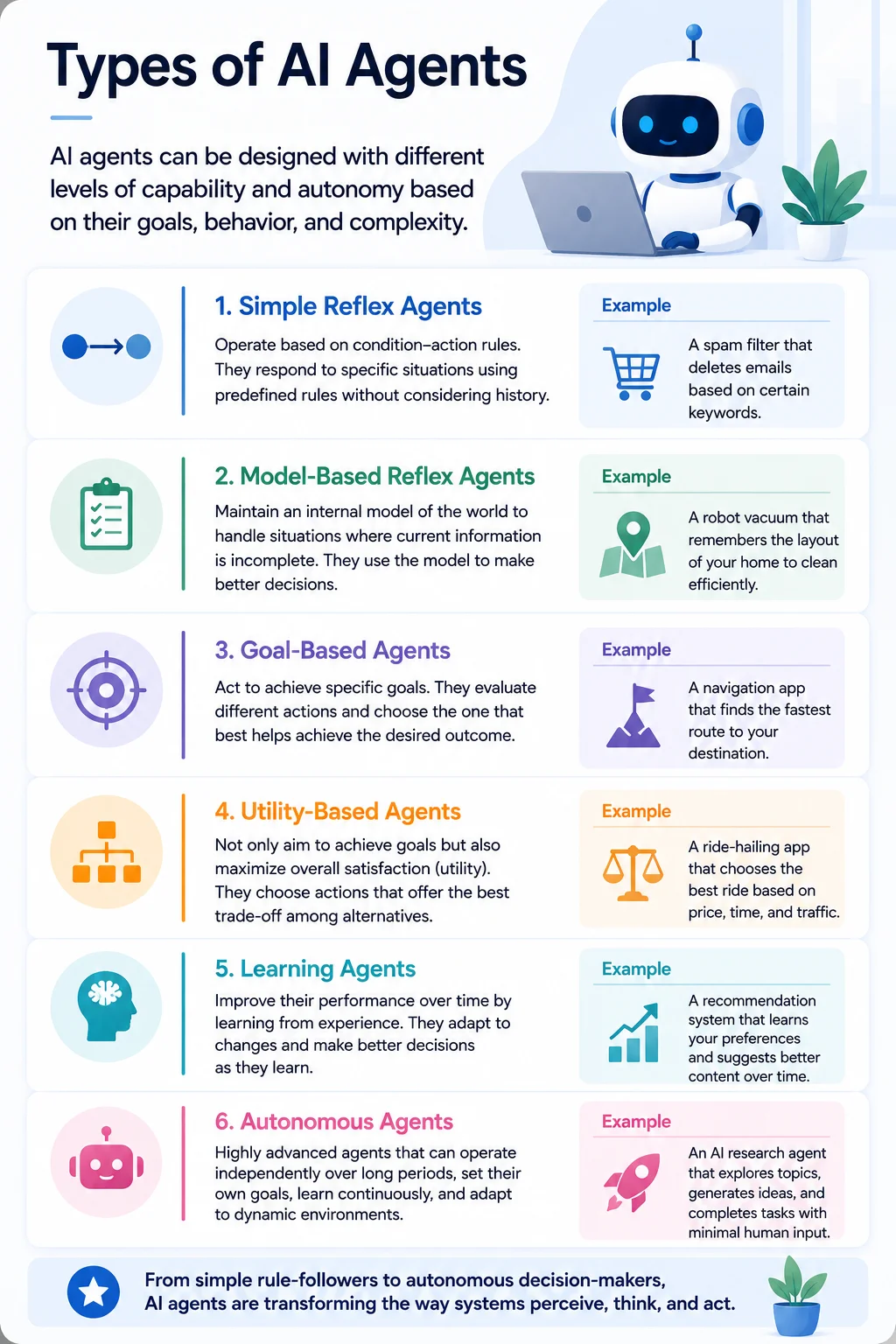

Types of AI Agents

Not all AI agents operate the same way. Different architectures are designed for different levels of intelligence, autonomy, and adaptability.

Understanding these categories helps explain how modern AI agent architecture is evolving.

1. Reactive Agents

Reactive agents are the simplest form of intelligent systems.

They respond directly to current inputs without maintaining long-term memory or deeper planning capabilities. These agents work well for predictable environments but struggle with complex reasoning tasks.

Examples include:

- Basic chatbots

- Rule-based automation systems

- Simple recommendation engines

They are fast and efficient but limited in adaptability.
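A reactive agent can be as small as a rule table. The keywords and responses below are made up for illustration; the point is that the function looks only at the current input and keeps no state between calls.

```python
# Hypothetical rule table: trigger keyword -> canned response.
RULES = [
    ("refund", "Routing you to billing."),
    ("password", "Here is the password reset link."),
]
DEFAULT_RESPONSE = "Sorry, I did not understand that."


def reactive_agent(message):
    """Respond using only the current input; nothing is remembered between calls."""
    text = message.lower()
    for keyword, response in RULES:
        if keyword in text:
            return response
    return DEFAULT_RESPONSE


print(reactive_agent("I forgot my PASSWORD"))
print(reactive_agent("Can you plan my product launch?"))
```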

2. Goal-Based Agents

Goal-based agents are designed around objectives rather than simple reactions.

These systems evaluate possible actions and choose behaviors that move them closer to a desired outcome.

For example:

- Route optimization systems

- AI scheduling assistants

- Autonomous workflow tools

This category forms the foundation of many modern agentic AI systems.
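As a rough illustration of goal-based behavior, the sketch below searches for a sequence of moves that reaches a goal state. It uses breadth-first search over a made-up road map; the `ROADS` graph and `find_route` name are assumptions, and real route optimizers weigh distance, time, and cost rather than just hop count.

```python
from collections import deque

# Hypothetical road map: location -> locations reachable in one move.
ROADS = {
    "depot": ["north", "east"],
    "north": ["customer"],
    "east": ["south"],
    "south": ["customer"],
    "customer": [],
}


def find_route(start, goal, graph):
    """Breadth-first search: return a route with the fewest hops, or None."""
    queue = deque([[start]])
    visited = {start}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == goal:
            return path
        for neighbor in graph[node]:
            if neighbor not in visited:
                visited.add(neighbor)
                queue.append(path + [neighbor])
    return None


print(find_route("depot", "customer", ROADS))
```

Unlike the reactive case, the agent here evaluates candidate action sequences against a desired outcome before committing to one.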

3. Learning Agents

Learning agents improve over time by analyzing outcomes and adapting behavior.

These agents can:

- Learn from feedback

- Refine decision-making

- Optimize workflows

- Improve predictions

Machine learning models and reinforcement learning techniques often power these systems.

Learning agents are particularly valuable in environments where conditions change frequently.

4. Multi-Agent Systems

In multi-agent systems, multiple AI agents collaborate to complete tasks.

Instead of relying on one large AI system, specialized agents handle different responsibilities such as:

- Research

- Planning

- Data analysis

- Communication

- Execution

These agents coordinate like digital teams.

Multi-agent architectures are becoming increasingly important for enterprise-scale AI automation because they improve scalability and specialization.
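The division of labor can be sketched as a pipeline of specialized functions, each standing in for an agent. All names and the canned findings are hypothetical; real multi-agent systems typically exchange messages through an orchestrator and may run agents in parallel.

```python
def research_agent(topic):
    """Stand-in for a research step; returns canned (fabricated) findings."""
    return {"topic": topic, "findings": ["pricing rose", "new entrant launched"]}


def analysis_agent(research):
    """Add a simple derived metric to the research payload."""
    return {
        "topic": research["topic"],
        "findings": research["findings"],
        "key_point_count": len(research["findings"]),
    }


def communication_agent(analysis):
    """Format the analysis as a short message for stakeholders."""
    bullet_lines = "\n".join(f"- {item}" for item in analysis["findings"])
    header = f"Summary for {analysis['topic']} ({analysis['key_point_count']} points):"
    return f"{header}\n{bullet_lines}"


def run_team(topic, agents):
    """Pass the output of each specialized agent to the next one in order."""
    payload = topic
    for agent in agents:
        payload = agent(payload)
    return payload


message = run_team("CRM market", [research_agent, analysis_agent, communication_agent])
print(message)
```

Because each agent only depends on the shape of its input, one agent can be swapped or upgraded without touching the others.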

5. Tool-Using Agents

One of the most powerful modern categories is tool-using agents.

These intelligent agents interact with external software, APIs, databases, and digital environments to perform real-world operations.

Examples include:

- AI coding agents

- Autonomous research systems

- AI business assistants

- Automated customer support agents

This capability transforms AI from a conversational system into an operational workforce layer.

As the field advances, the future of AI is increasingly moving toward interconnected ecosystems of intelligent, autonomous, and collaborative agents capable of handling sophisticated workflows across industries.

What Is the ReAct Framework?

The ReAct framework is one of the most important architectural approaches powering modern agentic AI systems. The name “ReAct” stands for Reason + Act, which perfectly describes how the framework operates: AI systems continuously reason about a problem and take actions to solve it.

Traditional large language models were primarily designed for text generation. They could answer questions, summarize content, or generate code, but they often struggled with complex tasks requiring multiple steps, external tools, or real-world decision-making. That limitation created a major challenge.

Even highly advanced models could produce convincing answers while still making logical mistakes, hallucinating information, or failing to verify results. Developers needed a way to improve reasoning reliability while allowing AI systems to interact dynamically with external environments.

The ReAct framework emerged as a solution to this problem.

Instead of treating reasoning and action as separate processes, ReAct combines them into a continuous workflow. The AI does not simply “think” internally and produce a final answer. It actively reasons through tasks while interacting with tools, gathering observations, evaluating results, and refining its strategy in real time.

This approach significantly improves the reliability and adaptability of autonomous AI systems.

One of the biggest innovations behind ReAct is its integration of chain-of-thought reasoning with actionable execution. In older prompting methods, AI models generated reasoning steps internally but remained disconnected from external systems. ReAct extends that idea by allowing the model to:

- Think through problems

- Perform actions

- Observe outcomes

- Continue reasoning based on new information

This creates a far more dynamic decision-making process.

For example, imagine an AI research agent asked to analyze competitors in a market.

A standard language model might rely only on its training data and generate a generic answer. A ReAct-powered system would:

- Reason about what information is needed

- Search the web

- Gather company data

- Analyze findings

- Compare results

- Refine conclusions

- Generate a detailed report

The system continuously alternates between reasoning and action until the objective is complete.

This architecture has become foundational for modern AI agents because it enables:

- Better problem-solving

- Improved factual accuracy

- Real-world tool usage

- Multi-step task execution

- Adaptive decision-making

Today, many advanced AI agents, research systems, coding assistants, and enterprise automation platforms rely on some variation of the reason-and-act pattern introduced by ReAct.

How the ReAct Loop Works

At the core of the ReAct framework is a repeating cycle known as the reasoning-action loop. This loop allows AI systems to continuously analyze situations, perform actions, observe outcomes, and adapt dynamically.

The process typically follows four stages:

1. Thought

The AI begins by reasoning about the current situation.

This “thought” stage involves:

- Understanding the objective

- Evaluating available information

- Identifying missing data

- Planning the next best action

The model effectively creates an internal reasoning step before taking action.

For example:

“I need updated competitor pricing information before generating the report.”

This reasoning process helps the AI avoid random or inefficient actions.

2. Action

After reasoning, the system performs an action.

Depending on the task, the AI may:

- Search the web

- Query a database

- Call an API

- Execute code

- Use external software tools

- Retrieve documents

- Interact with enterprise systems

This is where ReAct differs dramatically from static prompting systems. The AI is not limited to generating text — it actively interacts with digital environments.

3. Observation

Once the action is completed, the AI receives observations.

These observations may include:

- Search results

- API responses

- Tool outputs

- Error messages

- User feedback

- Execution results

The AI then evaluates whether the action moved it closer to the goal.

This creates powerful AI feedback loops that improve adaptability and accuracy.

4. Repeat the Cycle

The loop repeats continuously until the task is complete.

The system reasons again based on new observations:

- Should another tool be used?

- Is additional data required?

- Did an error occur?

- Does the plan need adjustment?

This iterative behavior is what makes ReAct-based systems highly effective for complex workflows.

Unlike static AI responses, these iterative AI systems can dynamically refine strategies in real time.

The result is a more intelligent and flexible approach to automation where AI behaves less like a chatbot and more like an autonomous problem-solving engine.
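The Thought, Action, Observation cycle can be sketched in a few lines. To keep the example runnable without any model API, `scripted_model` is a hard-coded stand-in for an LLM, and the `lookup_price` tool, its data, and the `finish` action name are all assumptions made for illustration.

```python
def scripted_model(transcript):
    """Stand-in for an LLM: decides the next step from the observations so far."""
    observations = [line for line in transcript if line.startswith("Observation: ")]
    if not observations:
        thought = "I need updated competitor pricing before writing the report."
        return thought, "lookup_price", "competitor_a"
    price = observations[-1].removeprefix("Observation: ")
    thought = f"I have the price ({price}), so I can answer."
    return thought, "finish", f"Competitor A charges {price}."


def lookup_price(name):
    prices = {"competitor_a": "$49/month"}  # fabricated demo data
    return prices.get(name, "unknown")


TOOLS = {"lookup_price": lookup_price}


def react(question, model, tools, max_steps=5):
    """Alternate Thought -> Action -> Observation until the model finishes."""
    transcript = [f"Question: {question}"]
    for _ in range(max_steps):
        thought, action, argument = model(transcript)
        transcript.append(f"Thought: {thought}")
        transcript.append(f"Action: {action}[{argument}]")
        if action == "finish":
            return argument, transcript
        observation = tools[action](argument)
        transcript.append(f"Observation: {observation}")
    return None, transcript  # step budget exhausted without an answer


answer, trace = react("What does Competitor A charge?", scripted_model, TOOLS)
print("\n".join(trace))
print(answer)
```

Each observation is appended to the transcript, so the next "thought" is always grounded in what the previous action actually returned. The `max_steps` cap keeps a confused agent from looping forever.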

ReAct Framework Architecture

The ReAct framework is not a single model. It is an architectural pattern made up of multiple interconnected layers working together.

Modern ReAct-based systems typically include the following components:

1. The LLM Brain

At the center is the large language model itself.

The LLM acts as the reasoning engine responsible for:

- Understanding instructions

- Generating plans

- Interpreting observations

- Making decisions

- Coordinating actions

This “brain” provides the cognitive capabilities of the agent.

2. Tool Layer

The tool layer gives the AI access to external systems and capabilities.

These tools may include:

- Web search engines

- APIs

- Databases

- Browsers

- CRMs

- Calculators

- Code interpreters

- Enterprise software

Without tools, the AI remains limited to text generation. With tools, it becomes operationally useful.

This layer is essential for modern AI orchestration systems.

3. Memory Layer

The memory layer stores context and historical information.

This may include:

- Previous interactions

- Task progress

- User preferences

- Retrieved documents

- Long-term objectives

Memory allows agents to maintain continuity across complex workflows.

Advanced systems often combine short-term conversational memory with long-term vector database storage.

4. Orchestration Layer

The orchestration layer coordinates workflows between reasoning, tools, memory, and execution systems.

This layer manages:

- Task routing

- Agent coordination

- Tool selection

- Workflow sequencing

- Error handling

In enterprise environments, orchestration is critical for scaling autonomous systems safely and efficiently.

5. Execution Engine

The execution engine performs the actual operations requested by the AI.

Examples include:

- Running code

- Sending emails

- Updating systems

- Triggering automations

- Processing files

- Deploying workflows

This transforms the framework from a reasoning system into a real-world AI workflow system capable of operational execution.

Together, these layers form a complete agent architecture designed for intelligent, adaptive automation.

ReAct vs Chain-of-Thought Prompting

At first glance, ReAct and chain-of-thought prompting may appear similar because both involve reasoning processes inside large language models. However, their capabilities differ significantly.

Chain-of-thought prompting focuses on structured internal reasoning. The AI generates intermediate thinking steps before producing a final answer.

For example:

“First calculate X, then compare Y, then derive the conclusion.”

This technique improves logical consistency and problem-solving accuracy compared to direct prompting.

However, chain-of-thought reasoning is still largely static.

The AI reasons internally but does not interact with external systems, verify information dynamically, or adapt based on real-world observations.

The ReAct framework extends this concept dramatically.

Instead of reasoning in isolation, ReAct systems combine reasoning with active execution. The AI can:

- Use tools

- Retrieve live information

- Execute actions

- Evaluate results

- Adapt dynamically

This creates a far more powerful form of AI reasoning.

Another key difference is environmental interaction.

Chain-of-thought systems operate mainly within the boundaries of the model itself. ReAct-based agents operate across digital environments, APIs, workflows, and enterprise systems.

This enables:

- Real-time decision-making

- Dynamic workflow execution

- Multi-step automation

- Error correction

- Continuous adaptation

In practical terms, chain-of-thought prompting helps AI think better. ReAct helps AI think and act effectively in the real world.

That distinction is why ReAct has become a foundational architecture for autonomous AI agents, enterprise automation systems, and next-generation intelligent workflows.

What Are Agentic Workflows?

Agentic workflows are AI-driven operational processes where autonomous agents can reason, plan, make decisions, and execute tasks dynamically with minimal human supervision. Unlike traditional automation pipelines that follow fixed instructions, agentic workflows are adaptive systems capable of handling uncertainty, changing goals, and multi-step problem-solving.

At their core, these workflows combine workflow automation with AI reasoning.

In older automation systems, every possible step had to be predefined manually. If something unexpected happened, the workflow often failed or required human intervention. Agentic systems work differently. They can analyze situations, adjust strategies, and decide what actions to take next based on real-time information.

This is one of the biggest shifts happening in modern AI automation.

Instead of simply automating repetitive tasks, organizations are now building systems that can autonomously manage entire operational processes.

For example, imagine a company using an AI-driven content workflow.

A traditional automation setup might:

- Trigger article generation

- Send drafts for approval

- Publish content on schedule

An agentic workflow could go much further:

- Research trending topics

- Analyze competitor content

- Identify keyword opportunities

- Generate SEO briefs

- Write optimized drafts

- Edit tone and structure

- Create social media content

- Monitor performance metrics

- Refine future strategies

All while adapting continuously to performance data and business goals.

The defining feature of these workflows is goal-oriented execution. The AI is not limited to isolated commands. Instead, it operates around objectives and continuously works toward achieving them through reasoning and action loops.

Modern agentic systems also support multi-step autonomous actions. Tasks can span multiple tools, platforms, and decision points while maintaining context throughout the process.

This capability is becoming increasingly important as businesses adopt more complex digital ecosystems involving APIs, cloud software, databases, communication platforms, and enterprise applications.

Another major advantage is scalability. Once properly orchestrated, agentic workflows can coordinate multiple AI agents simultaneously across departments and operational systems.

As a result, companies are beginning to treat AI agents less like software features and more like digital workforce infrastructure.

Traditional Automation vs Agentic Workflows

To understand why agentic workflows matter, it helps to compare them with traditional automation systems.

For years, businesses relied on rule-based automation tools. These systems followed predefined logic such as:

“If X happens, then execute Y.”

This approach works well for predictable tasks with fixed structures. Examples include:

- Sending automated emails

- Processing invoices

- Updating spreadsheets

- Triggering notifications

- Routing customer tickets

However, traditional automation struggles when situations become unpredictable or require reasoning.

When a workflow encounters any of the following, the system usually stops or requires human intervention:

- Missing information

- Ambiguous requests

- Unexpected errors

- Changing priorities

- Complex decision-making

This limitation is exactly where agentic systems outperform conventional automation.

Agentic workflows introduce adaptive intelligence into operational processes. Instead of following rigid scripts, the AI evaluates situations dynamically and determines the best course of action.

This is the foundation of modern intelligent automation.

For example, a traditional customer support automation system may route tickets based on keywords. An agentic workflow could:

- Understand customer intent

- Analyze emotional tone

- Search internal documentation

- Retrieve account history

- Suggest personalized solutions

- Escalate critical cases automatically

The difference is not just automation — it is contextual decision-making.

Another major distinction is workflow flexibility.

Traditional systems are linear:

- Execute step A

- Move to step B

- Complete step C

Agentic systems are non-linear and adaptive.

They can:

- Revise plans

- Retry failed actions

- Switch tools

- Gather additional information

- Coordinate with other agents

- Optimize execution paths dynamically

This adaptability is driving the rise of advanced AI orchestration platforms capable of managing large-scale autonomous operations.

Agentic workflows also improve resilience. Since the AI continuously evaluates outcomes, workflows can recover from errors more effectively instead of failing completely.
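
The retry-and-switch behavior described above can be sketched in a few lines. This is a minimal illustration, not a production pattern: `flaky_search`, `backup_search`, and `run_with_fallback` are hypothetical names invented for this example, and the "tools" are plain functions rather than real services.

```python
from typing import Callable, List


def flaky_search(query: str) -> str:
    """Hypothetical primary tool that always fails, to simulate an outage."""
    raise TimeoutError("primary search timed out")


def backup_search(query: str) -> str:
    """Hypothetical fallback tool that returns canned text."""
    return f"backup results for {query!r}"


def run_with_fallback(query: str, tools: List[Callable[[str], str]], retries: int = 2) -> str:
    """Try each tool in order, retrying a few times before switching to the next."""
    errors = []
    for tool in tools:
        for attempt in range(1, retries + 1):
            try:
                return tool(query)
            except Exception as exc:  # record the failure and keep going
                errors.append(f"{tool.__name__} attempt {attempt}: {exc}")
    raise RuntimeError("all tools failed: " + "; ".join(errors))


result = run_with_fallback("agentic workflows", [flaky_search, backup_search])
```

A rule-based workflow would typically stop at the first `TimeoutError`. Here the failure is recorded and the workflow moves on to the next option, only giving up once every tool has been exhausted.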

As businesses move toward increasingly digital operations, these adaptive workflows are becoming essential for handling complexity at scale.

Real-World Agentic Workflow Examples

The practical applications of agentic workflows are expanding rapidly across industries. Organizations are already deploying AI agents to automate research, operations, customer engagement, software development, and strategic decision-making.

Here are some of the most impactful real-world examples.

1. AI Research Assistants

AI research agents can autonomously:

- Search the web

- Analyze sources

- Summarize findings

- Compare competitors

- Generate reports

- Track industry trends

Instead of manually collecting information, businesses can deploy AI systems that continuously monitor and synthesize data in real time.

This is becoming one of the fastest-growing AI use cases in enterprise environments.

2. AI Coding Agents

Modern AI coding agents can:

- Write code

- Debug applications

- Test software

- Analyze repositories

- Generate documentation

- Deploy updates

Some advanced systems even collaborate with human developers through continuous reasoning and iterative execution cycles.

These workflows can shorten development cycles by taking over routine coding, testing, and documentation tasks.

3. AI Customer Support Systems

Agentic customer support systems go beyond simple chatbots.

They can:

- Understand complex customer requests

- Access account data

- Retrieve documentation

- Resolve issues autonomously

- Escalate sensitive cases intelligently

- Learn from previous interactions

This creates more efficient and personalized support experiences.

4. AI Marketing Automation

AI-driven marketing workflows are becoming highly sophisticated.

Autonomous marketing agents can:

- Analyze trends

- Identify keyword opportunities

- Generate campaign ideas

- Create content

- Run A/B tests

- Monitor analytics

- Optimize ad performance

These systems continuously refine strategies based on real-time feedback and engagement data.

5. AI Operations Workflows

Many enterprises are deploying agentic systems for operational management.

Examples include:

- Supply chain monitoring

- Financial reporting

- Cybersecurity analysis

- IT infrastructure management

- Workflow scheduling

- Compliance monitoring

These autonomous business systems help organizations reduce operational overhead while improving speed and scalability.

As AI infrastructure continues evolving, the number of possible AI workflow examples is growing rapidly. Businesses are moving from isolated automation tools toward interconnected ecosystems of intelligent agents capable of coordinating complex digital operations.

This transition is laying the foundation for the next generation of autonomous business AI.

Building Autonomous Agents with ReAct Framework

Building autonomous AI agents is no longer limited to large research labs or enterprise AI teams. With modern large language models, orchestration frameworks, APIs, and memory systems, developers can now create sophisticated agentic systems capable of reasoning, planning, and executing complex workflows.

The ReAct framework plays a central role in this process because it enables AI systems to continuously alternate between reasoning and action. Instead of producing isolated responses, the agent evaluates goals, uses tools, observes results, and refines decisions dynamically.

Creating a successful autonomous agent requires more than simply connecting an LLM to a chatbot interface. The real challenge lies in designing an intelligent workflow architecture that combines planning, memory, tool usage, and feedback systems effectively.

Here is a step-by-step breakdown of how modern ReAct-powered agents are built.

Step 1: Define the Agent Goal

Every autonomous system starts with a clearly defined objective.

Without goal clarity, even advanced AI agents can become inefficient, inconsistent, or directionless. The better the objective is structured, the more effectively the agent can reason about tasks and execute workflows.

For example, compare these two instructions:

❌ “Help improve our marketing.”

✅ “Analyze competitors, identify high-performing SEO keywords, generate a content strategy, and create a weekly performance report.”

The second objective provides:

- Clear deliverables

- Defined outcomes

- Measurable expectations

- Actionable structure

This is essential for effective AI task planning.

Once the primary objective is defined, the next step is task decomposition.

Autonomous agents work best when large goals are broken into smaller operational steps. A research agent, for instance, may divide its workflow into:

- Data collection

- Source validation

- Information summarization

- Comparative analysis

- Report generation

This decomposition process improves reasoning efficiency and reduces execution errors.

Constraints also matter significantly.

Developers must define:

- Budget limitations

- API access rules

- Time restrictions

- Safety boundaries

- Permission levels

- Tool usage limits

These constraints help the system operate predictably while minimizing unwanted behavior.
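
One way to make a goal, its subtasks, and its constraints explicit is to keep them in a single structure that is checked before every action. The sketch below assumes a hypothetical `AgentGoal` class; the field names, tool names, and limits are illustrative and do not come from any specific framework.

```python
from dataclasses import dataclass, field
from typing import List, Set


@dataclass
class AgentGoal:
    objective: str
    subtasks: List[str]
    allowed_tools: Set[str] = field(default_factory=set)
    max_steps: int = 10
    budget_usd: float = 1.0

    def check_action(self, tool: str, steps_taken: int, spent_usd: float) -> None:
        """Raise if the proposed action would violate a constraint."""
        if tool not in self.allowed_tools:
            raise PermissionError(f"tool {tool!r} is not allowed")
        if steps_taken >= self.max_steps:
            raise RuntimeError("step limit reached")
        if spent_usd >= self.budget_usd:
            raise RuntimeError("budget exhausted")


goal = AgentGoal(
    objective="Produce a weekly competitor SEO report",
    subtasks=[
        "Data collection",
        "Source validation",
        "Information summarization",
        "Comparative analysis",
        "Report generation",
    ],
    allowed_tools={"web_search", "report_writer"},  # hypothetical tool names
    max_steps=20,
    budget_usd=5.0,
)

# Passes silently because the tool is allowed and both limits have headroom.
goal.check_action("web_search", steps_taken=3, spent_usd=0.40)
```

Keeping the constraints next to the objective means the agent loop can call `check_action` before each step instead of relying on the prompt alone to enforce limits.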

In advanced systems, agents can even prioritize objectives dynamically based on urgency, risk, or resource availability.

The quality of an autonomous system often depends more on goal architecture than model size alone. Strong autonomous agent goals create the foundation for reliable reasoning and execution.

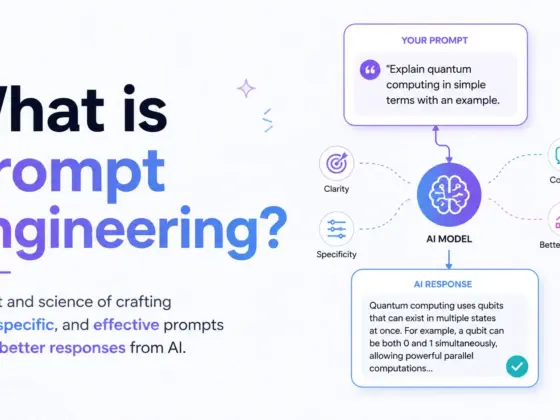

Step 2: Add Reasoning Capability

Reasoning is the intelligence layer that transforms automation into agentic behavior.

Without reasoning, AI systems simply follow instructions mechanically. With reasoning, they can evaluate situations, make decisions, and adapt dynamically.

This is where prompt engineering becomes critically important.

Modern reasoning AI systems rely heavily on structured prompts that guide how the model thinks before acting. Instead of asking the AI for a direct answer, developers design prompts that encourage:

- Step-by-step reasoning

- Planning

- Reflection

- Decision evaluation

- Tool selection

For example, a ReAct-style prompt might instruct the model to:

- Analyze the problem

- Determine missing information

- Choose appropriate tools

- Execute actions

- Evaluate results

- Continue until the objective is complete

This creates a controlled reasoning loop rather than a one-time response.
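
The loop above can be sketched without any model at all. In this example `scripted_model` is a hypothetical stand-in for an LLM that replays two fixed steps, `multiply` is a toy tool, and the `Action: name[input]` text format is an assumption chosen for the sketch.

```python
import re
from typing import Callable, Dict, List

ACTION_RE = re.compile(r"Action: (\w+)\[(.*)\]")


def scripted_model(transcript: List[str]) -> str:
    """Hypothetical stand-in for an LLM: replays a fixed two-step script."""
    script = [
        "Thought: I should compute the product.\nAction: multiply[17 * 23]",
        "Thought: The tool returned 391.\nFinal Answer: 391",
    ]
    # One scripted turn per observation seen so far.
    turn = sum(1 for line in transcript if line.startswith("Observation:"))
    return script[turn]


def multiply(expr: str) -> str:
    """Toy tool: multiplies two integers written as 'a * b'."""
    a, b = expr.split("*")
    return str(int(a) * int(b))


def react_loop(question: str, model: Callable[[List[str]], str],
               tools: Dict[str, Callable[[str], str]], max_steps: int = 5) -> str:
    """Alternate between model reasoning and tool calls until a final answer appears."""
    transcript = [f"Question: {question}"]
    for _ in range(max_steps):
        step = model(transcript)
        transcript.append(step)
        if "Final Answer:" in step:
            return step.split("Final Answer:", 1)[1].strip()
        match = ACTION_RE.search(step)
        if match is None:
            transcript.append("Observation: no valid action found")
            continue
        name, arg = match.groups()
        tool = tools.get(name)
        observation = tool(arg) if tool else f"unknown tool {name}"
        transcript.append(f"Observation: {observation}")
    raise RuntimeError("step limit reached without a final answer")


answer = react_loop("What is 17 * 23?", scripted_model, {"multiply": multiply})
```

The `max_steps` cap matters in practice: without it, a model that never emits a final answer would loop indefinitely.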

Another key component is context management.

Large language models are highly sensitive to context quality. Autonomous agents need structured context windows containing:

- User objectives

- Historical actions

- Retrieved knowledge

- Memory summaries

- Environmental observations

- Workflow status

Poor context management often leads to hallucinations, repetitive actions, or reasoning failures.
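
A simple form of context management is to assemble the prompt from fixed sections and trim the oldest history first when a size budget is exceeded. The sketch below uses a character budget as a rough proxy for tokens; `build_context` and its parameters are hypothetical names for this illustration.

```python
from typing import List


def build_context(objective: str, memory_summary: str, history: List[str],
                  max_chars: int = 200) -> str:
    """Assemble a prompt context, dropping the oldest history entries when over budget."""
    header = (
        f"Objective: {objective}\n"
        f"Memory: {memory_summary}\n"
        "Recent actions:\n"
    )
    kept: List[str] = []
    used = len(header)
    for entry in reversed(history):  # walk from newest to oldest
        line = f"- {entry}\n"
        if used + len(line) > max_chars:
            break
        kept.append(line)
        used += len(line)
    # Restore chronological order for the entries that fit.
    return header + "".join(reversed(kept))


history = [f"step {i}: called tool" for i in range(50)]
context = build_context("Summarize Q3 churn", "User prefers bullet points", history)
```

The objective and memory summary always survive, while older actions fall out of the window. A real system would usually count tokens with the model's tokenizer and summarize dropped entries rather than discard them.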

Advanced systems also use decision-making prompts that help the AI evaluate trade-offs and prioritize actions intelligently.

For example:

- Should the AI search for more information?

- Is the current answer reliable enough?

- Should another tool be used?

- Is escalation necessary?

These prompts create more adaptive and reliable AI thinking systems capable of handling real-world complexity.

As models improve, reasoning architecture is becoming one of the most important competitive advantages in agent development.

Step 3: Connect Tools and APIs

A language model without tools is limited to text generation. A language model connected to external systems becomes an operational AI agent.

Tool integration is one of the most important capabilities in modern agentic architectures.

Through AI tool calling, agents can interact with:

- Search engines

- APIs

- Databases

- File systems

- Browsers

- Calculators

- Enterprise platforms

- Cloud applications

- Automation services

This dramatically expands what the AI can accomplish.
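
At its simplest, tool calling is a lookup from a tool name to a function. The registry below is a minimal sketch under that assumption; the `tool` decorator, `TOOLS` dictionary, and the two example tools are hypothetical and not taken from any framework.

```python
from typing import Callable, Dict

TOOLS: Dict[str, Callable[..., object]] = {}


def tool(name: str):
    """Decorator that registers a function under a tool name."""
    def register(fn: Callable[..., object]) -> Callable[..., object]:
        TOOLS[name] = fn
        return fn
    return register


@tool("calculator")
def add(a: float, b: float) -> float:
    return a + b


@tool("word_count")
def word_count(text: str) -> int:
    return len(text.split())


def call_tool(name: str, **kwargs: object) -> object:
    """Look up a registered tool by name and call it with keyword arguments."""
    if name not in TOOLS:
        raise KeyError(f"unknown tool: {name}")
    return TOOLS[name](**kwargs)


total = call_tool("calculator", a=2, b=3)
count = call_tool("word_count", text="agents use tools")
```

Because the agent only ever sees tool names, new capabilities can be added by registering another function without changing the loop that drives the agent.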

For example, an autonomous research agent may:

- Search the web for recent information

- Extract structured data from APIs

- Store findings in a database

- Generate reports automatically

A coding agent may:

- Access repositories

- Execute code

- Run tests

- Debug applications

- Deploy updates

Modern AI systems increasingly depend on large interconnected tool ecosystems.

One of the biggest advantages of external tool integration is access to real-time information. Since a language model's knowledge is fixed at its training cutoff, APIs and retrieval systems allow agents to work with current data.

Strong API integration also enables enterprise connectivity.

AI agents can interact with:

- CRM systems

- ERP platforms

- Customer databases

- Project management tools

- Security infrastructure

- Financial software

This transforms autonomous agents into scalable workflow engines rather than isolated chat interfaces.

As the AI tools ecosystem continues expanding, orchestration platforms are becoming essential for managing permissions, execution reliability, monitoring, and security.

Step 4: Implement Memory Systems

Memory is what gives autonomous agents continuity and persistence.

Without memory, every interaction becomes isolated. The agent forgets previous decisions, loses workflow progress, and struggles with long-running tasks.

Modern AI memory systems are typically divided into two categories:

Short-Term Memory

Short-term memory stores active conversational context and recent workflow activity.

This includes:

- Current objectives

- Recent tool outputs

- Immediate task history

- Active user instructions

Short-term memory allows the agent to maintain coherent reasoning during ongoing workflows.
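
A bounded buffer is one straightforward way to model short-term memory: it keeps the most recent items and silently drops the oldest. The `ShortTermMemory` class below is a hypothetical sketch built on the standard library's `collections.deque`.

```python
from collections import deque
from typing import Deque, Tuple


class ShortTermMemory:
    """Keeps only the most recent items; older ones are discarded automatically."""

    def __init__(self, max_items: int = 5) -> None:
        self._items: Deque[Tuple[str, str]] = deque(maxlen=max_items)

    def add(self, role: str, content: str) -> None:
        self._items.append((role, content))

    def render(self) -> str:
        """Format the buffer as text that can be placed in a prompt."""
        return "\n".join(f"{role}: {content}" for role, content in self._items)


memory = ShortTermMemory(max_items=3)
memory.add("user", "Find churn drivers")
memory.add("tool", "query returned 120 rows")
memory.add("agent", "Top driver is pricing")
memory.add("user", "Now draft the summary")  # pushes out the first item
```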

Long-Term Memory

Long-term memory enables persistent learning and historical context retention.

This may include:

- User preferences

- Previous conversations

- Organizational knowledge

- Past decisions

- Workflow histories

- Retrieved documents

Long-term persistence dramatically improves personalization and operational consistency.

Many advanced systems use vector databases for memory retrieval.

Instead of storing information as simple text records, vector systems encode data into embeddings that allow semantic search. This means the AI can retrieve context based on meaning rather than exact keywords.
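
The retrieval step can be illustrated with cosine similarity over stored vectors. In this sketch the three-dimensional vectors are hand-written stand-ins for real embeddings, which an embedding model would normally produce, and `VectorMemory` is a hypothetical in-memory class rather than a real vector database.

```python
import math
from typing import List, Sequence, Tuple


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class VectorMemory:
    """Stores (text, embedding) pairs and returns the closest texts to a query vector."""

    def __init__(self) -> None:
        self._records: List[Tuple[str, Sequence[float]]] = []

    def add(self, text: str, embedding: Sequence[float]) -> None:
        self._records.append((text, embedding))

    def search(self, query_embedding: Sequence[float], k: int = 1) -> List[str]:
        ranked = sorted(
            self._records,
            key=lambda record: cosine(query_embedding, record[1]),
            reverse=True,
        )
        return [text for text, _ in ranked[:k]]


store = VectorMemory()
# Toy vectors: in a real system these come from an embedding model.
store.add("Customer prefers email contact", [0.9, 0.1, 0.0])
store.add("Deploy script lives in the tools folder", [0.0, 0.2, 0.9])

hits = store.search([0.8, 0.3, 0.1], k=1)
```

The query shares no required keywords with the stored text; the match comes entirely from the vectors pointing in a similar direction, which is the property semantic search relies on.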

For example, an AI assistant could remember the following, even months later:

- Previous customer interactions

- Coding preferences

- Research topics

- Business strategies

- Historical workflows

Modern retrieval systems often combine:

- Embedding models

- Semantic search

- Context compression

- Knowledge retrieval pipelines

These retrieval systems are becoming foundational infrastructure for scalable agentic AI.

As autonomous systems become more complex, memory architecture is increasingly important for maintaining reasoning quality and operational reliability.

Step 5: Add Feedback and Reflection Loops

One of the most powerful aspects of ReAct-based systems is their ability to improve through continuous feedback.

Static AI systems generate answers once and stop. Agentic systems evaluate results, identify mistakes, and refine future behavior dynamically.

This creates adaptive intelligence.

Self-correction mechanisms help agents detect:

- Incorrect outputs

- Failed tool calls

- Missing information

- Inconsistent reasoning

- Unsafe actions

For example, if a web search produces low-quality results, the AI can automatically retry using different queries.
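
A minimal sketch of that retry behavior follows. `fake_search` is a hypothetical tool backed by a hard-coded dictionary, and the list of alternative queries is fixed here, whereas a real agent would ask the model to propose reformulations.

```python
from typing import Callable, List, Optional, Tuple


def fake_search(query: str) -> List[str]:
    """Hypothetical search tool: only one specific query returns results."""
    index = {"agentic AI healthcare 2026": ["report A", "report B"]}
    return index.get(query, [])


def search_with_retry(queries: List[str], search: Callable[[str], List[str]],
                      min_results: int = 1) -> Tuple[Optional[str], List[str]]:
    """Try each query in turn and stop at the first one that returns enough results."""
    for query in queries:
        results = search(query)
        if len(results) >= min_results:
            return query, results
    return None, []


used_query, results = search_with_retry(
    ["AI", "agentic AI healthcare 2026"], fake_search
)
```

Returning the query that succeeded, not just the results, gives the agent something to record in memory for the next run.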

Reflection prompting is another major technique used in advanced agent design.

In AI self-reflection workflows, the model is prompted to critique its own outputs before finalizing responses.

Example reflection prompts may include:

- “Did the answer fully address the objective?”

- “Are there logical inconsistencies?”

- “Should additional evidence be gathered?”

- “Could this solution fail under certain conditions?”

These reflection stages significantly improve reasoning reliability.
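
A reflection stage can be expressed as a critique-then-revise loop. In the sketch below, `toy_critique` and `toy_revise` are hypothetical keyword-based stand-ins for what would normally be two model calls, and `REQUIRED_TOPICS` is an assumed checklist.

```python
from typing import Callable, List

REQUIRED_TOPICS = ["risks", "costs"]  # assumed checklist for this example


def toy_critique(draft: str) -> List[str]:
    """Stand-in for a critique prompt: lists required topics the draft never mentions."""
    return [topic for topic in REQUIRED_TOPICS if topic not in draft.lower()]


def toy_revise(draft: str, missing: List[str]) -> str:
    """Stand-in for a revision prompt: appends a sentence per missing topic."""
    return draft + "".join(f" It also covers {topic}." for topic in missing)


def reflect_and_revise(draft: str, critique: Callable[[str], List[str]],
                       revise: Callable[[str, List[str]], str],
                       max_rounds: int = 3) -> str:
    """Critique the draft and revise it until no issues remain or rounds run out."""
    for _ in range(max_rounds):
        issues = critique(draft)
        if not issues:
            return draft
        draft = revise(draft, issues)
    return draft


final = reflect_and_revise(
    "The report summarizes agent adoption.", toy_critique, toy_revise
)
```

The round limit keeps the loop bounded even if the critic never becomes satisfied, which can happen when the critic and the reviser are the same model.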

Feedback loops also support continuous optimization.

Enterprise systems increasingly use monitoring frameworks that track:

- Accuracy

- Efficiency

- Tool usage

- Error rates

- Completion quality

- Workflow performance

This operational data helps agents improve over time through reinforcement signals and iterative adjustments.
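
A small sketch of how such metrics might be accumulated per agent run is shown below. `AgentMetrics` and its fields are hypothetical; real deployments would usually export these values to a monitoring system instead of holding them in memory.

```python
from dataclasses import dataclass


@dataclass
class AgentMetrics:
    runs: int = 0
    failures: int = 0
    tool_calls: int = 0
    total_seconds: float = 0.0

    def record(self, succeeded: bool, tool_calls: int, seconds: float) -> None:
        """Add one completed run to the running totals."""
        self.runs += 1
        self.failures += 0 if succeeded else 1
        self.tool_calls += tool_calls
        self.total_seconds += seconds

    @property
    def error_rate(self) -> float:
        return self.failures / self.runs if self.runs else 0.0

    @property
    def avg_seconds(self) -> float:
        return self.total_seconds / self.runs if self.runs else 0.0


metrics = AgentMetrics()
metrics.record(succeeded=True, tool_calls=3, seconds=1.0)
metrics.record(succeeded=True, tool_calls=5, seconds=2.0)
metrics.record(succeeded=False, tool_calls=8, seconds=3.0)
metrics.record(succeeded=True, tool_calls=4, seconds=2.0)
```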

Strong feedback loops are essential for creating systems capable of autonomous improvement at scale.

Example ReAct Workflow

To understand how all these components work together, consider a practical ReAct workflow example.

Imagine a user asks:

“Research the latest AI agent trends in healthcare and generate a strategic summary.”

A ReAct-powered agent may execute the following workflow:

Step 1: Analyze the Goal

The AI identifies the objective:

- Gather current healthcare AI trends

- Focus on AI agents

- Produce a strategic report

Step 2: Create a Plan

The agent decides to:

- Search recent sources

- Compare industry developments

- Extract key insights

- Summarize findings

- Validate information quality

Step 3: Use External Tools

The agent:

- Searches the web

- Accesses research databases

- Retrieves industry reports

- Extracts relevant data

This is where AI orchestration becomes important because multiple tools may operate simultaneously.

Step 4: Observe Results

The system evaluates:

- Source credibility

- Information relevance

- Data consistency

- Missing insights

Step 5: Refine the Workflow

If gaps exist, the AI performs additional searches or adjusts the strategy dynamically.

Step 6: Generate Final Output

The system produces:

- A structured report

- Trend analysis

- Strategic recommendations

- Supporting insights

This entire process demonstrates how autonomous workflows combine reasoning, planning, tool usage, memory, and feedback into a continuous intelligent execution loop.

That capability is what makes the ReAct framework one of the most important foundations of next-generation autonomous AI systems.

Popular Frameworks for Agentic AI

As agentic AI continues evolving, several powerful frameworks have emerged to help developers build autonomous agents, multi-agent systems, and intelligent workflow orchestration platforms. These frameworks simplify tasks such as reasoning loops, tool integration, memory management, agent communication, and execution control.

In 2026, platforms like LangChain, CrewAI, Microsoft AutoGen, and the OpenAI agent ecosystem are becoming foundational technologies for building next-generation autonomous systems.

1. LangChain Agents

Among modern AI development platforms, LangChain agents have become one of the most widely adopted frameworks for building autonomous AI workflows.

LangChain was originally designed to help developers connect large language models with external tools, APIs, databases, and memory systems. Over time, it evolved into a full ecosystem for creating sophisticated AI agents capable of reasoning and multi-step execution.

One of LangChain’s biggest strengths is its modular architecture.

Developers can combine:

- Prompt templates

- Reasoning chains

- Tool integrations

- Memory systems

- Retrieval pipelines

- Agent orchestration components

This flexibility makes LangChain especially useful for complex enterprise AI systems.

A core concept within the framework is “chains,” where multiple AI operations are linked together into structured workflows. Instead of relying on a single prompt-response interaction, chains allow agents to process information progressively through multiple reasoning stages.
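
The general idea of a chain can be shown with plain function composition. The sketch below is deliberately not LangChain's API: `chain`, `clean`, `truncate`, and `to_prompt` are hypothetical helpers that only illustrate how the output of one stage becomes the input of the next.

```python
from functools import reduce
from typing import Callable

Step = Callable[[str], str]


def chain(*steps: Step) -> Step:
    """Compose steps so that each one receives the previous step's output."""
    def run(text: str) -> str:
        return reduce(lambda acc, step: step(acc), steps, text)
    return run


def clean(text: str) -> str:
    """Collapse runs of whitespace into single spaces."""
    return " ".join(text.split())


def truncate(text: str) -> str:
    """Keep only the first 40 characters."""
    return text[:40]


def to_prompt(text: str) -> str:
    return f"Summarize: {text}"


pipeline = chain(clean, truncate, to_prompt)
prompt = pipeline("  agents   use\n tools ")
```

In a real framework some of these stages would be model calls or retrieval steps, but the data flow is the same.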

LangChain also supports robust memory integration.

Agents can maintain:

- Conversational context

- Historical interactions

- Retrieved knowledge

- Workflow progress

- Long-term memory persistence

This makes the framework highly effective for building adaptive, context-aware autonomous systems.

Because of its strong developer ecosystem and extensive integrations, LangChain remains one of the leading AI frameworks for agentic AI development.

2. CrewAI

While many frameworks focus on single-agent execution, CrewAI specializes in collaborative multi-agent systems.

The framework is designed around the idea that multiple specialized agents can work together as coordinated AI teams rather than relying on one monolithic model.

For example, a CrewAI workflow may include:

- A research agent

- A planning agent

- A writing agent

- A quality-review agent

- A data-analysis agent

Each agent has a specific role, objective, and responsibility within the workflow.

This approach mirrors how human teams operate inside organizations.

One of the biggest advantages of CrewAI is structured collaboration. Agents can:

- Delegate tasks

- Share context

- Coordinate decisions

- Review outputs

- Collaborate dynamically

This creates highly scalable multi-agent orchestration systems capable of handling sophisticated workflows across business operations.
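
Role-based collaboration can be sketched in plain Python as a sequence of agents that read from and write to a shared context. This is not CrewAI's API: `RoleAgent` and `run_crew` are hypothetical names, and each agent's "work" is a simple function standing in for a model call.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class RoleAgent:
    role: str
    work: Callable[[Dict[str, str]], str]  # reads shared context, returns its output


def run_crew(agents: List[RoleAgent], task: str) -> Dict[str, str]:
    """Run agents in order; each one sees the task plus all earlier outputs."""
    context = {"task": task}
    for agent in agents:
        context[agent.role] = agent.work(context)
    return context


researcher = RoleAgent("research", lambda ctx: f"notes on {ctx['task']}")
writer = RoleAgent("writing", lambda ctx: f"draft based on {ctx['research']}")
reviewer = RoleAgent(
    "review", lambda ctx: "approved" if "draft" in ctx["writing"] else "rejected"
)

outcome = run_crew([researcher, writer, reviewer], "agentic AI trends")
```

The shared dictionary plays the part of context sharing, and the reviewer illustrates one agent checking another's output before the workflow finishes.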

CrewAI is becoming increasingly popular for:

- Research automation

- AI content pipelines

- Business workflow automation

- Autonomous operations

- Strategic planning systems

As multi-agent architectures continue growing in importance, CrewAI represents one of the most practical frameworks for building coordinated AI ecosystems.

3. Microsoft AutoGen

Microsoft AutoGen is another major framework shaping the future of agentic AI.

Developed to support advanced conversational agent systems, AutoGen focuses heavily on communication and collaboration between AI agents, tools, and humans.

One of its defining capabilities is agent-to-agent interaction.

Instead of a single AI handling everything independently, AutoGen allows multiple agents to:

- Exchange messages

- Coordinate tasks

- Debate solutions

- Solve problems collaboratively

- Refine outputs iteratively

This creates more dynamic and intelligent execution workflows.

AutoGen is particularly valuable for enterprise environments where workflows often require multiple specialized systems working together.

For example, in a software development workflow:

- One agent may write code

- Another may test functionality

- Another may review security risks

- Another may optimize performance

The agents communicate continuously throughout the process.

Microsoft has positioned AutoGen strongly within enterprise AI automation, making it attractive for organizations building scalable operational AI systems.

As AI collaboration systems become more sophisticated, frameworks like AutoGen are helping bridge the gap between conversational AI and fully autonomous digital workforces.

4. OpenAI Agent Ecosystem

The growing OpenAI agents ecosystem is also playing a major role in the rise of agentic AI.

Modern OpenAI systems increasingly support:

- Tool usage

- Function calling

- Memory integration

- Structured outputs

- Workflow orchestration

- External system interaction

One of the most important capabilities is function calling, which allows AI models to interact with external tools and APIs in structured ways.

Instead of generating plain text instructions, the model can:

- Trigger API requests

- Execute functions

- Retrieve live data

- Access applications

- Perform operational tasks automatically

This capability is foundational for building reliable autonomous agents.
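
On the application side, handling a structured call usually means parsing it, validating the arguments, and dispatching to real code. The sketch below assumes the model emits a JSON object with `name` and `arguments` keys. That shape is loosely modeled on how function-calling interfaces commonly look and is not the exact wire format of any vendor. `get_weather` is a hypothetical tool that returns canned data.

```python
import json
from typing import Any, Dict


def get_weather(city: str, unit: str = "celsius") -> Dict[str, Any]:
    """Hypothetical tool returning canned data instead of calling a real API."""
    return {"city": city, "unit": unit, "temperature": 21}


FUNCTIONS: Dict[str, Dict[str, Any]] = {
    "get_weather": {"handler": get_weather, "required": ["city"], "optional": ["unit"]},
}


def dispatch(call_json: str) -> Dict[str, Any]:
    """Parse a structured call, validate its arguments, and run the matching handler."""
    call = json.loads(call_json)
    spec = FUNCTIONS.get(call.get("name"))
    if spec is None:
        raise ValueError(f"unknown function: {call.get('name')}")
    args = call.get("arguments", {})
    known = spec["required"] + spec["optional"]
    missing = [p for p in spec["required"] if p not in args]
    extra = [p for p in args if p not in known]
    if missing or extra:
        raise ValueError(f"bad arguments: missing={missing} extra={extra}")
    return spec["handler"](**args)


reply = dispatch('{"name": "get_weather", "arguments": {"city": "Paris"}}')
```

Validating before dispatch is the important part: the model's output is treated as untrusted input, so a hallucinated function name or parameter is rejected rather than executed.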

OpenAI’s ecosystem is also driving the development of AI assistants that can:

- Use browsers

- Access enterprise tools

- Analyze files

- Conduct research

- Coordinate workflows

- Perform reasoning-based execution

As these systems mature, developers are increasingly building intelligent operational layers on top of OpenAI models.

The broader trend is clear: AI frameworks are evolving from simple model interfaces into complete agent orchestration ecosystems capable of powering autonomous business operations, collaborative workflows, and next-generation intelligent systems.

Challenges and Risks of Agentic AI

While agentic AI systems offer powerful automation and reasoning capabilities, they also introduce significant technical, operational, and ethical challenges. As organizations move from simple AI assistants to autonomous decision-making systems, reliability and control become critically important.

Building intelligent agents is not only about making AI more capable — it is also about ensuring those systems remain safe, accurate, scalable, and trustworthy in real-world environments.

Hallucinations and Wrong Decisions

One of the biggest risks in agentic AI is hallucination — when an AI system generates false, misleading, or fabricated information with high confidence.

In traditional chatbots, hallucinations may simply produce incorrect answers. But in autonomous systems, the consequences can become much more serious because the AI is capable of taking actions, triggering workflows, and interacting with external systems.

For example, an autonomous agent might:

- Use incorrect data in a financial report

- Execute the wrong API call

- Misinterpret customer intent

- Trigger inaccurate workflow actions

- Generate flawed business recommendations

This creates major concerns around AI reliability.

The challenge becomes even more complex when AI agents operate through multi-step reasoning loops. A small mistake early in the workflow can cascade into larger operational failures later in the execution process.

Another issue is overconfidence.

Large language models often present uncertain information as factual, making it difficult for users and systems to distinguish between verified outputs and generated assumptions.

This is why verification layers are becoming essential in modern agentic architectures.

Organizations increasingly use:

- Human-in-the-loop validation

- Retrieval-based grounding

- External verification tools

- Reflection prompting

- Multi-agent review systems

- Confidence scoring mechanisms

These safeguards help reduce the impact of AI hallucinations while improving operational trustworthiness.

As autonomous systems become more powerful, ensuring accurate reasoning and controlled execution will remain one of the most important challenges in AI development.

Security and Privacy Concerns

Agentic AI systems introduce new cybersecurity and privacy risks because they interact directly with external tools, APIs, databases, and enterprise infrastructure.

Unlike static AI models, autonomous agents can perform actions inside operational systems. This dramatically increases the attack surface for security vulnerabilities.

For example, compromised AI agents could potentially:

- Access sensitive company data

- Trigger unauthorized workflows

- Execute harmful API calls

- Leak confidential information

- Misuse enterprise permissions

This makes AI security a top priority for organizations deploying autonomous systems.

API integrations are particularly sensitive.

Since many agents rely on external services for search, automation, payments, analytics, and communication, poorly secured APIs can expose critical infrastructure to exploitation or misuse.

Data privacy is another major concern.

Autonomous agents often process:

- Customer records

- Internal documents

- Financial data

- Healthcare information

- Proprietary business knowledge

Without strong governance policies, these systems may accidentally expose or mishandle sensitive information.

Organizations must also address permission management carefully. AI agents should not receive unrestricted access to operational systems without clear boundaries and monitoring controls.

To reduce AI privacy risks, enterprises are increasingly implementing:

- Access control systems

- Permission-based tool usage

- Encrypted memory storage

- Audit logging

- Sandboxed execution environments

- Human approval checkpoints
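
Two of these controls, permission-based tool usage and audit logging, can be combined in a thin wrapper around the tools. The sketch below is illustrative only: `GuardedToolbox`, the agent id, and the tool names are hypothetical.

```python
from typing import Callable, Dict, List, Set


class GuardedToolbox:
    """Checks per-agent permissions before every tool call and logs the decision."""

    def __init__(self, tools: Dict[str, Callable[..., object]],
                 permissions: Dict[str, Set[str]]) -> None:
        self._tools = tools
        self._permissions = permissions  # agent id -> names of tools it may call
        self.audit_log: List[str] = []

    def call(self, agent_id: str, tool_name: str, *args: object) -> object:
        allowed = tool_name in self._permissions.get(agent_id, set())
        # Log every attempt, including denied ones.
        self.audit_log.append(
            f"{agent_id} {tool_name} {'ALLOWED' if allowed else 'DENIED'}"
        )
        if not allowed:
            raise PermissionError(f"{agent_id} may not call {tool_name}")
        return self._tools[tool_name](*args)


toolbox = GuardedToolbox(
    tools={
        "read_ticket": lambda ticket_id: f"ticket {ticket_id}: printer offline",
        "issue_refund": lambda amount: f"refunded {amount}",
    },
    permissions={"support_agent": {"read_ticket"}},
)

ticket = toolbox.call("support_agent", "read_ticket", 42)
```

The support agent can read tickets but cannot issue refunds, and both the allowed and the denied attempts leave a trace in `audit_log`.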

As AI agents gain more operational autonomy, security architecture is becoming just as important as model intelligence itself.

Cost and Scalability Challenges

Building large-scale agentic systems can be expensive and infrastructure-intensive.

Unlike simple chatbot interactions, autonomous workflows often involve:

- Multiple reasoning loops

- Continuous tool usage

- Long-context memory processing

- API calls

- Retrieval pipelines

- Multi-agent coordination

This dramatically increases computational requirements.

One major challenge is token consumption.

ReAct-style workflows repeatedly generate reasoning steps, observations, reflections, and execution decisions. Over time, these interactions can create extremely high token usage, especially for enterprise-scale deployments.

As a result, LLM costs can grow rapidly.
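
A rough calculation shows why. Assume, as a simplification, that every step resends the full transcript as input and that each step adds a fixed number of tokens for its thought, action, and observation. With a base prompt of `b` tokens, `s` tokens added per step, and `n` steps, total input tokens are `n*b + s*n*(n-1)/2`, which grows quadratically with the number of steps. The numbers below are illustrative, not measurements.

```python
def total_input_tokens(base_prompt: int, tokens_per_step: int, steps: int) -> int:
    """Sum input tokens over a loop that resends the whole transcript each step."""
    total = 0
    transcript = base_prompt
    for _ in range(steps):
        total += transcript            # the whole transcript is sent as input
        transcript += tokens_per_step  # then grows by this step's new content
    return total


def closed_form(base_prompt: int, tokens_per_step: int, steps: int) -> int:
    """Same quantity as a formula: n*b + s*n*(n-1)/2."""
    return steps * base_prompt + tokens_per_step * steps * (steps - 1) // 2


single_call = total_input_tokens(1000, 500, 1)   # 1000
ten_steps = total_input_tokens(1000, 500, 10)    # 32500
```

Under these assumptions a ten-step run consumes 32.5 times the input tokens of a single call, which is why transcript summarization and prompt caching have such a large effect on cost.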

Organizations deploying autonomous systems at scale must manage:

- Model inference expenses

- API pricing

- Memory storage costs

- Retrieval infrastructure

- Real-time orchestration workloads

Scalability becomes another critical issue.

As more agents operate simultaneously, businesses need infrastructure capable of handling:

- Parallel processing

- Workflow coordination

- High-throughput API requests

- Vector database retrieval

- Persistent memory systems

- Monitoring and logging pipelines

This creates significant AI scalability challenges for enterprise adoption.

Latency is also a concern. Complex reasoning loops and tool orchestration can slow execution times, particularly when multiple agents interact dynamically.

To address these problems, companies are increasingly investing in:

- Smaller specialized models

- Hybrid AI architectures

- Caching systems

- Efficient orchestration layers

- Optimized retrieval pipelines

- Distributed compute infrastructure

As agentic AI adoption grows, balancing capability, reliability, and operational cost will become one of the defining challenges of next-generation AI systems.

Future of Agentic AI in 2026 and Beyond

The future of agentic AI is moving far beyond chatbots and virtual assistants. In 2026 and beyond, AI systems are expected to evolve into interconnected ecosystems of autonomous agents capable of coordinating complex operations across entire organizations.

One of the biggest trends shaping the future of AI agents is the rise of multi-agent ecosystems.

Instead of relying on a single AI model, organizations are beginning to deploy networks of specialized agents that work together. One agent may handle research, another may manage planning, and another may perform execution, while others monitor quality, security, or compliance.

This distributed intelligence model is creating highly scalable operational AI systems.

Another major transformation is the emergence of autonomous business infrastructure.

In the coming years, many companies will integrate autonomous enterprise AI directly into core operations such as:

- Customer service

- Marketing

- Software development

- Financial analysis

- IT operations

- Supply chain management

- Internal workflow orchestration

Rather than simply assisting employees, AI systems will increasingly operate as active digital collaborators.

This is also accelerating the concept of AI employees.

These systems will not replace entire human teams overnight, but they will handle growing portions of repetitive, analytical, and operational work autonomously. Businesses may soon deploy AI agents with specialized responsibilities similar to human job roles:

- AI research analysts

- AI operations managers

- AI coding assistants

- AI sales coordinators

- AI workflow supervisors

At the same time, hyperautomation is becoming a major enterprise objective.

Traditional automation focused on repetitive tasks. Hyperautomation combines AI reasoning, orchestration, analytics, APIs, and decision-making systems into fully integrated autonomous workflows.

This shift will dramatically increase operational speed and scalability.

Another important development is real-time orchestration.

Future AI systems will continuously coordinate across cloud infrastructure, enterprise platforms, APIs, sensors, databases, and communication systems simultaneously. These real-time orchestration layers will allow businesses to respond dynamically to changing conditions with minimal manual intervention.

Despite rapid automation growth, human-AI collaboration will remain essential.

Humans will increasingly focus on:

- Strategic thinking

- Creativity

- Oversight

- Ethical decision-making

- Complex judgment

- Governance

Meanwhile, AI agents will handle execution-heavy operational workflows.

The long-term future of AI is not purely autonomous machines replacing humans. It is a collaborative ecosystem where intelligent systems amplify human productivity, accelerate innovation, and transform how organizations operate at scale.

Best Practices for Building Reliable AI Agents

As autonomous AI systems become more powerful, reliability and governance become critically important. Building capable agents is no longer enough — organizations must also ensure those systems operate safely, predictably, and responsibly.

One of the most important best practices is keeping humans in the loop.

Even highly advanced agents can make mistakes, hallucinate information, or take unintended actions. Human oversight provides an additional safety layer for:

- High-risk decisions

- Financial operations

- Legal workflows

- Sensitive customer interactions

- Security-related tasks

Human review systems help improve accountability and reduce operational risks.

Another critical principle is limiting tool permissions.

AI agents should only receive access to the tools and systems necessary for their specific tasks. Restricting permissions minimizes the risk of:

- Unauthorized actions

- Data exposure

- Workflow misuse

- Security vulnerabilities

This principle is becoming central to modern AI governance strategies.

Evaluation systems are equally important.

Teams running reliable agents should continuously monitor:

- Output quality

- Accuracy

- Task completion

- Tool usage

- Error rates

- Reasoning consistency

Organizations increasingly use automated testing pipelines and validation frameworks to improve trustworthiness in reliable AI systems.

Memory management also requires careful design.

While persistent memory improves personalization and long-term reasoning, excessive memory retention can create privacy, security, and context-management problems. Developers should store only relevant information and implement clear retention policies.

Monitoring agent behavior is another essential practice.

Autonomous systems should include:

- Logging systems

- Audit trails

- Performance monitoring

- Execution tracking

- Alert systems

These monitoring layers help organizations detect abnormal behavior early.

Finally, reliable agentic systems should always include fallback mechanisms.

If the AI encounters uncertainty, missing information, tool failures, or conflicting outputs, the workflow should:

- Escalate to a human

- Trigger verification steps

- Switch to safer execution modes

- Pause operations automatically

These safeguards are essential for building trustworthy and safe AI agents capable of operating in real-world business environments.
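
The fallback choices listed above can be encoded as an explicit decision function. In this sketch the `Fallback` enum, the `choose_fallback` function, and the confidence thresholds (0.9 and 0.6) are all assumptions for illustration; real thresholds would need to be tuned per workflow.

```python
from enum import Enum


class Fallback(Enum):
    PROCEED = "proceed"
    VERIFY = "trigger verification steps"
    ESCALATE = "escalate to a human"
    PAUSE = "pause operations"


def choose_fallback(confidence: float, tool_failed: bool, high_risk: bool) -> Fallback:
    """Pick the safest applicable response; thresholds are illustrative assumptions."""
    if tool_failed:
        return Fallback.PAUSE
    if high_risk and confidence < 0.9:
        return Fallback.ESCALATE
    if confidence < 0.6:
        return Fallback.VERIFY
    return Fallback.PROCEED


decision = choose_fallback(confidence=0.8, tool_failed=False, high_risk=True)
```

Making the policy a single function keeps it easy to audit and test, which is harder when the same rules are scattered across prompts.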

As agentic AI adoption accelerates, organizations that prioritize governance, reliability, and operational safety will be best positioned to scale autonomous systems successfully.

Future of Agentic AI in 2026 and Beyond

The future of agentic AI is moving rapidly toward fully connected ecosystems of intelligent, autonomous systems capable of operating across entire organizations. From 2026 onward, AI will no longer function only as a chatbot or productivity assistant. It will increasingly become an active operational layer inside businesses.

One of the biggest developments shaping the future of AI agents is the rise of multi-agent ecosystems.

Instead of relying on a single AI model to handle everything, organizations are beginning to deploy specialized AI agents that collaborate like digital teams. For example:

- Research agents gather information

- Planning agents coordinate workflows

- Analysis agents evaluate data

- Execution agents perform operational tasks

- Monitoring agents oversee quality and security

These systems communicate continuously, share context, and coordinate decisions dynamically.
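A heavily simplified sketch of this idea is a pipeline of specialized agents that pass a shared context along. Each agent here is a plain function with placeholder data standing in for a model call, and all names are hypothetical. Real multi-agent systems typically communicate asynchronously rather than in a fixed sequence.

```python
def research_agent(context):
    # Placeholder findings; a real agent would search or query sources.
    context["findings"] = ["finding A", "finding B"]
    return context


def analysis_agent(context):
    # Reads what the research agent wrote into the shared context.
    context["summary"] = f"{len(context['findings'])} findings reviewed"
    return context


def monitoring_agent(context):
    # Approves the result only if the earlier agents produced output.
    context["approved"] = "summary" in context and bool(context["findings"])
    return context


def run_pipeline(goal, agents):
    """Pass one shared context dict through each agent in order."""
    context = {"goal": goal}
    for agent in agents:
        context = agent(context)
    return context
```

The shared dictionary plays the role of the context that agents exchange; swapping it for a message queue or database would not change the overall shape.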

This evolution is laying the foundation for autonomous businesses.

In the coming years, companies will integrate autonomous enterprise AI into core business operations such as:

- Customer support

- Marketing automation

- Financial analysis

- IT infrastructure management

- Software development

- Supply chain optimization

- Sales operations

Rather than simply assisting employees, AI systems will actively execute operational workflows with minimal supervision.

Another major shift is the emergence of AI employees.

Organizations are increasingly experimenting with AI agents assigned to specific operational roles. These digital workers may function as:

- AI researchers

- AI coding assistants

- AI marketing coordinators

- AI operations analysts

- AI workflow managers

Unlike static automation tools, these agents can reason, adapt, and improve over time.

Conclusion

Agentic AI is rapidly transforming artificial intelligence from passive assistance into autonomous execution. Instead of simply responding to prompts, modern AI systems can now reason, plan, use tools, remember context, and complete multi-step workflows independently. This shift is creating a new generation of intelligent systems capable of operating across research, software development, customer support, operations, and enterprise automation.

At the center of this transformation is the ReAct framework. By combining reasoning and action into continuous feedback loops, ReAct enables AI agents to think dynamically, interact with external systems, evaluate outcomes, and refine decisions in real time. This architecture has become one of the foundational building blocks behind modern autonomous AI agents and scalable agentic workflows.

FAQ Section

1. What is Agentic AI?

Agentic AI refers to artificial intelligence systems capable of autonomous decision-making, reasoning, planning, and action execution. Unlike traditional AI models that only generate responses, agentic systems can pursue goals independently, interact with tools, and complete multi-step workflows with minimal human supervision.

2. What is the ReAct framework in AI?

The ReAct framework stands for “Reason + Act.” It is an agent architecture that interleaves reasoning steps with actions. Instead of only generating answers, ReAct-based systems repeatedly reason, perform an action, observe the outcome, and refine their next decision.

The framework is widely used in autonomous AI agents and modern agentic workflows.

3. How do autonomous AI agents work?

Autonomous AI agents operate through continuous reasoning-action loops. Typically, they:

- Receive a goal

- Analyze the task

- Plan actions

- Use tools or APIs

- Evaluate outputs

- Repeat the process until completion

These systems often combine memory, planning, reasoning, and external tool usage to complete complex workflows.
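The loop described above can be sketched in a few lines. In this illustration a scripted `scripted_policy` function stands in for the language model, and `react_loop`, the `lookup` tool, and the `"finish"` action name are all assumptions rather than any framework's actual API.

```python
def react_loop(goal, policy, tools, max_steps=5):
    """Alternate reasoning and acting until the policy finishes or steps run out."""
    history = []  # (thought, action, argument, observation) tuples
    for _ in range(max_steps):
        thought, action, argument = policy(goal, history)
        if action == "finish":
            return argument, history
        observation = tools[action](argument)  # act, then observe the result
        history.append((thought, action, argument, observation))
    return None, history  # step budget exhausted without an answer


def scripted_policy(goal, history):
    # Stand-in for an LLM call: look the goal up once, then answer.
    if not history:
        return ("I should look this up.", "lookup", goal)
    last_observation = history[-1][3]
    return ("I have what I need.", "finish", last_observation)


# A toy tool backed by a dictionary instead of a real API.
FACTS = {"capital of France": "Paris"}
tools = {"lookup": lambda query: FACTS.get(query, "unknown")}

answer, trace = react_loop("capital of France", scripted_policy, tools)
```

The `max_steps` cap is a deliberate design choice: it bounds the loop so that an agent that never decides to finish cannot run indefinitely.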

4. What are agentic workflows?

Agentic workflows are AI-driven operational processes where autonomous agents execute multi-step tasks dynamically using reasoning and decision-making capabilities.

Unlike traditional automation, agentic workflows can adapt to changing conditions, revise plans, and interact with multiple tools or systems in real time.

5. Is ReAct better than Chain-of-Thought prompting?

ReAct and Chain-of-Thought (CoT) prompting serve different purposes.

Chain-of-Thought prompting improves internal reasoning by encouraging step-by-step thinking. ReAct extends this concept by combining reasoning with real-world actions such as web searches, API calls, tool usage, and workflow execution.

For autonomous AI systems, ReAct is generally the better fit because the agent can check its reasoning against real observations from tools and adjust its next step accordingly.

6. What tools are used to build AI agents?

Modern AI agents are commonly built using:

- Large Language Models (LLMs)

- APIs

- Vector databases

- Memory systems

- Workflow orchestration tools

- Agent frameworks

Popular platforms include: