Summary: Manual prompting is fragile, hard to scale, and difficult to maintain, which makes it a poor fit for modern AI systems. DSPy turns prompting into a structured, programmable process that can be tested and optimized. The key takeaway is simple: treat prompts like code, not text. When you do this, you can build AI systems that are more reliable and easier to evolve!

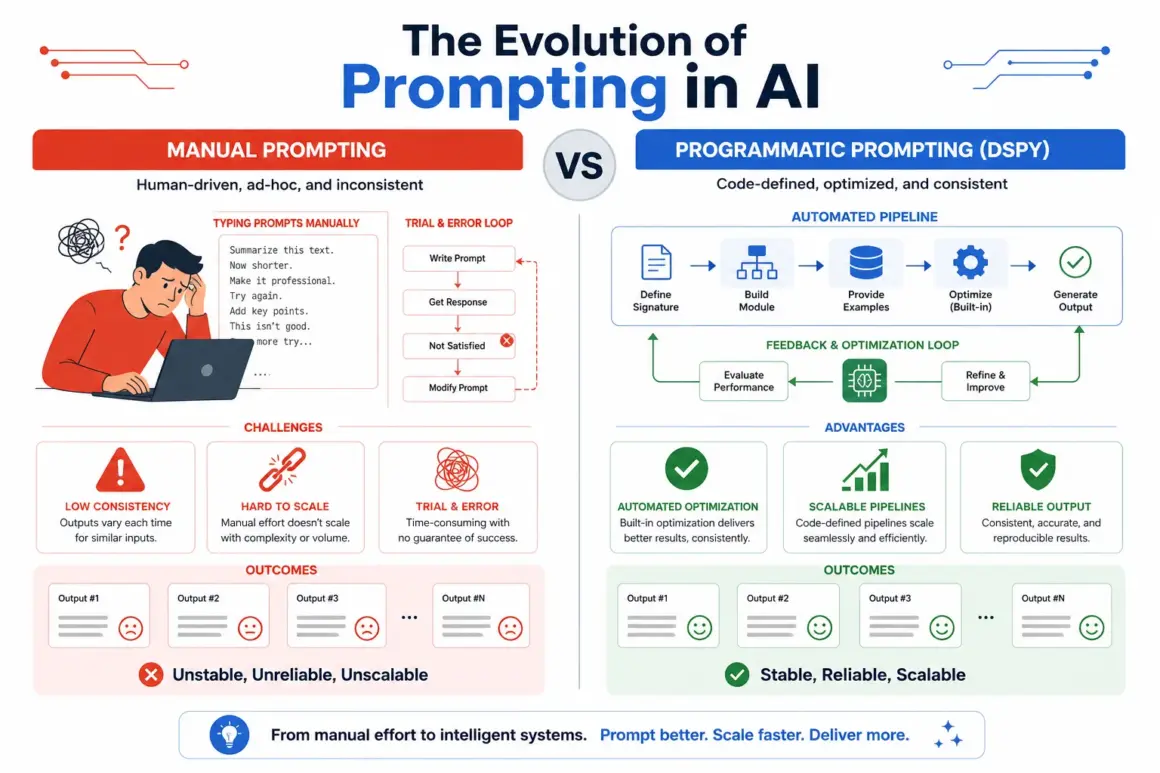

Prompt engineering once felt like a superpower in AI. People spent hours crafting the “perfect prompt” to get better outputs. But here’s the truth — this manual approach is slowly becoming outdated! As AI systems grow, relying only on handcrafted prompts is no longer practical.

The biggest problem with manual prompting is fragility. A small change in wording can completely change the output. This makes results inconsistent and hard to trust, especially when building real-world applications. It also becomes difficult to maintain quality across different use cases.

Scaling is another major challenge. Writing prompts one by one is slow and inefficient. When businesses need hundreds or thousands of prompts, manual work simply cannot keep up. This creates bottlenecks and limits the true potential of AI systems.

That’s where programmatic or declarative prompting comes in. Instead of writing prompts manually, you define the logic in code. This allows prompts to be generated, tested, and improved automatically. It brings structure, consistency, and speed to AI workflows.
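To make "defining the logic in code" a little more concrete, here is a minimal sketch in plain Python. The `build_prompt` helper and its parameters are hypothetical names chosen for this illustration; they are not part of DSPy or any other library.

```python
def build_prompt(task: str, text: str, max_words: int = 50) -> str:
    """Render one prompt from structured arguments instead of hand-edited text."""
    lines = [
        f"Task: {task}",
        f"Constraint: answer in at most {max_words} words.",
        f"Input: {text}",
        "Output:",
    ]
    return "\n".join(lines)


# Generate many prompts from data rather than writing each one by hand.
reviews = ["Great battery life.", "Screen cracked after a week."]
prompts = [build_prompt("Classify the sentiment", r, max_words=1) for r in reviews]

# Because prompts come from code, they can be checked like any other output.
assert all(p.startswith("Task: Classify the sentiment") for p in prompts)
assert all(p.endswith("Output:") for p in prompts)
print(prompts[0])
```

Changing the template in one place updates every generated prompt, which is the property the rest of this post builds on.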

One of the most powerful tools leading this shift is DSPy. It helps developers move from guessing prompts to building optimized prompt pipelines. With DSPy, you can treat prompting like software development — structured, testable, and scalable.

In this blog, you will learn how to move beyond manual prompting. We will explore automated prompt tuning, optimization techniques, and how to build efficient prompt pipelines. By the end, you’ll understand how to create smarter and more reliable AI systems without endless trial and error!

What Problem Does DSPy Solve?

To understand the value of DSPy, think about how programming has evolved over time. In the early days, developers had to write machine-level instructions manually. This gave them full control, but it was extremely hard to manage and maintain. Later, high-level programming languages changed everything by allowing developers to describe what they wanted, while compilers handled the complex details.

DSPy brings a similar transformation to AI prompting. Instead of manually writing and adjusting prompt text, you simply define a structured task. This is done using a signature, which clearly describes what input the system receives and what output it should generate. DSPy then automatically converts this into an effective prompt behind the scenes.

This approach reduces the burden of constantly rewriting prompts. Small wording changes or model updates are less likely to break your system, because the prompt is regenerated from the task definition rather than maintained by hand. By focusing on the task definition instead of the prompt itself, your AI workflows become more stable and easier to manage.

Another powerful advantage of DSPy is optimization. Since prompts are generated programmatically rather than written manually, they can be improved automatically. You can provide sample data, set evaluation metrics, and let DSPy discover better prompt strategies on its own.

This ability to automate and optimize is what makes DSPy truly different. It is not just a tool for prompting — it is a complete system that turns prompting into a scalable and efficient engineering process.

Manual vs Programmatic Prompting

| Aspect | Manual Prompting | Programmatic Prompting (DSPy) |
|---|---|---|
| Consistency | Low | High |
| Scalability | Limited | Easily scalable |
| Optimization | Trial and error | Automated tuning |
| Maintenance | Difficult | Structured and manageable |
| Reliability | Unstable outputs | More predictable results |

What is Declarative Prompting?

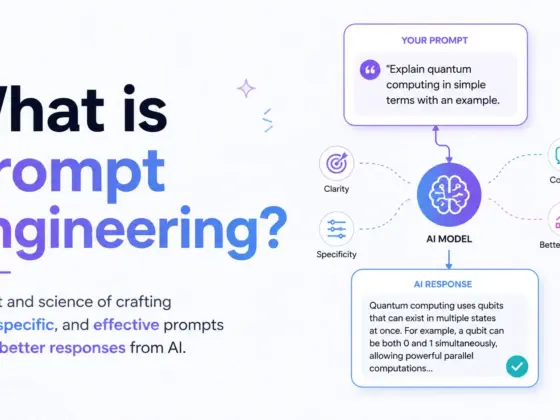

Declarative prompting is a new way of working with AI where you focus on the desired outcome instead of the exact wording. Instead of telling the model “what to say,” you define what result you want to achieve. This makes AI systems smarter, more flexible, and easier to manage.

In simple terms, declarative prompting shifts your mindset. You don’t manually write every prompt anymore. Instead, you describe the task, constraints, and goals — and the system handles the rest. This approach reduces guesswork and improves consistency across outputs.

To understand it better, let’s compare it with traditional prompting methods. Manual or imperative prompting requires direct instructions for every situation. Declarative prompting, on the other hand, focuses on high-level intent and lets the system optimize how to get there.

Imperative vs Declarative Prompting

| Feature | Imperative Prompting (Manual) | Declarative Prompting |
|---|---|---|
| Approach | Write exact prompts | Define desired outcome |
| Flexibility | Low | High |
| Reusability | Limited | Strong |
| Maintenance | Hard to update | Easy to manage |
| Optimization | Manual trial and error | Automated |

One of the biggest advantages of declarative prompting is reusability. You can create prompt templates or modules and use them across multiple tasks. This saves time and ensures consistent results without rewriting everything again and again.

It also improves maintainability. When your AI system grows, updating a single declarative rule is much easier than editing dozens of individual prompts. This makes your workflow cleaner and more organized, just like modern software systems.

Another major benefit is scalability. Declarative prompting allows you to handle large and complex AI applications with ease. Whether you are building chatbots, automation tools, or content systems, this method supports growth without breaking performance.

This approach strongly aligns with modern software engineering practices. Concepts like abstraction, modularity, and automation are at its core. Tools like DSPy bring this idea to life by enabling prompt abstraction and turning AI prompting into a structured programming paradigm.

The Problem with Manual Prompt Engineering

Manual prompt engineering may look simple at first, but it comes with serious limitations. As AI systems grow, these problems become more visible and harder to manage. Let’s break down the key issues that make manual prompting inefficient and outdated.

Fragility of Prompts

One of the biggest problems is how fragile prompts are. Even a small change in wording can produce completely different outputs. This makes it difficult to rely on prompts for consistent results, especially in real-world applications.

In production systems, this lack of stability becomes risky. You cannot guarantee the same quality every time. This unpredictability can break workflows and reduce trust in AI systems.

Lack of Optimization

Manual prompting does not offer a proper way to improve performance. Most developers rely on guesswork and repeated testing. This trial-and-error approach is slow and often frustrating.

There is no built-in system to measure or optimize prompt quality. As a result, improvements are inconsistent and not scalable. This limits the ability to build high-performing AI applications.

Poor Scalability

Managing a few prompts is easy, but handling hundreds is not. As applications grow, prompt management becomes messy and confusing. Keeping track of different versions and updates becomes a major challenge.

Testing and maintaining prompts across multiple use cases is also difficult. Without a structured system, scaling AI solutions becomes inefficient and error-prone.

Hidden Costs

Manual prompting may seem cost-effective, but it has hidden expenses. Writing, testing, and refining prompts takes a lot of time. This slows down development and reduces productivity.

There is also a high maintenance overhead. Every update or change requires manual effort, which increases long-term costs. Tools like DSPy help solve these issues by automating and optimizing prompt workflows.

Summary of Challenges

| Problem Area | Key Issue | Impact on AI Systems |
|---|---|---|
| Fragility | Small changes break outputs | Low reliability |
| Optimization | No systematic improvement | Poor performance |
| Scalability | Hard to manage many prompts | Limited growth |
| Hidden Costs | Time and maintenance overhead | Increased expenses |

These challenges clearly show why manual prompt engineering is not sustainable. Moving toward declarative and programmatic approaches is the smarter and future-ready solution!

Introducing DSPy: The Framework for Programmatic Prompting

As AI systems evolve, developers need better tools than manual prompting. This is where DSPy comes into play! It is a modern framework designed to make prompting more structured, scalable, and automated.

DSPy originated in the Stanford NLP group at Stanford University. It focuses on turning prompts into programmable components rather than plain text. This shift allows developers to build smarter AI systems with less manual effort.

The core philosophy of DSPy is simple but powerful. Instead of writing prompt strings again and again, you create modular programs. These programs define what the AI should do, and DSPy handles how it should be done.

Key Concepts of DSPy

| Concept | Description |
|---|---|
| Signatures | Define input and output behavior of AI tasks |
| Modules | Reusable components powered by language models |
| Optimizers | Automatically improve prompts and performance |

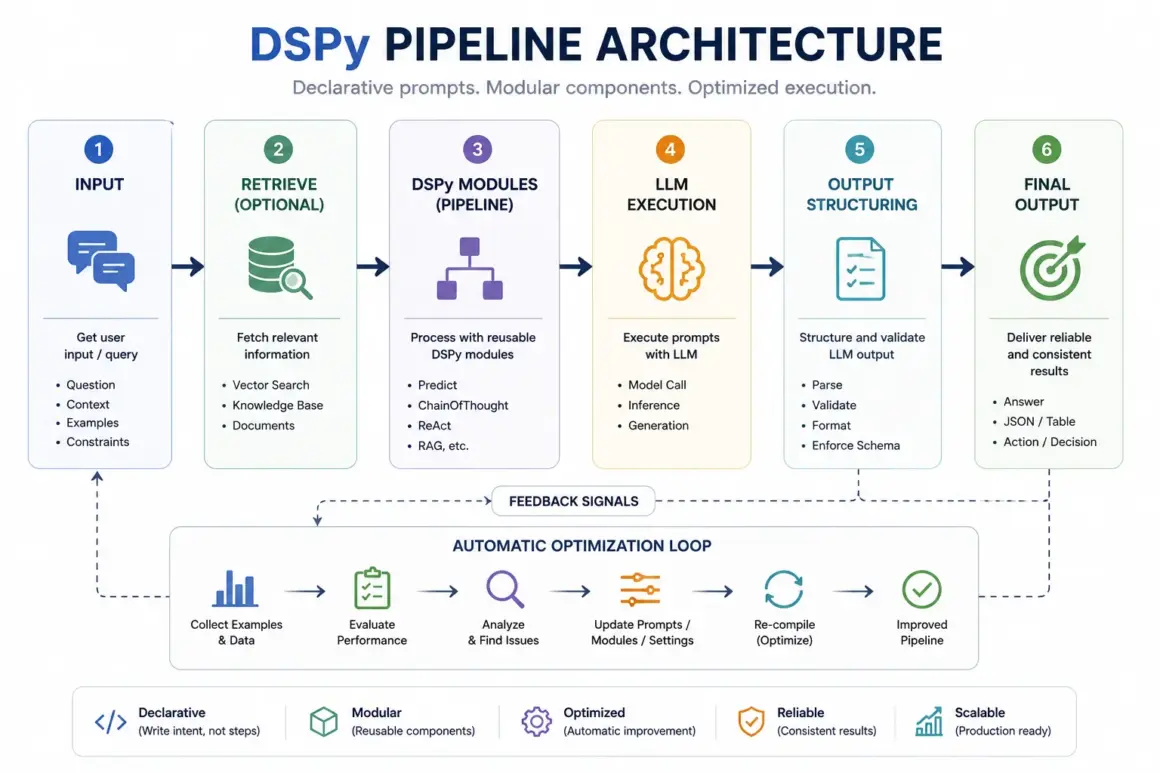

How DSPy Works (Architecture Breakdown)

To truly understand DSPy, you need to look at how its architecture is designed. It breaks down AI workflows into simple, reusable components that work together efficiently.

Signatures: Defining Intent

Signatures are the foundation of DSPy. They define the input and output structure of a task. Instead of writing prompts, you describe what goes in and what should come out.

For example, in a question-answering system, the input could be a question, and the output would be a precise answer. Similarly, for summarization, the input is a long text, and the output is a short summary.

This approach removes confusion and ensures clarity. It also makes your AI system more predictable and easier to manage.
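The idea can be sketched without any library at all. The toy class below is an assumption-laden illustration of how an input/output declaration might be rendered into prompt text; `ToySignature` and its `render` method are hypothetical and do not reflect how DSPy formats prompts internally.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToySignature:
    """Declares what goes in and what comes out; says nothing about wording."""

    instructions: str
    inputs: tuple[str, ...]
    outputs: tuple[str, ...]

    def render(self, **values: str) -> str:
        """Turn the declaration plus concrete inputs into prompt text."""
        missing = [name for name in self.inputs if name not in values]
        if missing:
            raise ValueError(f"missing inputs: {missing}")
        lines = [self.instructions]
        lines += [f"{name.capitalize()}: {values[name]}" for name in self.inputs]
        # Leave the first output field open for the model to complete.
        lines.append(f"{self.outputs[0].capitalize()}:")
        return "\n".join(lines)


qa = ToySignature("Answer the question concisely.", ("question",), ("answer",))
summarize = ToySignature("Summarize the text in one sentence.", ("text",), ("summary",))

print(qa.render(question="What is the capital of France?"))
```

The same declaration style covers both the question-answering and the summarization example: only the field names and the one-line instruction change.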

Modules: Building Blocks

Modules are the core building blocks of DSPy. These are reusable components that perform specific tasks using language models. You can combine multiple modules to create complex AI systems.

Some common module types include basic predictors (`dspy.Predict`), chain-of-thought modules (`dspy.ChainOfThought`), and ReAct-style reasoning modules (`dspy.ReAct`). Each module handles a part of the task, making the system more organized and flexible.

The best part is composability! You can connect modules like building blocks to create powerful workflows. This makes DSPy perfect for creating scalable and maintainable AI applications.

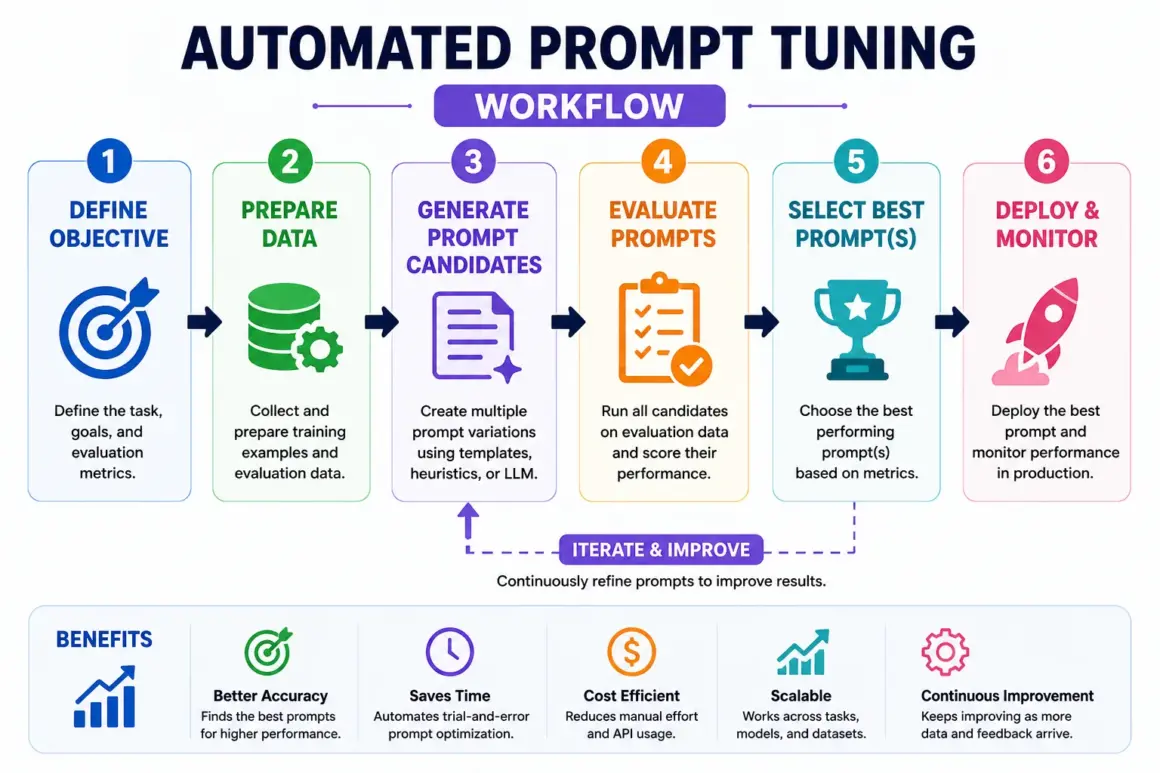

Optimizers: Automated Prompt Tuning

One of the most powerful features of DSPy is its optimizers. These components automatically improve prompts without manual effort. This is called automated prompt tuning.

Instead of guessing what works, DSPy uses training examples and a metric you define to refine prompts. It tries different variations, such as alternative instructions and few-shot demonstrations, and keeps the best-performing ones. This often results in higher accuracy and better outputs.

This process saves time and removes the need for trial-and-error. It also ensures consistent performance across different tasks.
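The core loop behind this kind of tuning can be shown with a toy example. This is a deliberately simplified sketch, not DSPy's algorithm: `fake_model` is a deterministic stand-in for a language model, and the candidate instructions and tiny dataset are made up for illustration.

```python
def fake_model(prompt: str) -> str:
    """Deterministic stand-in for a language model (an assumption for this sketch).

    It answers in upper case only when the prompt asks for it.
    """
    question = prompt.rsplit("Q: ", 1)[-1]
    answer = {"2+2": "four", "3+3": "six"}.get(question, "unknown")
    return answer.upper() if "upper case" in prompt.lower() else answer


def exact_match(expected: str, predicted: str) -> bool:
    return expected == predicted


def score(instruction: str, examples: list[tuple[str, str]]) -> float:
    """Fraction of examples the model gets right under this instruction."""
    hits = sum(
        exact_match(expected, fake_model(f"{instruction}\nQ: {question}"))
        for question, expected in examples
    )
    return hits / len(examples)


trainset = [("2+2", "FOUR"), ("3+3", "SIX")]
candidates = [
    "Answer the question.",
    "Answer the question in UPPER CASE.",
    "Answer briefly.",
]

# Try every candidate and keep the one with the best metric score.
best = max(candidates, key=lambda c: score(c, trainset))
print(best, score(best, trainset))
```

Real optimizers search a much larger space and call an actual model, but the shape is the same: propose variations, score them against data with a metric, keep the winner.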

Prompt Pipelines

DSPy allows you to build prompt pipelines, which are multi-step workflows. Instead of a single prompt, you can chain multiple steps together to solve complex problems.

For example, one module can retrieve information, another can process it, and a third can generate the final output. This creates a smooth and logical flow of tasks.

Prompt pipelines are especially useful for advanced applications like research assistants, chatbots, and automation tools. They help break down complex reasoning into manageable steps.

Declarative vs Traditional Prompting

When comparing manual prompting with declarative prompting, the difference is clear. Traditional methods rely heavily on writing and tweaking prompt strings. In contrast, declarative prompting with DSPy uses structured code to define tasks and automate improvements.

This shift makes AI systems more reliable, scalable, and easier to manage. Instead of guessing what works, developers can build optimized systems that improve over time.

| Aspect | Manual Prompting | DSPy Declarative Prompting |
|---|---|---|
| Approach | String-based prompts | Code-based structure |
| Optimization | Manual trial and error | Automated prompt tuning |
| Scalability | Low | High |
| Maintainability | Poor | Strong |
| Testing | Difficult | Systematic and measurable |

With declarative prompting, you move from fragile experimentation to a more engineering-driven approach. This is why it is becoming the preferred AI programming paradigm for modern applications.

Real-World Use Cases of DSPy

The real power of DSPy can be seen in practical applications. It helps developers build systems that are not only smart but also consistent and scalable. Let’s explore some key use cases.

Retrieval-Augmented Generation (RAG)

In Retrieval-Augmented Generation systems, DSPy enables structured prompt pipelines. These pipelines retrieve relevant data and then generate accurate responses based on that information.

This can improve answer quality and reduce hallucinations, because responses are grounded in retrieved data. It is especially useful for search engines, knowledge bases, and research tools.
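A retrieve-then-generate flow can be sketched in a few lines. The retriever below ranks by simple word overlap, which is only a stand-in for a real vector or keyword search; `retrieve`, `build_grounded_prompt`, and the sample documents are hypothetical and not taken from DSPy.

```python
def retrieve(query: str, documents: list[str], k: int = 2) -> list[str]:
    """Rank documents by how many query words they share (toy retriever)."""
    query_words = set(query.lower().split())
    ranked = sorted(
        documents,
        key=lambda doc: len(query_words & set(doc.lower().split())),
        reverse=True,
    )
    return ranked[:k]


def build_grounded_prompt(query: str, passages: list[str]) -> str:
    """Place retrieved passages in the prompt so the answer can rely on them."""
    context = "\n".join(f"[{i}] {p}" for i, p in enumerate(passages, start=1))
    return (
        "Answer using only the context below. "
        "If the context is insufficient, say so.\n"
        f"Context:\n{context}\n"
        f"Question: {query}\nAnswer:"
    )


docs = [
    "DSPy programs are built from signatures and modules.",
    "Manual prompts are edited by hand.",
    "Optimizers in DSPy tune prompts against a metric.",
]
passages = retrieve("what do DSPy optimizers tune", docs, k=1)
print(build_grounded_prompt("What do DSPy optimizers tune?", passages))
```

In a DSPy program the generation step would be a module rather than a hand-built string, but the division of labor between retrieval and generation is the same.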

Chatbots & AI Assistants

Chatbots built with DSPy are more consistent and reliable. Instead of unpredictable responses, they follow structured logic defined through modules and signatures.

It also becomes easier to update and improve these systems. Developers can make changes at the program level without rewriting multiple prompts.

Content Generation Systems

DSPy is highly effective for content workflows like blog writing and summarization. You can create pipelines where one module generates ideas, another writes content, and another refines it.

This makes content generation faster and more organized. It is ideal for SEO teams, marketers, and automated publishing systems.

Enterprise AI Systems

Large organizations need AI systems that are reliable at scale. DSPy provides structured testing, evaluation, and optimization features that make this possible.

It helps enterprises manage complex workflows, maintain consistency, and ensure high-quality outputs across different applications. This makes it a powerful tool for production-level AI systems.

Why These Use Cases Matter

| Use Case | Key Benefit | Outcome |
|---|---|---|
| RAG Systems | Accurate information retrieval | Better answers |
| Chatbots | Consistent responses | Improved user experience |
| Content Generation | Automated workflows | Faster production |
| Enterprise Systems | Scalable and reliable AI | Business efficiency |

These examples clearly show how DSPy is transforming AI development. It replaces manual effort with automated prompt tuning and structured prompt pipelines, making systems smarter and future-ready!

Building Your First DSPy Program (Step-by-Step)

Getting started with DSPy is easier than it looks! You don’t need to be an expert — just basic knowledge of Python is enough. Let’s walk through a simple step-by-step process.

Step 1: Setup & Installation

First, you need a Python environment on your system. You can use tools like virtual environments to keep your setup clean and organized.

After that, install DSPy with a package manager such as pip (for example, `pip install dspy`). Once installed, you are ready to start building AI programs using declarative prompting.

Step 2: Define a Signature

A signature defines what your AI system should do. For example, you can create a simple Question → Answer structure.

This tells DSPy what input it will receive and what output it should generate. It removes the need to manually design prompts for every case.

Step 3: Create a Module

Next, you build a module that uses the signature. This module acts as a functional unit that performs the task.

You can think of it like a small program that processes input and produces output. Modules can also be reused in different projects.

Step 4: Add Optimization

Now comes the powerful part — optimization! DSPy allows you to train your module using example data.

It automatically adjusts prompts to improve performance. This is where DSPy optimization replaces manual trial-and-error.

Step 5: Evaluate Results

Finally, you evaluate how well your system performs. You can use metrics like accuracy, relevance, and consistency.

Based on the results, you can refine your data, metric, or signature and run the optimizer again. This creates a feedback loop for better AI performance over time.
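The evaluation idea itself is simple enough to show in plain Python. DSPy ships its own evaluation utilities, so treat the loop below only as a sketch of the concept: `evaluate`, `stub_program`, and the two-item dev set are hypothetical stand-ins.

```python
from typing import Callable

Example = dict[str, str]


def evaluate(program: Callable[[str], str], devset: list[Example],
             metric: Callable[[str, str], bool]) -> float:
    """Return the fraction of held-out examples the program gets right."""
    if not devset:
        raise ValueError("devset must not be empty")
    hits = sum(metric(ex["answer"], program(ex["question"])) for ex in devset)
    return hits / len(devset)


def exact_match(expected: str, predicted: str) -> bool:
    return expected.strip().lower() == predicted.strip().lower()


def stub_program(question: str) -> str:
    """Stand-in for a compiled program; always answers 'Paris'."""
    return "Paris"


devset = [
    {"question": "Capital of France?", "answer": "paris"},
    {"question": "Capital of Spain?", "answer": "Madrid"},
]
accuracy = evaluate(stub_program, devset, exact_match)
print(f"accuracy = {accuracy:.2f}")
```

Keeping the evaluation set separate from the examples used for optimization gives a more honest picture of how the program behaves on new inputs.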

Automated Prompt Tuning Explained

Automated prompt tuning is the process of improving prompts without manual editing. Instead of guessing what works, the system learns from data and optimizes itself.

With DSPy, this process is largely automated. Given example data and a metric, an optimizer tests different prompt variations and selects the best-performing ones.

This removes the need for endless trial-and-error. Developers no longer need to tweak prompts manually again and again.

Key Benefits of Automated Prompt Tuning

| Benefit | Impact on AI Systems |
|---|---|
| Better Accuracy | More precise outputs |
| Reduced Hallucination | Fewer incorrect responses |
| Consistent Results | Stable performance |

This is why automated prompt tuning is a game-changer in modern AI development!

Prompt Pipelines: Scaling AI Systems

As AI systems grow, a single prompt is not enough. You need structured workflows to handle complex tasks. This is where prompt pipelines come in.

A pipeline is a sequence of steps where each module performs a specific function. These steps work together to solve larger problems.

For example, a typical pipeline may look like this:

Retrieve → Analyze → Generate → Refine

First, the system retrieves data. Then it analyzes the information, generates a response, and finally refines the output for quality.

This multi-step reasoning approach makes AI systems more powerful and reliable. It also allows easy scaling for advanced applications.
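The Retrieve → Analyze → Generate → Refine flow can be sketched as a chain of small functions that pass a shared state along. Everything here is a toy: the stage functions use plain string handling where a real pipeline would call a retriever or a language model, and all names are hypothetical.

```python
from functools import reduce
from typing import Callable

Stage = Callable[[dict], dict]


def retrieve(state: dict) -> dict:
    corpus = {"dspy": "DSPy compiles declarative programs into prompts."}
    hits = [text for key, text in corpus.items() if key in state["query"].lower()]
    return {**state, "passages": hits}


def analyze(state: dict) -> dict:
    # Strip trailing periods so the facts can be joined cleanly later.
    return {**state, "facts": [p.rstrip(".") for p in state["passages"]]}


def generate(state: dict) -> dict:
    draft = "; ".join(state["facts"]) or "no relevant information found"
    return {**state, "draft": draft}


def refine(state: dict) -> dict:
    text = state["draft"]
    return {**state, "answer": text[0].upper() + text[1:] + "."}


def run_pipeline(stages: list[Stage], query: str) -> dict:
    """Feed the output of each stage into the next one."""
    return reduce(lambda state, stage: stage(state), stages, {"query": query})


result = run_pipeline([retrieve, analyze, generate, refine], "What is DSPy?")
print(result["answer"])
```

Because each stage has the same shape, stages can be swapped, reordered, or tested in isolation, which is what makes the pipeline approach easier to scale.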

DSPy vs Other Prompting Approaches

Let’s compare DSPy with other popular prompting techniques to understand its true value.

Comparison Table

| Approach | Method | Limitation |
|---|---|---|
| Traditional Prompting | Manual input writing | Fragile and hard to scale |
| Few-Shot Prompting | Provide examples | Limited flexibility |
| Chain-of-Thought Prompting | Step-by-step reasoning | Still manual and complex |
| DSPy | Programmatic prompting | Learning curve; needs example data |

Unlike traditional methods, DSPy brings a software engineering mindset to AI. It uses modular design, automation, and systematic testing to build better systems.

This is why DSPy can be described as “software engineering for AI”. It moves prompting from an art toward a structured, reliable, and scalable process.
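For contrast, this is roughly what the hand-built approaches in the table look like in code. The examples and the reasoning cue are hard-coded strings that the developer has to maintain; `few_shot_prompt` is a hypothetical helper written for this illustration.

```python
def few_shot_prompt(examples: list[tuple[str, str]], question: str,
                    chain_of_thought: bool = False) -> str:
    """Hand-assemble a few-shot prompt, optionally asking for step-by-step reasoning."""
    blocks = [f"Q: {q}\nA: {a}" for q, a in examples]
    # A common step-by-step cue; the exact wording is a manual choice.
    suffix = "A: Let's think step by step." if chain_of_thought else "A:"
    blocks.append(f"Q: {question}\n{suffix}")
    return "\n\n".join(blocks)


demos = [("2 + 2?", "4"), ("3 + 5?", "8")]
print(few_shot_prompt(demos, "7 + 6?", chain_of_thought=True))
```

Which demonstrations to include and how to word the cue are exactly the decisions that a programmatic approach tries to hand over to an optimizer.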

Challenges & Limitations

While DSPy is powerful, it is not perfect. Like any advanced technology, it comes with its own challenges that developers should understand before using it.

One major challenge is the learning curve. Developers who are used to simple prompt writing may find it difficult at first. DSPy requires understanding of programming concepts, which can take time to learn.

Another limitation is its dependency on datasets. For optimization to work well, you need good quality training data. Without proper data, automated prompt tuning may not give the best results.

Also, DSPy is not always necessary for simple tasks. If your use case is small or straightforward, manual prompting might still be enough. Using DSPy in such cases can add unnecessary complexity.

Future of AI Development: Prompting as Programming

The future of AI is clearly moving toward programming-based approaches. Instead of focusing only on writing prompts, developers are now designing complete AI systems.

This shift includes building declarative AI pipelines, where tasks are defined at a high level and executed automatically. It makes AI development more structured and efficient.

Frameworks like DSPy are leading this transformation. They bring software engineering principles into AI, making systems more reliable and scalable.

As a result, the role of prompt engineers is evolving. Instead of just writing prompts, they are becoming AI system engineers who design, optimize, and manage entire AI workflows.

Best Practices for Using DSPy

To get the best results from DSPy, it is important to follow some proven best practices.

- Start with clear and well-defined signatures. This ensures your system understands the task properly and produces accurate outputs.

- Always use high-quality training data for optimization. Good data leads to better performance and more reliable results.

- Continuously evaluate your outputs. Regular testing helps identify issues early and improves system performance over time.

- Combine DSPy with Retrieval-Augmented Generation when answers depend on external data. Grounding responses in retrieved data improves accuracy and reduces hallucinations.

FAQs

What is declarative prompting?

Declarative prompting is an approach where you define the desired outcome instead of writing exact prompts. It focuses on what you want rather than how to say it, making AI systems more flexible and scalable.

How does DSPy optimize prompts?

DSPy uses automated prompt tuning to improve performance. It tests different prompt variations using training data and selects the best ones based on results.

Is DSPy better than prompt engineering?

Not always. DSPy is a more systematic way of doing prompt engineering: where traditional prompt engineering relies on manual effort, DSPy adds automation, scalability, and structured workflows. For small or simple tasks, manual prompting may still be enough.

Can beginners use DSPy?

Yes, beginners can use DSPy, but they may need some time to learn the basics. Understanding Python and simple programming concepts will make it much easier to get started.

What are prompt pipelines?

Prompt pipelines are multi-step workflows where different modules handle different tasks. For example: retrieve data, analyze it, generate output, and refine results. This makes AI systems more powerful and organized.

Sources:

- DSPy Introduction Paper / Research: https://arxiv.org/abs/2310.03714
- DSPy GitHub Repository: https://github.com/stanfordnlp/dspy
- DSPy Documentation (Stanford NLP): https://stanfordnlp.github.io/dspy/

Research & Academic Sources

- Prompt Programming & LLM Research (General Overview): https://arxiv.org/list/cs.CL/recent
- Stanford University NLP Research Page: https://nlp.stanford.edu/

Prompt Engineering & AI Concepts

- Chain-of-Thought Prompting Paper: https://arxiv.org/abs/2201.11903
- OpenAI Prompt Engineering Guide: https://platform.openai.com/docs/guides/prompt-engineering
- Retrieval-Augmented Generation Explained: https://www.pinecone.io/learn/retrieval-augmented-generation/