The story of artificial intelligence (AI) is not a simple, linear path of progress. Instead, it is a singular narrative in the history of science, defined by volatile cycles of theoretical breakthroughs, industrial euphoria, and deep disillusionment. For millennia, humans have dreamed of externalizing the mechanisms of thought, moving from ancient myths of bronze guardians to the multi-layered neural architectures that power our smartphones today.

In 2026, artificial intelligence has transitioned from a specialized academic discipline into a ubiquitous, “agentic” infrastructure. To understand where we are going, and whether a “third AI winter” lurks on the horizon, we must first understand how we arrived at this moment.

What is Artificial Intelligence?

Artificial Intelligence is a broad specialty within computer science concerned with creating systems that can perform tasks normally associated with human intelligence, such as reasoning, learning, and problem-solving. Unlike traditional programs, whose behavior changes only when a programmer rewrites them, many modern AI systems learn from large amounts of data and use that experience to improve future decisions.

Today, the field is generally categorized into several layers:

- Artificial Intelligence (AI): The umbrella term for machines performing tasks associated with human intelligence.

- Machine Learning (ML): A subset of AI where systems learn patterns from data rather than relying solely on hand-coded rules.

- Deep Learning: A further subset of ML that uses multi-layered artificial neural networks to learn highly complex representations.

- Generative AI: A modern category of AI that creates new content—text, images, audio, or video—by learning the underlying distribution of its training data.

Ancient Dreams and Mechanical Foundations (Pre-1950)

The conceptual roots of AI precede the computer by thousands of years. Ancient Greek mythology introduced archetypes of intelligent automata, such as Talos, a bronze guardian, and Galatea, a statue brought to life. By 400 BCE, records mention a mechanical pigeon capable of flight, and by 1495, Leonardo da Vinci had designed a mechanical knight.

The transition toward mathematical logic began in the 13th century with Ramon Llull, who invented the Ars Magna, a tool intended to combine concepts mechanically to produce new knowledge. In the 17th century, Gottfried Wilhelm Leibniz envisioned a universal symbolic language where disputes could be resolved simply by saying, “Let us calculate”.

By the 19th century, Charles Babbage and Ada Lovelace conceptualized the Analytical Engine. Lovelace famously recognized that such a machine could process more than just numbers: it could in principle manipulate symbols of any kind and, for example, compose elaborate pieces of music. This was an early vision of general-purpose computing and a conceptual precursor to AI.

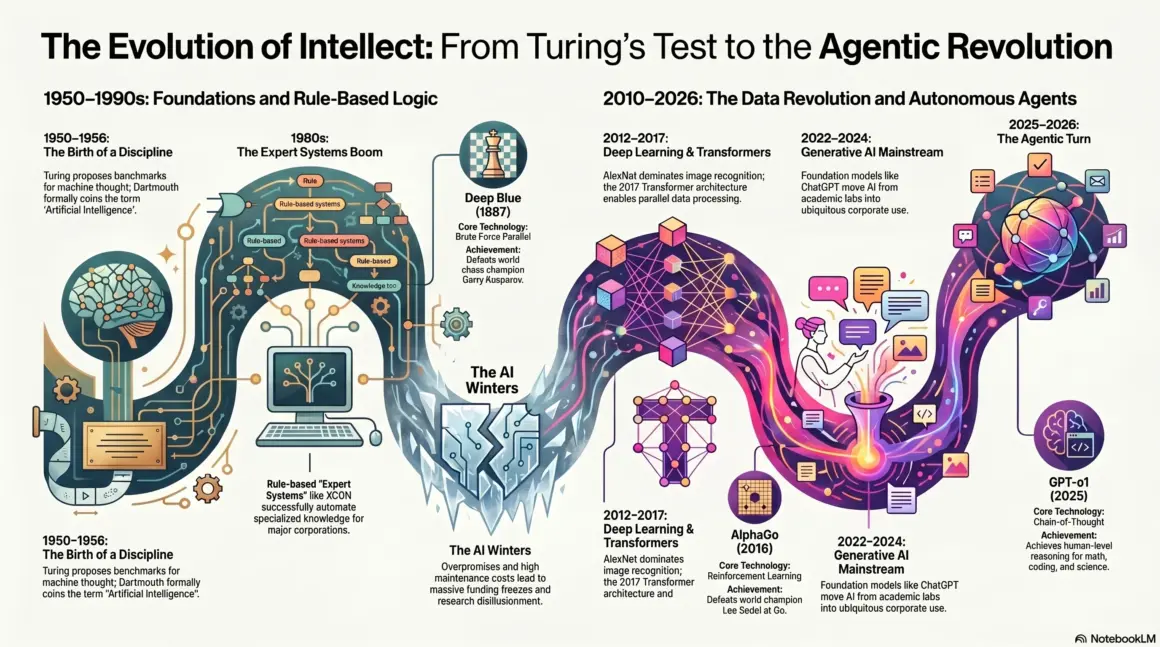

The Birth of a Discipline: The 1950s

The 1950s marked the era when AI became a formal science. Three pivotal events defined this decade:

1. Alan Turing and the “Imitation Game”

In 1950, British mathematician Alan Turing published his landmark paper, “Computing Machinery and Intelligence”. He posed the question, “Can machines think?” and proposed what he called the “imitation game”, now known as the Turing Test. He argued that if a human interrogator could not distinguish a machine’s responses from a human’s during a conversation, the machine should be considered “thinking”.

2. The Dartmouth Workshop (1956)

The term “Artificial Intelligence” was officially coined in 1955 by John McCarthy for a summer workshop at Dartmouth College in 1956. Attendees included future legends like Marvin Minsky, Claude Shannon, and Herbert Simon. They operated under the conjecture that “every aspect of learning or any other feature of intelligence can in principle be so precisely described that a machine can be made to simulate it”.

3. Early Machine Learning

During this time, Arthur Samuel developed a checkers-playing program that could learn from experience. In 1959, he coined the term “machine learning” while demonstrating that a computer could eventually play the game better than the person who programmed it.

The Golden Age of Symbolic AI (1960s–1970s)

Following Dartmouth, the field entered a period of rapid development known as the “Golden Era”. Most research focused on Symbolic AI (also called “GOFAI” or Good Old-Fashioned AI), which used logic and human-readable rules to solve problems.

- Logic Theorist (1956): Often described as the first program deliberately engineered to perform automated reasoning, it proved 38 theorems from Whitehead and Russell’s Principia Mathematica.

- ELIZA (1966): Created by Joseph Weizenbaum, ELIZA was the first chatbot. It used pattern-matching to mimic a psychotherapist.

- Shakey the Robot (1966–1972): The first mobile robot capable of reasoning about its own actions and navigating physical environments.

- The Perceptron (1958): Frank Rosenblatt built the first working implementation of a neural network. However, its limitations were famously critiqued by Minsky and Papert in 1969, which temporarily halted most neural network research.
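
To make the Minsky and Papert critique concrete, here is a minimal sketch of a single-layer perceptron in Python. It is an illustration under simplifying assumptions, not Rosenblatt’s original implementation: the function names, the learning rate, and the epoch count are all chosen for this example. The model learns AND, which is linearly separable, but no setting of its weights can classify XOR perfectly.

```python
def train_perceptron(samples, epochs=20, lr=1.0):
    """Train a two-input perceptron with the classic error-correction rule."""
    w = [0.0, 0.0]  # one weight per input
    b = 0.0         # bias term
    for _ in range(epochs):
        for (x1, x2), target in samples:
            pred = 1 if w[0] * x1 + w[1] * x2 + b > 0 else 0
            err = target - pred  # -1, 0, or +1
            # Nudge the weights only when the prediction was wrong.
            w[0] += lr * err * x1
            w[1] += lr * err * x2
            b += lr * err
    return w, b


def predict(w, b, x):
    """Threshold the weighted sum of the inputs."""
    return 1 if w[0] * x[0] + w[1] * x[1] + b > 0 else 0


def accuracy(samples, w, b):
    """Fraction of samples the perceptron classifies correctly."""
    return sum(predict(w, b, x) == t for x, t in samples) / len(samples)


# Truth tables used as tiny training sets (illustrative data).
AND = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
XOR = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]

w_and, b_and = train_perceptron(AND)
w_xor, b_xor = train_perceptron(XOR)

print("AND accuracy:", accuracy(AND, w_and, b_and))
print("XOR accuracy:", accuracy(XOR, w_xor, b_xor))
```

A single straight line cannot separate the XOR cases, so the second accuracy always stays below 100 percent no matter how long training runs. Multi-layer networks later removed this limitation.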

The Chilling Silence: The AI Winters

The history of AI is haunted by two major “winters”—periods where funding and interest collapsed because the technology failed to live up to its own hype.

The First AI Winter (1974–1980)

Triggered by the Lighthill Report (1973) in the UK and DARPA’s frustration in the US, this period began when researchers realized that simple logic could not solve “real-world” problems because of combinatorial explosion: the number of possibilities a program must examine grows exponentially as problems get larger. Funding for general research was slashed, and many labs closed.

The Second AI Winter (1987–1993)

This winter followed a brief boom in Expert Systems (programs that used “if-then” rules to mimic specialists). These systems proved too brittle and expensive to maintain. Simultaneously, the market for specialized hardware like Lisp machines collapsed when general-purpose PCs became powerful enough to run similar software.
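
The “if-then” style of an expert system can be sketched in a few lines of Python. This is a toy forward-chaining loop, not the design of any historical system, and the rules and fact names are hypothetical, chosen only for illustration. It also hints at the brittleness problem: when the facts fall outside what the rules anticipate, the system derives nothing.

```python
def forward_chain(facts, rules):
    """Apply if-then rules repeatedly until no new fact can be derived."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for conditions, conclusion in rules:
            # Fire a rule when all of its conditions are already known.
            if conclusion not in known and set(conditions) <= known:
                known.add(conclusion)
                changed = True
    return known


# Hypothetical rules: (conditions, conclusion). Not real medical guidance.
RULES = [
    (("fever", "cough"), "possible_flu"),
    (("possible_flu", "short_of_breath"), "refer_to_doctor"),
]

# Facts the rules were written for: both rules fire in sequence.
print(forward_chain({"fever", "cough", "short_of_breath"}, RULES))

# Facts the rule authors did not anticipate: nothing new is derived.
print(forward_chain({"fever", "sore_throat"}, RULES))
```

Covering the second case means a human expert has to write yet another rule, which is one reason large rule bases became expensive to maintain.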

The Brute Force Triumph and the Statistical Turn (1994–2011)

As AI emerged from its second winter, it moved away from grand promises and toward specific, data-driven solutions.

- Deep Blue (1997): IBM’s chess-playing computer defeated world champion Garry Kasparov. It didn’t “think” like a human; it used sheer brute force, evaluating 200 million positions per second.

- Kismet (2000): A “social robot” at MIT capable of recognizing and mimicking human emotions through facial expressions.

- IBM Watson (2011): In a landmark for Natural Language Processing (NLP), Watson defeated the greatest human champions on the quiz show Jeopardy!, analyzing 15 terabytes of unstructured data to answer clues that relied on puns and wordplay.
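
Brute-force game search, the idea behind Deep Blue’s win, can be illustrated with a toy example. The sketch below runs plain minimax on a small Nim-style game, an assumption made for brevity. It is not chess and not Deep Blue’s actual code, which relied on pruned search, a chess-specific evaluation function, and custom hardware. The counter shows how quickly the number of visited positions grows with the size of the game.

```python
def minimax(stones, maximizing, counter):
    """Exhaustively search a toy game: players alternately take 1-3 stones,
    and whoever takes the last stone wins.

    Returns +1 if the maximizing player wins with best play, else -1.
    counter is a one-element list used to count visited positions.
    """
    counter[0] += 1
    if stones == 0:
        # The previous player took the last stone, so the player to move lost.
        return -1 if maximizing else 1
    scores = [
        minimax(stones - take, not maximizing, counter)
        for take in (1, 2, 3)
        if take <= stones
    ]
    return max(scores) if maximizing else min(scores)


for n in (4, 5, 10, 15):
    counter = [0]
    result = minimax(n, True, counter)
    print(f"stones={n:2d}  first player wins={result == 1}  positions={counter[0]}")
```

Nothing here resembles human intuition: the program simply tries every line of play. The same growth in visited positions is the combinatorial explosion that stalled early symbolic AI on larger problems.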

The Deep Learning Revolution (2012–2017)

The modern era began in earnest with the convergence of three factors: big data, powerful GPUs, and refined algorithms.

The ImageNet Moment (2012)

In 2012, a Convolutional Neural Network (CNN) called AlexNet won the ImageNet Large Scale Visual Recognition Challenge by a dominant margin. This proved that deep learning was not a “pipedream” but a practical tool for computer vision. Over the next few years, almost all other approaches to image recognition were abandoned in favor of deep learning.

AlphaGo (2016)

Developed by Google DeepMind, AlphaGo defeated one of the world’s top Go players, Lee Sedol. Because Go is “a googol times more complex than chess,” this was considered a grand challenge for AI. AlphaGo first learned from records of human expert games and then improved through reinforcement learning, playing millions of games against itself.

The Transformer Revolution and Generative AI (2017–2023)

In 2017, Google researchers published “Attention Is All You Need,” introducing the Transformer architecture. Unlike earlier recurrent models, which processed text one word at a time, Transformers use “attention mechanisms” to relate every position in a sequence to every other position, so entire sequences can be processed in parallel.
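
The core attention computation is compact enough to sketch in plain Python. The example below implements scaled dot-product attention, softmax(QKᵀ / √d) V, under simplifying assumptions: the three token vectors are made up, and the same vectors serve as queries, keys, and values, whereas a real Transformer derives each from learned projections. The explicit loops are for readability only; production implementations express the same arithmetic as matrix multiplications, which is what makes parallel processing possible.

```python
import math


def softmax(xs):
    """Turn raw scores into positive weights that sum to 1."""
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors."""
    d = len(keys[0])
    outputs = []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d).
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys
        ]
        weights = softmax(scores)
        # The output is a weighted average of the value vectors.
        out = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(out)
    return outputs


# Three made-up 2-dimensional token vectors (illustrative only).
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
result = attention(tokens, tokens, tokens)

for row in result:
    print([round(x, 3) for x in row])
```

Each output row depends on all three input tokens at once, and no row depends on another row having been computed first.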

This led to the creation of Large Language Models (LLMs):

- GPT-3 (2020): A model with 175 billion parameters capable of generating human-like text and coding without specific training for those tasks.

- ChatGPT (2022): Released by OpenAI, it reached 100 million users in just two months, bringing conversational AI into the mainstream.

- GPT-4 (2023): A multimodal model capable of processing both text and images, passing professional exams like the bar exam with high scores.

The Frontier: Agentic AI and Governance (2024–2026)

By early 2026, the landscape has shifted from generative tools to Autonomous Agentic AI.

Key Developments in 2024–2026:

- Reasoning Models: OpenAI’s “o-series” (reportedly code-named Strawberry during development) introduced models with “built-in thinking,” capable of multi-step logical reasoning and complex math.

- Multimodal Dominance: Models like GPT-4o and Gemini 1.5 process text, audio, and visual data simultaneously in real-time.

- Task Execution (Agents): AI is evolving from answering questions to taking actions—managing business workflows and interacting with operating systems as “personal daemons”.

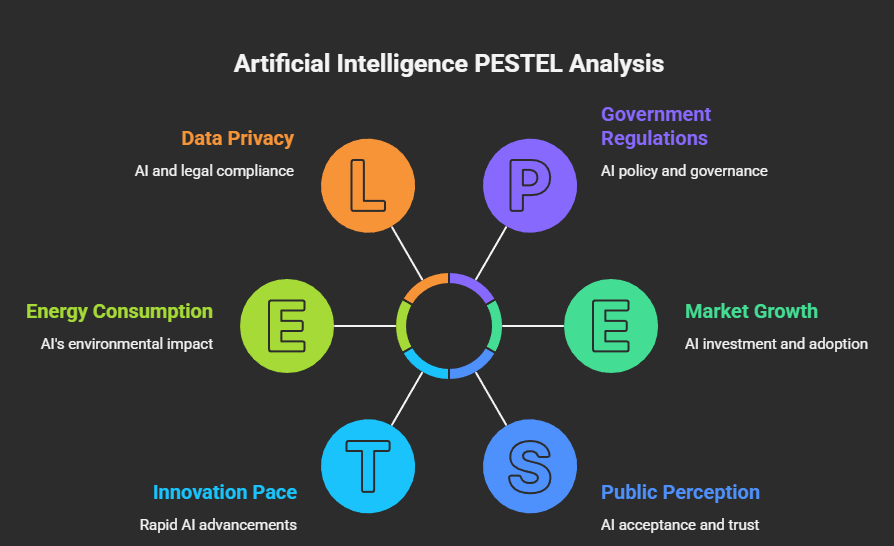

- Governance: The passing of the EU AI Act in 2024 set the tone for global regulation, categorizing AI systems by risk level and requiring transparency for outputs.

Key Comparisons: Symbolic AI vs. Connectionism

The history of AI is essentially a struggle between two philosophies:

| Feature | Symbolic AI (Top-Down) | Connectionism/Machine Learning (Bottom-Up) |
|---|---|---|
| Method | Logic, rules, and human-readable symbols. | Neural networks mimicking brain-like structures. |
| Strengths | Transparent, interpretable, and good at structured logic. | Flexible, handles messy data, excels at perception (vision/audio). |
| Weaknesses | “Brittle”—fails when rules don’t cover a situation. | “Opaque”—hard to explain why a decision was made (black box). |
| Era | 1950s–1980s. | 2012–Present. |

Current research is moving toward Neuro-symbolic AI, which attempts to integrate both: using neural networks for perception (System 1) and symbolic logic for planning and deduction (System 2).

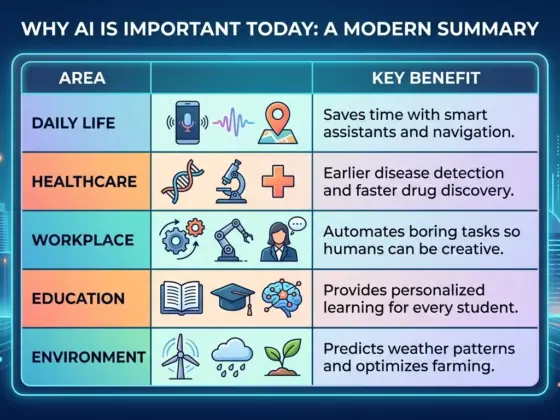

Advantages and Real-World Applications

AI’s impact is no longer theoretical; it is foundational infrastructure, comparable to electricity.

Core Advantages:

- Efficiency: Processing data at superhuman speeds (e.g., Deep Blue evaluating 200 million positions per second).

- Scalability: Automating expert knowledge across global operations (e.g., XCON saving millions for DEC).

- Discovery: Solving problems humans couldn’t, such as AlphaFold predicting the three-dimensional structures of proteins.

Modern Applications:

- Healthcare: AI-driven diagnostic tools and drug discovery.

- Logistics: Strategic planning and autonomous supply chain management.

- Creativity: Photorealistic video generation (e.g., Sora) and cinematic audio.

- Business: Real-time customer service agents and automated coding.

Practical Insights for the Future

As an educator, I often see organizations treat AI as a shortcut. However, history teaches us that overpromising and expecting immediate ROI is the fastest way to disappointment.

- AI Literacy is Mandatory: Every employee needs to understand what AI can and cannot do in their specific context.

- Focus on Outcomes, Not Tools: Don’t buy a platform before defining a clear business problem it needs to solve.

- Governance is a Priority: Treat AI trust as a requirement. If you can’t explain or monitor it, you can’t safely scale it.

Frequently Asked Questions (FAQs)

Was AI invented in 1956?

Not exactly. The term was coined in 1955, in the proposal for the 1956 Dartmouth workshop, but foundational ideas in logic and mechanical computation had existed for centuries, and Alan Turing’s seminal paper was published in 1950.

What caused the AI winters?

Winters occurred when the technology could not deliver on the inflated expectations created by researchers and the media. Fundamental technical barriers like “combinatorial explosion” and hardware limitations played major roles.

Is today’s AI “General Intelligence” (AGI)?

No. Most modern AI is “narrow”—it excels at specific tasks (like writing code or recognizing images) but lacks the broad, flexible understanding and consciousness of a human.

What is the biggest lesson from AI history?

Progress is not linear. Breakthroughs happen when strong ideas meet practical enablers: massive data, high compute power, and real-world deployment.

Conclusion

The journey from the “mechanical man” of myth to the “agentic assistant” of 2026 has been defined by a constant struggle between precision and adaptability. While the current AI boom has stronger foundations than previous cycles—due to deep economic integration and unprecedented data—we must remain vigilant.

The quest for Artificial General Intelligence (AGI) is no longer a distant philosophical dream but an unfolding technical reality. As we move forward, the focus must shift from merely building smarter machines to ensuring those machines remain aligned with human purpose and safety. History shows us that while the “winters” are cold, the “springs” of AI have the power to fundamentally reshape human civilization.
