If you feel like you are swimming in a “sea of acronyms,” you aren’t alone. In the modern business world, terms like Artificial Intelligence (AI), Machine Learning (ML), and Deep Learning (DL) have evolved from technical jargon to boardroom essentials. While these terms are often used interchangeably, they represent different layers of technology that are shaping our future.

Think of these technologies as a set of nested, concentric circles. Artificial Intelligence is the largest circle, representing the overarching goal of making machines smart. Inside it sits Machine Learning, one of the main methods for achieving that goal, in which systems learn from data. The smallest circle is Deep Learning, a specialized subfield of machine learning that uses multi-layered neural networks loosely inspired by the human brain.

What is Artificial Intelligence (AI)?

Artificial Intelligence is the broadest category in this family. At its core, AI refers to any system or process that mimics human cognitive functions, such as learning, reasoning, problem-solving, and perception. It is a multidisciplinary field drawing from computer science, mathematics, psychology, and neuroscience.

In the past, AI was often “reactive,” meaning it relied on a limited set of pre-programmed rules. For example, the famous chess-playing computer Deep Blue beat world champion Garry Kasparov in 1997 by searching through enormous numbers of possible moves and scoring them with hand-tuned rules, but it couldn’t “learn” or improve on its own without human updates.

The Three Main Categories of AI

To understand where we are today, it helps to look at the three levels of AI capability:

- Artificial Narrow Intelligence (ANI): Also known as “Weak AI,” this is the only type of AI that currently exists. ANI is designed to handle specific tasks, such as winning a game of chess, identifying faces in photos, or powering virtual assistants like Siri and Alexa.

- Artificial General Intelligence (AGI): This is “Strong AI” and remains theoretical. AGI would perform on par with a human across any intellectual task, including subtler skills such as interpreting tone and emotion.

- Artificial Super Intelligence (ASI): This is a hypothetical level of intelligence that would surpass human ability in every way. While research is ongoing, ASI does not yet exist and often raises ethical and existential questions.

What is Machine Learning (ML)?

Machine Learning is a subset of AI that provides systems the ability to automatically learn and improve from experience without being explicitly programmed. Instead of a human writing every single rule, we feed the machine large amounts of data, and it uses algorithms to find patterns and make predictions.

In general, the more relevant data a model is trained on, the better it becomes at its task. This is why your Spotify or Netflix recommendations tend to get more accurate the more you use the service: the system keeps updating its picture of your preferences.

The Four Primary Types of Machine Learning

Experts generally break ML down into four main types:

- Supervised Learning: This uses labeled data to teach the algorithm how to predict outcomes. For example, if you want a machine to predict housing prices, you give it examples in which the features (like square footage) are paired with the known final price.

- Unsupervised Learning: Here, the algorithm explores unlabeled data to find hidden patterns or groupings. A business might use this to segment customers into different groups based on similar buying behaviors without knowing those groups existed beforehand.

- Semi-Supervised Learning: This is a hybrid approach that uses a small amount of labeled data combined with a large amount of unlabeled data. It is particularly useful when labeling data is too time-consuming or expensive for human experts.

- Reinforcement Learning: This is a trial-and-error method. The AI agent takes an action in an environment and receives either a “reward” or a “penalty.” Over time, it optimizes its strategy to maximize its total rewards. This is how AI has learned to master complex games like Go, and it is one of the approaches being explored for autonomous driving.
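
The supervised case from the list above can be sketched in a few lines. This is a minimal illustration, not a production model: the four (square footage, price) pairs are made-up numbers, and the helper names `fit_line` and `predict` are hypothetical. It fits a straight line with ordinary least squares and then estimates the price of a house the model has not seen.

```python
# Hypothetical labeled examples: (square footage, known sale price).
# The prices are invented so that price = 100 * sqft + 50,000 exactly.
training_data = [
    (1000, 150_000),
    (1500, 200_000),
    (2000, 250_000),
    (2500, 300_000),
]


def fit_line(examples):
    """Ordinary least squares for a single feature.

    Returns (slope, intercept) of the best-fitting straight line.
    """
    n = len(examples)
    mean_x = sum(x for x, _ in examples) / n
    mean_y = sum(y for _, y in examples) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in examples)
    variance = sum((x - mean_x) ** 2 for x, _ in examples)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept


def predict(model, sqft):
    """Apply the learned line to a new, unlabeled input."""
    slope, intercept = model
    return slope * sqft + intercept


model = fit_line(training_data)      # "training" on labeled data
estimate = predict(model, 1800)      # prediction for an unseen house
print(f"Estimated price for 1,800 sq ft: ${estimate:,.0f}")
# prints: Estimated price for 1,800 sq ft: $230,000
```

Nobody wrote a pricing rule here; the slope and intercept were derived from the labeled examples, which is the core idea of supervised learning. Real housing models would use many more features and far noisier data.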

What is Deep Learning (DL)?

Deep Learning is a subfield of machine learning that has driven recent breakthroughs in perception and pattern recognition. What makes it “deep” is the use of artificial neural networks with many layers. A common rule of thumb counts a network as deep when it has more than three layers, including the input and output layers.

While classic machine learning often requires a human expert to manually identify important features in the data (a process called feature extraction), deep learning does this automatically. For instance, if you want a machine to tell the difference between a picture of a pizza and a taco, a classic ML model might need a human to explain that “crust” is a distinguishing feature. A deep learning model can ingest the raw images and figure that out on its own.

How Neural Networks Power Deep Learning

Neural networks are inspired by the human brain’s interconnected neurons. They consist of:

- Input Layer: Where the raw data enters the system.

- Hidden Layers: Where the actual “learning” and complex calculations happen. The “depth” of these layers is what distinguishes a deep learning algorithm.

- Output Layer: Where the final decision or prediction is made.

Each connection into a “node” carries a weight, and each node has a threshold (often expressed as a bias). The node computes a weighted sum of its inputs; if that sum is high enough, the node activates and passes its output to the next layer in the network.
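
To make the weight-and-threshold idea concrete, here is a small sketch of a forward pass through a network with one hidden layer. The weights and thresholds are hand-picked for illustration (a trained network would learn them from data), and the function names are hypothetical. Modern networks also tend to use smooth activation functions rather than the hard on/off step shown here.

```python
def node_output(inputs, weights, threshold):
    """Fire (return 1) when the weighted sum of inputs reaches the threshold."""
    weighted_sum = sum(x * w for x, w in zip(inputs, weights))
    return 1 if weighted_sum >= threshold else 0


def layer_output(inputs, layer):
    """A layer is a list of (weights, threshold) pairs, one pair per node."""
    return [node_output(inputs, weights, threshold) for weights, threshold in layer]


# Hand-picked (not learned) parameters that make the network compute XOR.
hidden_layer = [
    ([1, 1], 1),   # fires if at least one input is on (OR)
    ([1, 1], 2),   # fires only if both inputs are on (AND)
]
output_layer = [
    ([1, -1], 1),  # fires for "OR but not AND"
]


def forward(inputs):
    """Input layer -> hidden layer -> output layer."""
    hidden = layer_output(inputs, hidden_layer)
    return layer_output(hidden, output_layer)[0]


for a in (0, 1):
    for b in (0, 1):
        print(a, b, "->", forward([a, b]))
# prints:
# 0 0 -> 0
# 0 1 -> 1
# 1 0 -> 1
# 1 1 -> 0
```

XOR is a useful toy case because no single threshold node can compute it; the hidden layer is what makes it possible.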

Major Deep Learning Models

- Convolutional Neural Networks (CNNs): These are the gold standard for image recognition and classification. They excel at detecting edges, curves, and abstract features in visual data.

- Recurrent Neural Networks (RNNs): These are designed for sequential data, such as language and time series. They “remember” previous inputs, which makes them well suited to tasks such as speech-to-text and chatbots.
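
As a rough illustration of how a CNN layer picks up edges, the sketch below slides a small filter over a toy image. The image, the filter values, and the function name `convolve2d` are assumptions made for this example; in a real CNN the filter values are learned during training rather than written by hand.

```python
def convolve2d(image, kernel):
    """Slide the kernel over the image (no padding, stride 1).

    Each output value is the weighted sum of the image patch under the
    kernel. Like most deep learning libraries, this does not flip the
    kernel, so strictly speaking it is a cross-correlation.
    """
    k_rows, k_cols = len(kernel), len(kernel[0])
    out_rows = len(image) - k_rows + 1
    out_cols = len(image[0]) - k_cols + 1
    output = []
    for r in range(out_rows):
        row = []
        for c in range(out_cols):
            total = 0
            for i in range(k_rows):
                for j in range(k_cols):
                    total += image[r + i][c + j] * kernel[i][j]
            row.append(total)
        output.append(row)
    return output


# Toy 4x6 grayscale image: dark (0) on the left, bright (1) on the right.
image = [
    [0, 0, 0, 1, 1, 1],
    [0, 0, 0, 1, 1, 1],
    [0, 0, 0, 1, 1, 1],
    [0, 0, 0, 1, 1, 1],
]

# Hand-written vertical-edge filter: responds where brightness
# increases from left to right.
kernel = [
    [-1, 0, 1],
    [-1, 0, 1],
    [-1, 0, 1],
]

feature_map = convolve2d(image, kernel)
for row in feature_map:
    print(row)
# prints:
# [0, 3, 3, 0]
# [0, 3, 3, 0]
```

The large values in the middle of the feature map mark where the dark-to-bright edge sits; the flat regions on either side produce zeros.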

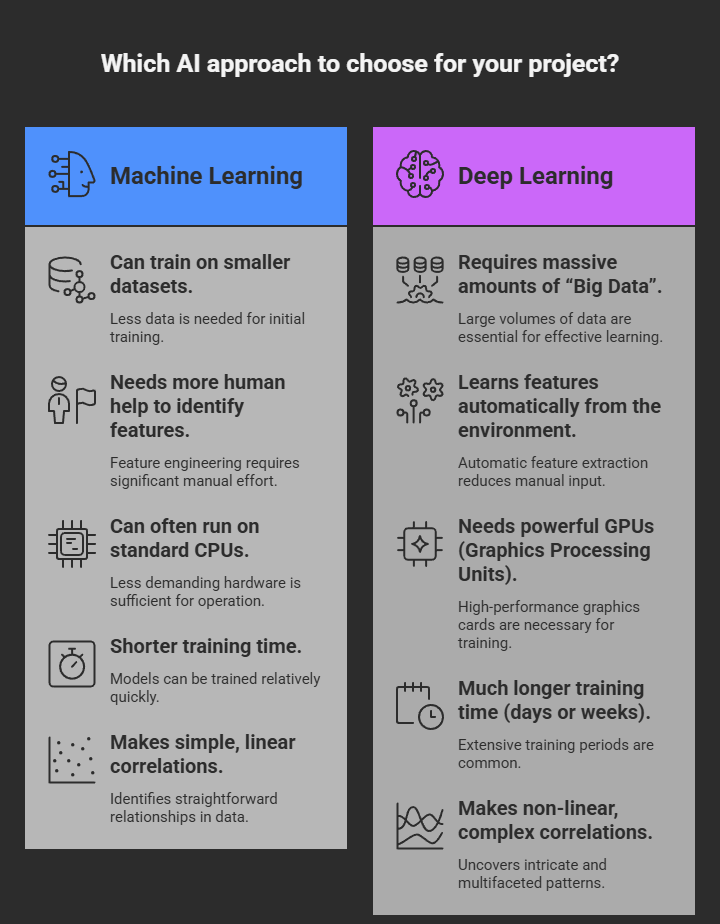

Side-by-Side Comparison: ML vs. DL

To help you distinguish between the two subsets, here is a quick comparison:

| Feature | Machine Learning (ML) | Deep Learning (DL) |
|---|---|---|
| Data Requirements | Can train on smaller datasets. | Typically requires very large datasets (“Big Data”). |
| Human Intervention | Needs more human help to identify features. | Learns features automatically from the raw data. |
| Hardware | Can often run on standard CPUs. | Usually needs powerful GPUs (Graphics Processing Units). |
| Training Time | Shorter training time. | Much longer training time (days or weeks). |
| Complexity | Often captures simpler, sometimes linear, relationships. | Captures highly non-linear, complex relationships. |

Real-World Applications

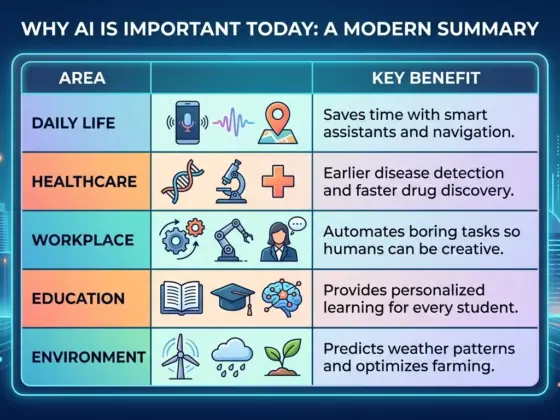

These technologies are no longer just for scientists; they are integrated into our daily lives and major industries.

1. Healthcare Diagnostics

Deep learning has transformed medical imaging. AI systems can reportedly analyze chest X-rays or MRI scans in roughly 45 seconds, compared to 15-20 minutes for a human radiologist, with some models achieving 94% sensitivity in detecting diseases like pneumonia.

2. Finance and Fraud Detection

Financial institutions use machine learning to monitor millions of transactions in real-time. Algorithms can flag suspicious activity within milliseconds by analyzing purchasing patterns, saving banks billions of dollars annually.
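
As a deliberately simplified illustration of flagging activity that breaks a purchasing pattern, the sketch below compares a new transaction with a customer's past amounts. Production fraud systems typically rely on trained models over many signals; the single-feature rule, the function name `is_suspicious`, the cutoff of 3.0 standard deviations, and the purchase amounts are all assumptions made for this example.

```python
from statistics import mean, stdev


def is_suspicious(history, amount, z_cutoff=3.0):
    """Flag an amount that is more than z_cutoff standard deviations
    away from the customer's past average (needs at least 2 past amounts)."""
    avg = mean(history)
    spread = stdev(history)
    if spread == 0:
        # Every past purchase was identical, so any difference stands out.
        return amount != avg
    return abs(amount - avg) / spread > z_cutoff


# Hypothetical purchase history for one customer, in dollars.
past_purchases = [42.0, 38.5, 51.0, 45.25, 40.0, 47.75]

print(is_suspicious(past_purchases, 44.0))   # typical amount
print(is_suspicious(past_purchases, 900.0))  # far outside the usual range
# prints:
# False
# True
```

A check like this is cheap enough to run on every transaction, which hints at why simple statistical signals are often combined with heavier models in real-time pipelines.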

3. Transportation and Autonomous Vehicles

Self-driving cars rely on computer vision, which today is largely powered by deep learning, to process reportedly up to 2,000 frames per second from multiple cameras. This allows them to detect pedestrians, traffic signs, and obstacles in near real time.

4. Smart Assistants and Recommendations

Virtual agents like Siri, Alexa, and Google Assistant use natural language processing (NLP) to understand and respond to human speech. Meanwhile, recommendation engines are widely reported to drive about 35% of Amazon’s sales and 75% of Netflix views.

Ethical and Societal Challenges

As these systems become more powerful, they also introduce significant challenges.

- Algorithmic Bias: If an AI is trained on biased data, it will produce biased results. For example, some facial recognition systems have shown significantly lower accuracy for certain demographics.

- The “Black Box” Problem: Deep neural networks are so complex that it can be difficult for humans to understand why they made a specific decision. Ensuring explainability is now a major focus for researchers and policymakers.

- Data Privacy: AI requires massive amounts of data, which raises concerns about how that information is collected and protected.

Frequently Asked Questions (FAQs)

Q: Do I need to be a programmer to use AI? A: Not necessarily. Many “no-code” platforms now allow businesses to deploy AI and ML models without writing a single line of code.

Q: Is Machine Learning a good career? A: Yes. Demand for ML engineers is growing rapidly, with significant year-on-year increases in hiring reported globally.

Q: Will AI replace all human jobs? A: While some tasks will be automated, many experts believe the future lies in human-AI collaboration, where AI tools boost productivity rather than simply replacing workers.