Artificial Intelligence (AI) is no longer a futuristic concept from a Hollywood movie; it is part of our daily lives, from the way we commute to how we choose what to watch on a Friday night. Whether you are a 10th-grade student curious about technology or a business professional looking to stay ahead, understanding how AI works is essential.

To understand what AI can and cannot do, we need to look at how these systems are classified. Experts often sort AI by capability into “Narrow” or “General” AI, but another useful way to group systems is by their functionality. This classification focuses on how an AI processes data, whether it has memory, and how it interacts with the human world.

In this guide, we will break down the four primary types of AI based on functionality: Reactive Machines, Limited Memory, Theory of Mind, and Self-Aware AI.

What Does “Functionality-Based” AI Mean?

Before we dive into the specific types, let’s define the core concept. Functionality-based classification sorts AI systems into stages according to how they interact with their environment. It asks:

- Does the machine remember the past?

- Does it only react to what is happening right now?

- Can it understand human emotions?

- Is it aware of itself?

Currently, we live in a world dominated by the first two types, while the last two remain largely theoretical or in early development.

1. Reactive Machines: The Simplest Level

Reactive machines are the most basic form of AI. As the name suggests, they simply react to immediate inputs. They have no memory and cannot use past experiences to inform current decisions.

How They Work

Think of a reactive machine like a calculator or a simple spam filter. When you give it a specific input, it follows pre-defined rules to give you a specific output. It doesn’t “know” that it helped you yesterday; it starts from scratch every single time.

Key Characteristics:

- No Memory: They cannot store data for future use.

- Task-Specific: They are built for one job and one job only.

- Consistent: An identical input will always produce the same output.
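For readers who code, the characteristics above can be sketched in a few lines of Python. This is a minimal illustration, not how any real product works: the keyword list and the `is_spam` function are hypothetical. The function looks only at the message in front of it, stores nothing between calls, and so gives the same answer every time for the same input.

```python
# Hypothetical rule list for illustration; real spam filters are far more elaborate.
SPAM_KEYWORDS = ("free money", "winner", "click here")


def is_spam(message: str) -> bool:
    """Flag a message if it contains any fixed keyword.

    The function reads only its input and a constant rule list. It keeps no
    record of earlier calls, so identical messages always get identical answers.
    """
    text = message.lower()
    return any(keyword in text for keyword in SPAM_KEYWORDS)


if __name__ == "__main__":
    print(is_spam("You are a WINNER, click here!"))  # True
    print(is_spam("Lunch at noon?"))                 # False
    print(is_spam("You are a WINNER, click here!"))  # True again: no memory, same output
```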

Real-World Examples:

- IBM’s Deep Blue: This famous computer defeated world chess champion Garry Kasparov in 1997. It evaluated the pieces on the board and searched ahead to choose the best move, but it kept no memory of past games to learn from.

- Google DeepMind’s AlphaGo: Although far more advanced, and trained on huge numbers of Go games, it is often grouped with reactive systems because during play it picks each move by analyzing the current board state.

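- Simple Recommendation Features: A basic “more like this” feature that suggests titles similar to the one you are watching now, without building a long-term profile of you, works reactively. (Full services such as Netflix also use your viewing history, which moves them toward Limited Memory.)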
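Deep Blue chose moves by searching ahead from the current position, using a refined form of game-tree search (alpha-beta minimax). The sketch below applies plain minimax to a much smaller, hypothetical game so it fits on one screen: players take turns removing 1, 2, or 3 stones from a pile, and whoever takes the last stone wins. The game, `minimax`, and `choose_move` are illustrative assumptions, not Deep Blue’s code. Notice that `choose_move` looks only at the current pile; it remembers nothing from earlier games.

```python
# Toy game (hypothetical example): remove 1, 2, or 3 stones; taking the last stone wins.
MOVES = (1, 2, 3)


def minimax(stones: int, maximizing: bool) -> int:
    """Score a position for our player: +1 if we can force a win, -1 otherwise.

    `maximizing` is True when it is our turn and False when it is the opponent's.
    """
    if stones == 0:
        # The player who just moved took the last stone and won.
        return -1 if maximizing else 1
    scores = [minimax(stones - m, not maximizing) for m in MOVES if m <= stones]
    return max(scores) if maximizing else min(scores)


def choose_move(stones: int) -> int:
    """Pick the move with the best score, using only the current pile size."""
    legal = [m for m in MOVES if m <= stones]
    # After our move it is the opponent's turn, hence maximizing=False.
    return max(legal, key=lambda m: minimax(stones - m, maximizing=False))


if __name__ == "__main__":
    print(choose_move(5))  # 1: leaves 4 stones, a losing pile for the opponent
    print(choose_move(7))  # 3: also leaves 4 stones
```

Because the search starts fresh from the current position every time, the same pile always produces the same move. The full search grows very quickly with pile size, which is why real chess engines add pruning and a scoring function for positions they cannot search to the end.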

2. Limited Memory AI: Learning from the Short-Term

Limited Memory AI is the next step in evolution and represents the majority of AI we use today. Unlike reactive machines, these systems can look into the past—but only for a short period.

How They Work

These systems store recent data and use it as a reference for making better decisions. They are often powered by Deep Learning and neural networks, which allow them to “get smarter” the more data they are trained on.
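The idea of keeping recent data as a reference can be sketched with a fixed-size window: new observations push out the oldest ones. This is a simplified, hypothetical example (the `RecentMemory` class, the readings, and the tolerance are all made up) that shows only the short-term memory part; it leaves out the trained neural network most real systems also rely on.

```python
from collections import deque


class RecentMemory:
    """Remember only the last `size` observations (hypothetical helper)."""

    def __init__(self, size: int) -> None:
        # A deque with maxlen silently drops its oldest item when full.
        self.window: deque = deque(maxlen=size)

    def observe(self, value: float) -> None:
        self.window.append(value)

    def average(self) -> float:
        return sum(self.window) / len(self.window)

    def is_unusual(self, current: float, tolerance: float) -> bool:
        """Decide using the present reading plus the remembered recent ones."""
        if not self.window:
            return False  # nothing remembered yet, so nothing to compare against
        return abs(current - self.average()) > tolerance


if __name__ == "__main__":
    memory = RecentMemory(size=3)
    for reading in [10, 11, 12, 13]:
        memory.observe(reading)
    print(list(memory.window))                 # [11, 12, 13]: the first reading is gone
    print(memory.is_unusual(30, tolerance=5))  # True: 30 is far from the average of 12
    print(memory.is_unusual(13, tolerance=5))  # False
```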

Key Characteristics:

- Short-Term Storage: They retain data for a limited duration to improve performance.

- Decision Context: They use a mix of present data and recent history to act.

- Predictive Power: They can forecast outcomes, like predicting the next word in a sentence.
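Next-word prediction can be illustrated with a very small model that counts which word tends to follow which. This bigram counter is a deliberately simple stand-in: assistants like ChatGPT use large neural networks that look at much longer stretches of text, while this sketch only remembers the single previous word. The training sentence and the function names are hypothetical.

```python
from collections import Counter, defaultdict
from typing import Dict, Optional


def train_bigrams(text: str) -> Dict[str, Counter]:
    """For each word, count the words that followed it in the training text."""
    words = text.lower().split()
    followers: Dict[str, Counter] = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        followers[current][following] += 1
    return followers


def predict_next(followers: Dict[str, Counter], word: str) -> Optional[str]:
    """Return the most frequent follower of `word`, or None if it was never seen."""
    counts = followers.get(word.lower())  # .get does not add missing keys
    if not counts:
        return None
    return counts.most_common(1)[0][0]


if __name__ == "__main__":
    model = train_bigrams("the cat sat on the mat the cat ran")
    print(predict_next(model, "the"))  # cat  ("cat" followed "the" twice, "mat" once)
    print(predict_next(model, "dog"))  # None (never seen in training)
```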

Real-World Examples:

- Self-Driving Cars: These are the gold standard for Limited Memory AI. They observe the speed and direction of nearby cars, recognize traffic lights, and track lane markings to make safe turns.

- Virtual Assistants (Siri, Alexa, ChatGPT): These tools remember the previous parts of your conversation to maintain context, so you don’t have to repeat yourself every time you ask a follow-up question.

- Chatbots: Many modern customer service bots use past interactions within a session to provide relevant answers.
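How a chatbot “remembers” a session can be sketched as a budget: only the most recent messages that fit are passed back to the model, so very old messages eventually drop out. In this hypothetical example the budget is counted in words and `build_context` is a made-up helper; real systems count tokens and use their own limits, which are not shown here.

```python
from typing import List


def build_context(turns: List[str], max_words: int) -> List[str]:
    """Keep the most recent turns whose combined word count fits in max_words.

    Older turns that do not fit are dropped, which is why a very long chat can
    lose track of its beginning. Word counts stand in for real token counts.
    """
    kept: List[str] = []
    used = 0
    for turn in reversed(turns):  # walk from newest to oldest
        length = len(turn.split())
        if used + length > max_words:
            break  # stop at the first turn that no longer fits
        kept.append(turn)
        used += length
    kept.reverse()  # restore chronological order
    return kept


if __name__ == "__main__":
    history = [
        "User: My order number is 4521.",    # 6 words
        "Bot: Thanks, I found order 4521.",  # 6 words
        "User: When will it arrive?",        # 5 words
    ]
    print(build_context(history, max_words=12))  # the last two turns
    print(build_context(history, max_words=5))   # only the newest turn
```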

3. Theory of Mind AI: The Social Frontier

This is where AI starts to get “human.” Theory of Mind AI is currently in the research and development phase. The goal is to create machines that understand that humans (and other entities) have their own thoughts, beliefs, and emotions.

The Big Leap

Current AI, like ChatGPT, can simulate empathy if you tell it you’re sad, but it doesn’t actually “understand” your feelings. A Theory of Mind AI would be able to sense your frustration or joy and change its behavior accordingly, just like a human friend would.

Key Aspirations:

- Emotional Intelligence: Recognizing and responding to human social cues.

- Intentionality: Understanding why a person is saying or doing something.

- Social Interaction: The ability to function as a social member of a group.

Potential Applications:

- Mental Health Bots: Assistants that can truly empathize with a patient’s emotional state.

- Advanced Social Robots: Robots that can care for the elderly by recognizing when they are lonely or in pain.

4. Self-Aware AI: The Ultimate Goal (and Mystery)

The final type of AI is Self-Aware AI. This type is purely hypothetical: it does not exist yet. A self-aware AI would not only understand emotions but would also have consciousness and a sense of self.

What Would It Look Like?

If a Theory of Mind AI says, “I know you are hungry,” a Self-Aware AI might say, “I know I am hungry too” (if it had a body) or “I understand my own existence as a machine”. These systems would be able to sense their own internal states and predict the feelings of others based on their own experiences.

Characteristics (Hypothetical):

- Consciousness: A sense of “I”.

- Independent Opinions: The ability to form thoughts and desires that weren’t programmed into them.

- Self-Reflection: Thinking about their own thought processes.

Future Outlook:

Some researchers see this as the “Grand Finale” of AI development. However, it raises serious ethical questions about machine rights and safety that we are only beginning to discuss.

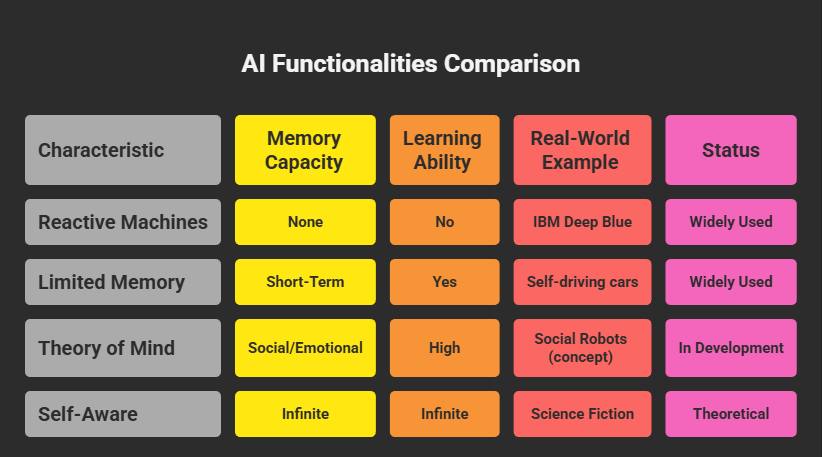

Comparison Table: AI Functionalities at a Glance

| Type | Memory Capacity | Learning Ability | Real-World Example | Status |
|---|---|---|---|---|
| Reactive Machines | None | No | IBM Deep Blue | Widely Used |
| Limited Memory | Short-Term | Yes | Self-driving cars | Widely Used |
| Theory of Mind | Social/Emotional | High (expected) | Social Robots (concept) | In Development |
| Self-Aware | Conscious | Unknown | None (science fiction only) | Theoretical |

Frequently Asked Questions

Q: Is ChatGPT self-aware?

A: No. ChatGPT is an advanced Limited Memory AI. While it can hold a convincing conversation, it does not have feelings or a conscious understanding of its own existence.

Q: Are self-driving cars reactive machines?

A: No, they are Limited Memory systems. They must remember the speed of the car next to them from two seconds ago to decide if they can safely change lanes.
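The two-second example in this answer can be worked through in code. In this hypothetical sketch the car measures the gap to a vehicle approaching from behind in the target lane, both now and two seconds earlier; the remembered reading is what lets it compute how fast that gap is shrinking. The function names, the 10-meter minimum gap, and the 3-second safety margin are made-up assumptions, not values from any real vehicle.

```python
def closing_speed(gap_before_m: float, gap_now_m: float, seconds: float) -> float:
    """Meters per second the gap is shrinking (negative means it is growing)."""
    return (gap_before_m - gap_now_m) / seconds


def safe_to_change_lanes(
    gap_before_m: float,
    gap_now_m: float,
    seconds: float = 2.0,
    min_gap_m: float = 10.0,  # hypothetical threshold
    min_time_s: float = 3.0,  # hypothetical safety margin
) -> bool:
    """Decide using the current gap plus the gap remembered `seconds` ago."""
    if gap_now_m < min_gap_m:
        return False  # too close right now, whatever the trend
    speed = closing_speed(gap_before_m, gap_now_m, seconds)
    if speed <= 0:
        return True  # the other car is holding back or falling behind
    return gap_now_m / speed >= min_time_s  # time until the gap would close


if __name__ == "__main__":
    print(safe_to_change_lanes(30, 20))  # True: closing at 5 m/s, 4 s to spare
    print(safe_to_change_lanes(30, 14))  # False: closing at 8 m/s, under 2 s to spare
```

Without the earlier reading, the function would see only a single gap and could not tell a car holding steady from one closing in fast.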

Q: When will we see Theory of Mind AI?

A: We are seeing early glimpses through “Affective Computing” (AI that detects emotions via facial expressions), but true Theory of Mind is likely years or even decades away.

Q: Is AI dangerous?

A: The risk currently lies in bias and privacy rather than a “Terminator” scenario. Because Limited Memory AI learns from human data, it can inherit human prejudices.
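The way bias can be inherited is easy to see in a toy model. In this hypothetical sketch, a system learns how often past loan applications from two made-up neighborhoods were approved, then uses those rates to judge new applicants. The records and function names are invented for illustration; the point is that if the past decisions were skewed, the learned rule repeats the skew, even though the model never sees whether an applicant is actually qualified.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


def learn_approval_rates(history: List[Tuple[str, bool]]) -> Dict[str, float]:
    """Learn, for each neighborhood, the share of past applications approved."""
    totals: Dict[str, int] = defaultdict(int)
    approvals: Dict[str, int] = defaultdict(int)
    for neighborhood, approved in history:
        totals[neighborhood] += 1
        approvals[neighborhood] += int(approved)
    return {n: approvals[n] / totals[n] for n in totals}


def predict_approval(rates: Dict[str, float], neighborhood: str) -> bool:
    """Approve when the neighborhood's historical approval rate is at least 50%."""
    return rates.get(neighborhood, 0.0) >= 0.5


if __name__ == "__main__":
    # Invented, deliberately skewed historical records.
    past = (
        [("north", True)] * 8 + [("north", False)] * 2
        + [("south", True)] * 3 + [("south", False)] * 7
    )
    rates = learn_approval_rates(past)
    print(rates)                             # {'north': 0.8, 'south': 0.3}
    print(predict_approval(rates, "north"))  # True
    print(predict_approval(rates, "south"))  # False
```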
