Artificial intelligence has moved from the pages of science fiction into the fabric of our everyday lives. Whether you are using ChatGPT to brainstorm ideas, relying on automation to manage your emails, or noticing how your social media feed seems to know exactly what you like, AI is the engine driving these modern experiences. However, for many beginners, the rapid influx of new technology comes with a confusing barrier of technical jargon. You might hear experts talk about “LLMs,” “parameters,” and “neural networks” and feel like you are listening to a foreign language.

1. Introduction

This guide is designed to solve that problem. By breaking down 101+ AI terms into simple, plain language, this compendium will give you a solid foundation of knowledge. You will learn core concepts, the basics of how machines learn, and the real-world terminology needed to navigate the evolving digital landscape.

2. What is Artificial Intelligence? (Quick Overview)

At its most basic level, Artificial Intelligence (AI) is a field of computer science and engineering devoted to creating systems capable of performing tasks that normally require human intelligence. This includes abilities like learning from experience, recognizing complex patterns, understanding human language, and making independent decisions.

It is helpful to visualize the relationship between different AI concepts as nested circles.

- AI is the largest circle, representing the broad goal of creating intelligent machines.

- Machine Learning (ML) sits inside AI; it is a specific method where computers learn from data rather than following rigid, hand-coded instructions.

- Deep Learning is a subset of ML that uses “deep” (many-layered) neural networks, loosely inspired by the human brain, to learn complex patterns.

- Generative AI is the newest layer, focusing on models that can create entirely original content like text, images, and video.

Real-world examples are everywhere: voice assistants like Siri or Alexa use AI to understand your speech, and recommendation systems on platforms like Netflix or Spotify use it to predict which movie or song you will enjoy next.

3. Why Learning AI Terms is Important

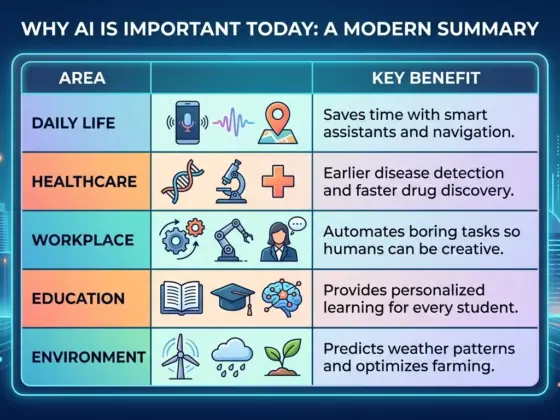

Mastering AI vocabulary is the first step toward remaining competitive in the digital age. Understanding these terms allows you to use AI tools—such as ChatGPT, Claude, or Midjourney—much more effectively.

This knowledge is essential for various groups:

- Students need it to participate in research and online courses.

- Bloggers and Creators use it to understand how “generative” tools create content and how to avoid “AI slop”.

- Developers require these terms to build and integrate AI into new applications.

- Business Owners must understand the distinction between different types of AI to make informed decisions about technology investments and strategy.

4. Core AI Terms (Basic Level – Beginner Friendly)

4.1 General AI Terms

- Artificial Intelligence (AI): The capability of a machine to simulate human-like behavior, such as reasoning and problem-solving.

- Algorithm: An ordered series of step-by-step instructions that guide a computer to solve a problem or accomplish a task.

- Model: A trained AI system (like GPT-4) that has learned to perform a task after being exposed to data.

- Data: The information used to teach an AI how to recognize patterns; it is the “fuel” that makes the system work.

- Training: The process where an AI model learns from a dataset to improve its performance.

- Prediction: The stage where a model applies what it has learned to make a “best guess” or generate a response to new information.

- Automation: Using technology to perform repetitive tasks without human intervention, such as in manufacturing or customer service.

- Neural Network: A computing system inspired by the human brain, made of layers of connected “nodes” that process information.

- Dataset: A large collection of observations or data points used to train or test a model.

- Big Data: Extremely large sets of information that are too complex for traditional software to manage, providing the foundation for modern AI training.
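
To make the terms Dataset, Training, Model, and Prediction concrete, here is a minimal sketch in plain Python. The dataset (hours studied versus exam score) is invented for illustration, and the function names `train` and `predict` are hypothetical; fitting a straight line by least squares is just one simple way a model can learn from data.

```python
# Toy dataset (made up for illustration): (hours studied, exam score).
dataset = [(1.0, 52.0), (2.0, 54.0), (3.0, 56.0), (4.0, 58.0)]


def train(data):
    """'Training': fit a line y = slope * x + intercept by least squares."""
    n = len(data)
    mean_x = sum(x for x, _ in data) / n
    mean_y = sum(y for _, y in data) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in data)
    variance = sum((x - mean_x) ** 2 for x, _ in data)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept  # these two numbers are the entire "model"


def predict(model, x):
    """'Prediction': apply the learned line to an input the model has not seen."""
    slope, intercept = model
    return slope * x + intercept


model = train(dataset)
print(model)                # the learned slope and intercept
print(predict(model, 5.0))  # best guess for 5 hours of study
```

Real AI models follow the same pattern, only with far more data and far more learned numbers than the two used here.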

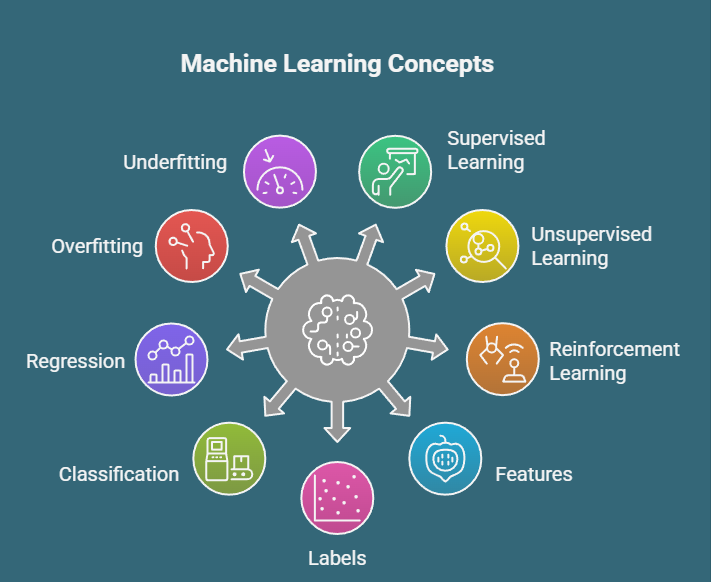

4.2 Machine Learning Terms

- Machine Learning (ML): A type of AI where computers learn from data and experience rather than being explicitly programmed for every scenario.

- Supervised Learning: Training a model using “labeled” data, where the computer is shown the correct answers by a “teacher”.

- Unsupervised Learning: A method where the model finds patterns in data on its own, without labels or a guide.

- Reinforcement Learning (RL): A trial-and-error approach where an AI learns by being rewarded for good actions and penalized for bad ones.

- Features: The specific characteristics or attributes of data that a model analyzes (e.g., the color or shape of a fruit).

- Labels: The “answers” or categories assigned to data points during supervised training (e.g., labeling a photo as a “cat”).

- Classification: A task where the AI sorts data into specific categories, such as identifying an email as “spam” or “not spam”.

- Regression: A task where the AI predicts a continuous numeric value, such as forecasting house prices or temperatures.

- Overfitting: A problem where a model fits its training data too closely, memorizing noise and quirks instead of general patterns, so it performs poorly on new, real-world information.

- Underfitting: When a model is too simple to capture the underlying patterns in the data.
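
The ideas of Features, Labels, Supervised Learning, and Classification can be sketched with a tiny nearest-neighbor classifier. The fruit measurements below are invented, and `classify` is a hypothetical helper; this is one of the simplest possible approaches, not how production systems are built.

```python
import math

# Labeled training data (made up). Features: (weight in grams, redness from 0 to 1).
training_data = [
    ((150.0, 0.9), "apple"),
    ((170.0, 0.8), "apple"),
    ((120.0, 0.2), "banana"),
    ((130.0, 0.1), "banana"),
]


def classify(features, examples):
    """Return the label of the closest labeled example (1-nearest-neighbor)."""
    nearest = min(examples, key=lambda example: math.dist(features, example[0]))
    return nearest[1]


print(classify((160.0, 0.85), training_data))  # a heavy, red fruit
print(classify((125.0, 0.15), training_data))  # a lighter, less red fruit
```

Because weight is measured in grams and redness on a 0 to 1 scale, weight dominates the distance here; real pipelines usually rescale features before comparing them.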

4.3 Deep Learning Terms

- Deep Learning: An advanced form of ML that uses many layers of neural networks to solve complex problems.

- Neural Network Layers: The structure of a network, typically consisting of an Input Layer, several Hidden Layers, and an Output Layer.

- Input Layer: The first layer of a network that receives raw data.

- Hidden Layer: The middle layers where most of the complex mathematical processing and feature extraction happen.

- Output Layer: The final layer that produces the prediction or result.

- Activation Function: A mathematical function applied to a neuron’s input that determines how strongly the neuron “fires,” which lets the network learn non-linear patterns.

- Backpropagation: The method used to tune the model’s internal settings by moving “backward” from an error to adjust the network’s weights.

- Epoch: One complete pass of the entire training dataset through the neural network.

- Batch Size: The number of training examples processed by the model in one single iteration.
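
The layer terms above can be illustrated with a forward pass through a very small network: two inputs, a hidden layer of two neurons, and one output neuron. The weights and biases are hand-picked for illustration only (a real network would learn them through training), and the helper names are hypothetical.

```python
import math


def relu(x):
    """ReLU activation: pass positive values through, clamp negatives to zero."""
    return max(0.0, x)


def sigmoid(x):
    """Sigmoid activation: squash any value into the range between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-x))


def layer(inputs, weights, biases, activation):
    """One layer: each neuron sums its weighted inputs, adds a bias, then activates."""
    return [
        activation(sum(w * v for w, v in zip(neuron_weights, inputs)) + b)
        for neuron_weights, b in zip(weights, biases)
    ]


# Hand-picked illustrative values, not learned ones.
hidden_weights = [[0.5, -0.2], [-0.3, 0.8]]
hidden_biases = [0.0, 0.1]
output_weights = [[1.0, -1.0]]
output_biases = [0.0]


def forward(inputs):
    """Input layer -> hidden layer (ReLU) -> output layer (sigmoid)."""
    hidden = layer(inputs, hidden_weights, hidden_biases, relu)
    return layer(hidden, output_weights, output_biases, sigmoid)[0]


print(forward([1.0, 2.0]))  # a single value between 0 and 1
```

Training would repeat this forward pass over batches of examples, epoch after epoch, using backpropagation to nudge the weights toward better outputs.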

5. Advanced AI Terms (Intermediate Level)

5.1 NLP (Natural Language Processing) Terms

- Natural Language Processing (NLP): The field of AI that enables machines to understand, interpret, and generate human language.

- Tokenization: The process of breaking down text into smaller, machine-readable units called “tokens” (which can be words, characters, or parts of words).

- Sentiment Analysis: Using AI to determine the emotional tone of text, such as whether a social media post is positive, negative, or neutral.

- Named Entity Recognition (NER): Automatically identifying and categorizing specific entities in text, such as names of people, places, or dates.

- Language Model: A system trained to estimate the likelihood of a sequence of words, effectively predicting what word comes next.

- Transformer: A groundbreaking neural network architecture that uses an “attention mechanism” to process data in parallel, forming the basis of models like GPT.

- Prompt Engineering: The skill of crafting specific questions or instructions to get the best possible response from an AI.
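
As a rough illustration of Tokenization and of a Language Model predicting the next word, the sketch below counts which word follows which in a tiny made-up sentence. It is a deliberately naive stand-in: modern models use subword tokenizers and transformers rather than word counts, and all function names here are hypothetical.

```python
from collections import Counter, defaultdict


def tokenize(text):
    """Naive word-level tokenizer: lowercase the text, then split on whitespace."""
    return text.lower().split()


def train_bigram_model(tokens):
    """Count how often each token is followed by each other token."""
    following = defaultdict(Counter)
    for current, nxt in zip(tokens, tokens[1:]):
        following[current][nxt] += 1
    return following


def predict_next(model, token):
    """Return the most frequent follower of `token`, or None if it was never seen."""
    followers = model.get(token)
    if not followers:
        return None
    return followers.most_common(1)[0][0]


corpus = "the cat sat on the mat and the cat slept"
tokens = tokenize(corpus)
bigram_model = train_bigram_model(tokens)

print(tokens)
print(predict_next(bigram_model, "the"))  # the word seen most often after "the"
```

Even this toy version shows the core idea: the model has no notion of meaning, only statistics about which tokens tend to follow others.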

5.2 Computer Vision Terms

- Computer Vision: The branch of AI that allows machines to interpret and analyze visual information from the world, such as images and video.

- Image Recognition: The ability of AI to identify what objects, people, or scenes are present in a photograph.

- Object Detection: A step beyond recognition that involves not just identifying an object but also locating its specific position within an image.

- Facial Recognition: A technology used to identify or verify a person’s identity by analyzing their facial features in a photo or video.

- OCR (Optical Character Recognition): Technology that scans images or documents and extracts the printed or written text from them.

5.3 Generative AI Terms

- Generative AI: AI models designed to create new, original content—ranging from text and code to images and music—based on patterns they have learned.

- Large Language Model (LLM): A massive AI model trained on trillions of words to understand and generate human-like language.

- Prompt: The user input—a question or instruction—that tells a generative AI what to produce.

- Fine-tuning: Taking a model that has already been trained and giving it additional, specific data to make it better at a particular task.

- Diffusion Models: A technology often used in image generators that creates an image by starting with random “noise” and gradually refining it into a clear picture.

- GAN (Generative Adversarial Network): A system of two competing neural networks: a “Generator” that creates data and a “Discriminator” that tries to tell it apart from real data. The competition pushes the Generator toward highly realistic output.

6. AI Tools & Technology Terms

- API (Application Programming Interface): The “invisible wiring” that allows different software programs to communicate with each other, such as an app using the OpenAI API to run a chatbot.

- Cloud Computing: Running AI processes on powerful remote servers (like those owned by Google or Amazon) rather than on your own local computer.

- Edge AI: Running AI directly on local devices, such as your smartphone or a smart home camera, which improves speed and privacy.

- Open Source AI: AI models or software whose code is public and can be used, modified, and shared by anyone (e.g., Llama).

- Proprietary Models: AI models that are owned and controlled by a specific company and are not available for public modification (e.g., GPT-4).

- AI Frameworks: Software libraries like TensorFlow and PyTorch that provide the building blocks developers use to create AI models.

7. AI Ethics & Risks Terminology

- Bias in AI: When a model produces prejudiced or unfair results because it learned from data that contained human biases.

- AI Ethics: The multidisciplinary field that studies how to maximize the benefits of AI while reducing risks like discrimination or misuse.

- Data Privacy: The set of principles and laws (like GDPR) designed to protect a person’s digital information from being misused by AI systems.

- Hallucination: A phenomenon where an AI confidently generates information that is completely false, inaccurate, or nonsensical.

- Explainability (XAI): The goal of making AI decision-making processes transparent and understandable to humans, rather than being a “black box”.

- AI Safety: Research focused on ensuring that AI systems act according to human values and do not cause harm, whether minor or catastrophic.

8. Real-World AI Use Case Terms

- Recommendation System: Algorithms that analyze your past behavior to suggest products, music, or movies you might like.

- Chatbot: A computer program designed to simulate conversation with human users, often used in customer service.

- Autonomous Vehicles: Self-driving cars that use AI to sense their surroundings and navigate without a human driver.

- Predictive Analytics: Using data and AI to identify the likelihood of future outcomes based on historical trends.

- Speech Recognition: Technology that allows a machine to identify and process spoken language into text.

- Fraud Detection: AI systems that monitor financial transactions in real-time to flag unusual activity and prevent theft.

9. 101+ AI Terms List (Quick Reference Section)

To help you cross the 101+ threshold, here is a concise reference for additional terms found in modern AI discussions:

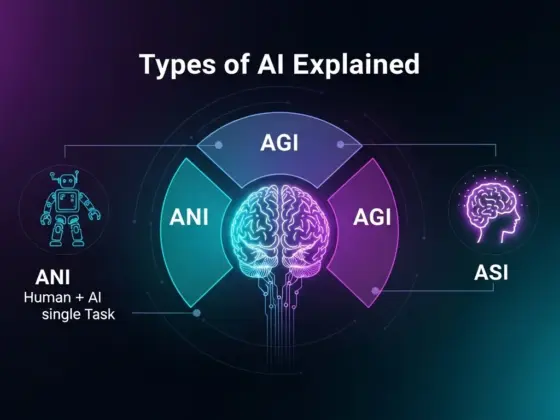

- AGI (Artificial General Intelligence): A theoretical AI that matches human capabilities across all cognitive tasks.

- ASI (Artificial Superintelligence): A hypothetical AI that surpasses human intelligence in every possible domain.

- ANI (Artificial Narrow Intelligence): AI designed for one specific task or a limited range of tasks; the AI systems in use today are generally placed in this category.

- Agentic AI / AI Agents: Systems that can act autonomously to complete multi-step tasks independently.

- Alignment: Ensuring AI understands and respects human values.

- AI Slop: Low-quality, generic content mass-produced by AI.

- Parameter: The internal “settings” of a model that store its learned knowledge.

- Context Window: The amount of text an AI model can “see” or remember at once.

- RAG (Retrieval-Augmented Generation): A technique where an AI searches external documents to provide more accurate answers.

- MoE (Mixture of Experts): A model architecture that uses only a fraction of its total “brain” (a few specialized sub-networks) for each request to save computation.

- Multimodal AI: A model that can process multiple types of data simultaneously, such as text, images, and audio.

- Temperature: A setting that controls how “creative” or “predictable” an AI’s response is.

- Word Embedding: Representing words as lists of numbers (vectors) so AI can capture their semantic meaning.

- Vector Database: A specialized database optimized for finding information based on meaning rather than just keywords.

- Zero-shot Learning: When a model performs a task without being given any specific examples.

- Few-shot Learning: When a model is given a small number of examples to learn a new task quickly.

- One-shot Learning: A variation of few-shot where only a single example is provided.

- Quantization: Reducing the precision of a model’s numbers to make it run faster on smaller devices.

- Distillation: Transferring knowledge from a large model to a smaller, more efficient one.

- Transfer Learning: Applying knowledge gained from solving one problem to a different but related problem.

- Latent Space: The mathematical space where a model organizes different concepts relative to each other.

- Hyperparameter: Settings configured by human developers before training starts to control the learning process.

- Chain of Thought (CoT): Encouraging the AI to “think out loud” step-by-step before giving a final answer.

- System Prompt: Hidden instructions that steer an AI’s overall behavior and persona.

- Guardrails: Safety filters that block illegal or unwanted AI outputs.

- Benchmark: Standardized tests used to compare the performance of different AI models.

- Jailbreaking: Attempts by users to bypass an AI’s safety restrictions.

- Scaling Laws: The discovery that AI models get predictably better as they get more data and compute power.

- Synthetic Data: Artificially generated data, often produced by another AI model or a simulation, used for training in place of or alongside real-world data.

- Turing Test: A classic assessment of whether a machine can exhibit behavior indistinguishable from a human.

- Vibe Coding: A term describing coding with AI by describing goals rather than writing every line of code.

- Reasoning Models: AI specifically fine-tuned to deliberate and verify its own logic.

- Test-time Compute: Spending more time processing at the moment of generating an answer to improve accuracy.

- World Model: An AI that can predict future states of its environment based on actions.

- KV Cache: A memory optimization that reuses previous calculations during text generation.

- Weights: The learned values that determine the strength of connections between neurons in a network.

- Biases (Parameter): Built-in values that shift a neuron’s activation threshold.

- Orchestration: Coordinating multiple AI tools or agents into a unified workflow.

- Prompt Injection: A security risk where malicious input tries to override or hijack a model’s core instructions.

- RLHF: Reinforcement Learning from Human Feedback, used to make chatbots more helpful.

- Self-Supervised Learning: When a model learns patterns from unlabeled data by hiding parts of it and trying to predict them.

- Transformer Block: A single layer of the transformer architecture.

- Attention Mechanism: The part of a transformer that decides which words in a sentence are most relevant to each other.

- Embeddings: The mathematical vectors that represent the meaning of tokens.

- GPU: Graphics Processing Unit, the high-powered hardware used for AI training.

- Edge Computing: Processing data closer to where it is collected to save time and energy.

- Foundation Model: A massive base model that can be adapted for many different tasks.

- Instruction Tuning: Training a model specifically to follow user commands.

- Linear Regression: A basic algorithm for predicting a number based on relationships.

- Logistic Regression: A basic algorithm for predicting a category (usually yes/no).

- Decision Tree: A flowchart-like structure used for making predictions.

- Random Forest: An ensemble method that combines many decision trees for better accuracy.

- SVM (Support Vector Machine): An algorithm used to classify data into complex groups.

- K-Nearest Neighbor: Classifying data based on how close it is to other examples.

- Clustering: Grouping similar data points together in unsupervised learning.

- Dimensionality Reduction: Simplifying data by reducing the number of features while keeping most of the important information.

- PCA (Principal Component Analysis): A common technique for simplifying complex datasets.

- Manifold: A mathematical concept often used to describe the underlying structure of data.

- Loss Function: A mathematical score that tells the model how “wrong” its predictions are.

- Gradient Descent: The primary optimization method used to reduce the error in a model.

- Stochastic Gradient Descent: A faster variant of gradient descent that updates weights after each example (or small batch of examples) instead of after the whole dataset.

- Learning Rate: A setting that controls how much the model’s weights change during each training step.

- Softmax: A mathematical function that converts the model’s output into a set of probabilities.

- ReLU (Rectified Linear Unit): A popular activation function used in deep learning.

- Sigmoid: An activation function that maps any value into a range between 0 and 1.

- Dropout: A technique used during training to prevent overfitting by randomly “turning off” neurons.

- Regularization: Techniques used to keep a model simple and prevent it from becoming too complex.

- Preprocessing: Cleaning and formatting data before it is fed into an AI model.

- Inference: The process of using a trained model to generate predictions or answers for new inputs.

- Latency: The delay or “lag” time between giving a prompt and receiving a response.

- Throughput: The amount of data or tokens an AI can process per second.

- Perplexity: A measurement of how well a probability model predicts a sample.

- LLMOps: The set of practices for managing the lifecycle of large language models.

- Model Drift: When an AI’s performance degrades over time as real-world data changes.

- Data Leakage: A mistake where information from the test dataset “leaks” into the training dataset.

- Red Teaming: Intentionally trying to break or trick an AI to find its security flaws.

- Black Box: A system whose internal decision-making is too complex for humans to easily understand.

- Transparent AI: Systems designed to be easily auditable and understandable.

- Accountability: The principle that humans are responsible for the actions of the AI they deploy.

- Bias Mitigation: Active steps taken to reduce prejudice in an AI system.

- Fairness: Ensuring that AI systems treat all groups of people equally.

- Sustainability: Focusing on reducing the massive energy and water consumption of AI data centers.
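
Several of the numeric terms in this list (Softmax, Temperature, Loss Function, Gradient Descent, Learning Rate) are easier to grasp with a small sketch. The scores and the loss function below are invented for illustration, and the function names are hypothetical; the code is a simplified picture of the ideas, not how any particular AI product implements them.

```python
import math


def softmax(scores, temperature=1.0):
    """Convert raw scores into probabilities; temperature rescales the scores first."""
    scaled = [s / temperature for s in scores]
    largest = max(scaled)  # subtracting the max keeps exp() numerically stable
    exps = [math.exp(s - largest) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def gradient_descent(learning_rate=0.1, steps=50):
    """Minimize the toy loss (w - 3)^2, whose gradient is 2 * (w - 3)."""
    w = 0.0  # starting guess
    for _ in range(steps):
        gradient = 2.0 * (w - 3.0)
        w -= learning_rate * gradient  # step against the gradient
    return w


scores = [2.0, 1.0, 0.1]
print(softmax(scores, temperature=0.5))  # low temperature: the top choice dominates
print(softmax(scores, temperature=2.0))  # high temperature: choices are closer together
print(gradient_descent())                # approaches 3.0, where the loss is smallest
```

A low temperature makes the output more predictable and a high temperature makes it more varied, while the learning rate controls how large each step toward the lowest loss is.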

10. How to Learn AI Faster (Beginner Tips)

Learning AI does not have to be overwhelming. To progress quickly:

- Start with the basics: Ensure you understand the difference between AI, ML, and Deep Learning before diving into complex math.

- Use AI tools practically: The best way to learn is by doing. Experiment with ChatGPT or Claude to see how different prompts change the results.

- Follow reliable blogs: Staying updated with expert insights (like this one!) helps you keep pace with the 2026 landscape.

- Watch tutorials: Visual learners can benefit from step-by-step video guides on neural networks or prompt engineering.

- Practice prompt writing: Mastering “Prompt Engineering” will immediately improve your experience with every generative tool.

11. Common Mistakes Beginners Make

- Trying advanced topics too early: Jumping into “Mixture of Experts” before understanding a “Neural Network” can lead to burnout.

- Ignoring fundamentals: You do not need a computer science degree, but ignoring how models use “Data” and “Weights” makes it harder to troubleshoot issues.

- Not practicing: Reading about AI is not enough; you must interact with the models to understand their nuances.

- Believing AI is fully “intelligent”: It is important to remember that AI does not “know” or “think” like a human; it is identifying statistical patterns.

12. FAQs

Q1: What are AI terms?

AI terms are the technical vocabulary and acronyms (like LLM, NLP, or AGI) used to describe the technologies, architectures, and ethical considerations within the field of artificial intelligence.

Q2: How many AI terms should beginners learn?

Starting with about 10–20 core concepts (like Machine Learning, Algorithms, and Prompts) is enough to use modern tools effectively. Expanding to 101+ terms provides a professional-level foundation.

Q3: Is AI difficult to understand?

While the underlying math is complex, the core concepts can be explained simply using analogies to the human brain or daily tasks.

Q4: What is the difference between AI and ML?

AI is the broad goal of making machines smart, while Machine Learning (ML) is the specific method of using data to teach machines how to get smarter on their own.

Q5: What are the most important AI terms?

For most users, the “big five” are Artificial Intelligence, Machine Learning, Large Language Model (LLM), Neural Network, and Generative AI.

13. Conclusion

Understanding AI terminology is no longer just for scientists; it is a necessary skill for everyone living in the 21st century. By mastering this guide, you have moved from a confused observer to an informed participant in the AI revolution.

Bookmark this guide! AI is evolving fast, and having a reliable glossary to return to will be invaluable as new models are released in the coming years.